April 14, 2026


6 SEO tools review mistakes costing you clicks

A practical troubleshooter for fixing SEO tool reviews that don’t earn clicks—match search intent, write clickable titles, use meta space strategically, cover deal-breaker features, show real workflows, and compare tools fairly.

Your SEO tool review can be accurate and still lose the click. Most misses happen before anyone reads your verdict: the page targets the wrong intent, the title blends into the SERP, or the snippet wastes precious characters.

This troubleshooter helps you diagnose what’s holding your review back and fix it fast. You’ll learn the signals that your angle is wrong, the title patterns that win attention, what to put (and cut) in metas, how to cover features without bloat, and how to compare tools in a way readers trust.

Wrong Search Intent

You can write a solid SEO tool review and still lose clicks if you target the wrong job. If the query wants “best for ecommerce,” your “full review” reads like the wrong answer.

Intent mismatch signals

Your analytics will tell you when the SERP wanted a different page than you published.

- High impressions, low CTR on review keywords

- Short dwell time on tool pages

- Bounce back to comparison queries

Treat these like a mislabelled shelf. Users leave fast because they came for a different product.
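One way to surface the first signal is to scan a Search Console performance export for queries with many impressions and few clicks. The sketch below is illustrative only: the `QueryRow` fields, the sample rows, and both thresholds (`min_impressions`, `max_ctr`) are assumptions to tune against your own data, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One row of a query-level performance export (hypothetical shape)."""
    query: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Guard against division by zero for queries with no impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def flag_intent_mismatch(rows, min_impressions=1000, max_ctr=0.01):
    """Return high-impression, low-CTR queries, largest impression count first.

    Both thresholds are assumed starting points, not industry standards.
    """
    flagged = [
        r for r in rows
        if r.impressions >= min_impressions and r.ctr < max_ctr
    ]
    return sorted(flagged, key=lambda r: r.impressions, reverse=True)


# Made-up sample rows for illustration.
rows = [
    QueryRow("ahrefs review", 5200, 31),
    QueryRow("ahrefs vs semrush", 800, 40),
    QueryRow("best seo tool for ecommerce", 3100, 140),
]

flagged = flag_intent_mismatch(rows)
for row in flagged:
    print(f"{row.query}: {row.impressions} impressions, CTR {row.ctr:.2%}")
```

A flagged query is only a prompt to go look at the live SERP. The numbers cannot tell you which format the query wants, only that your current page is not earning the click.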

Fix the angle fast

You can correct intent without retesting the tool.

- Identify the dominant SERP format for the query.

- Match the query stage: research, shortlist, or decision.

- Rewrite the title and meta to promise the SERP’s outcome.

Align the promise first. The content can catch up after the clicks return, especially if you follow a clear search-intent guide to map queries to the right page type.

Example intent rewrites

Keep the same testing notes, but change the frame users actually want to click. A post titled “Ahrefs Review” can become “Ahrefs: Best for Competitor Research?” or “Ahrefs vs Semrush for Keyword Gaps,” with the same screenshots and findings.

You’re not changing the tool. You’re changing the question you answer.

Unclickable Titles

Even with decent rankings, a weak title can bleed clicks. In SEO tool reviews, the title is your promise, and vague promises get ignored.

| Weak title | Why it fails | Compelling alternative | What it signals |
|---|---|---|---|
| SEO Tools Review | No hook, no buyer | Best SEO Tools (2026): Tested | Fresh, evidence-based |
| Ahrefs Review | Says nothing new | Ahrefs Review: Worth $129/mo? | Pricing decision help |
| Semrush vs Ahrefs | Overdone, no angle | Semrush vs Ahrefs: For Agencies | Clear audience fit |
| Best SEO Tool | Generic, untrusted | Best SEO Tool for Beginners | Specific use case |
| Top SEO Tools List | Feels lazy, thin | 7 SEO Tools I Actually Use | Real-world credibility |

If your title can’t answer “for who” or “for what decision,” your CTR won’t recover.

Meta Descriptions That Waste Space

You have 155-ish characters to earn the click. Most tool review meta descriptions spend them on filler.

- “Best SEO tool” with no unique proof

- “In-depth review” without a takeaway

- Feature laundry list with no outcome

- Vague praise like “powerful” or “easy”

- No audience fit or use case

Swap fluff for specifics people scan for: price, best-for, standout test result, and a clear comparison angle. If you can’t name a differentiator, you don’t have a snippet yet. (See Google’s guidance on how to write meta descriptions.)
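A quick pre-publish check can catch the most common waste. The sketch below is a rough heuristic, not a rule from Google: the 155-character budget comes from the estimate above, the filler phrases come from the list above, and treating "contains a digit" as a proxy for a concrete specific (price, count, test result) is an assumption. The two sample descriptions are invented for illustration.

```python
import re

MAX_LENGTH = 155  # the "155-ish" budget; actual truncation varies
FILLER_PHRASES = ("best seo tool", "in-depth review", "powerful", "easy")


def audit_meta(description: str) -> dict:
    """Report length, filler phrases, and whether any number appears."""
    text = description.strip()
    lowered = text.lower()
    filler = [
        phrase for phrase in FILLER_PHRASES
        # Word boundaries avoid matching "easy" inside e.g. "uneasy".
        if re.search(rf"\b{re.escape(phrase)}\b", lowered)
    ]
    return {
        "length": len(text),
        "too_long": len(text) > MAX_LENGTH,
        "filler": filler,
        # Crude proxy for a specific such as a price or test result.
        "has_number": bool(re.search(r"\d", text)),
    }


weak = "An in-depth review of a powerful and easy SEO tool."
strong = (
    "Ahrefs at $129/mo: tested on keyword gaps and competitor research. "
    "Who it fits and where the limits bite."
)

print(audit_meta(weak))
print(audit_meta(strong))
```

Passing the check does not make a snippet good. It only confirms that the description fits the space and leads with something other than adjectives.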

Thin Feature Coverage

Thin coverage kills trust fast.

If your review dodges “Can it do X?” readers bounce to the next result.

Missing deal-breakers

Readers scan for constraints before benefits because constraints decide fit.

Leave them out and your review reads like affiliate fluff.

- Pricing tiers and upgrade triggers

- Usage limits and throttling rules

- Integrations and export formats

- Data sources for each metric

- Update frequency for key reports

If you can’t answer these, you’re not reviewing the tool.

Coverage checklist

Use a repeatable checklist so every tool gets the same scrutiny.

It keeps you honest, even when the UI is confusing.

- Map buyer questions by role and budget.

- Build a feature matrix across the top competitors.

- Test each claim inside the product, not on marketing pages.

- Add screenshots plus the exact settings path.

The settings path is your proof, and proof earns clicks.
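The checklist above can be kept as a small feature matrix so gaps are visible before you publish. This is a minimal sketch under assumed names: `DEAL_BREAKERS` mirrors the deal-breaker list earlier in this section, while the tool names and the notes (including the settings paths) are placeholders, not findings about any real product.

```python
DEAL_BREAKERS = [
    "pricing_tiers",
    "usage_limits",
    "integrations",
    "data_sources",
    "update_frequency",
]


def coverage_gaps(matrix: dict) -> dict:
    """Map each tool to the deal-breakers that have no verified note yet."""
    return {
        tool: [item for item in DEAL_BREAKERS if not notes.get(item)]
        for tool, notes in matrix.items()
    }


# Placeholder notes; each value should record what you verified and where.
matrix = {
    "Tool A": {
        "pricing_tiers": "Checked in Settings > Billing",
        "usage_limits": "Hit the report cap on the entry plan",
        "integrations": "CSV export confirmed",
        "data_sources": "Stated in product docs, confirmed in UI",
        "update_frequency": "Observed refresh over one week",
    },
    "Tool B": {
        "pricing_tiers": "Checked in Settings > Plans",
        "usage_limits": "",  # not yet tested
        "integrations": "CSV export confirmed",
    },
}

for tool, missing in coverage_gaps(matrix).items():
    status = "complete" if not missing else "missing: " + ", ".join(missing)
    print(f"{tool}: {status}")
```

An empty or absent note counts as a gap, which keeps untested features from slipping into the review as if they were confirmed.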

Depth without bloat

You don’t need every toggle to sound credible.

You need the 5–7 workflows your reader is actually buying the tool for, like “find keyword gaps” or “audit Core Web Vitals.”

Pick critical workflows, then show inputs, outputs, and one real constraint per workflow.

That’s how your review stays scannable while still feeling tested.

No Real-World Workflow

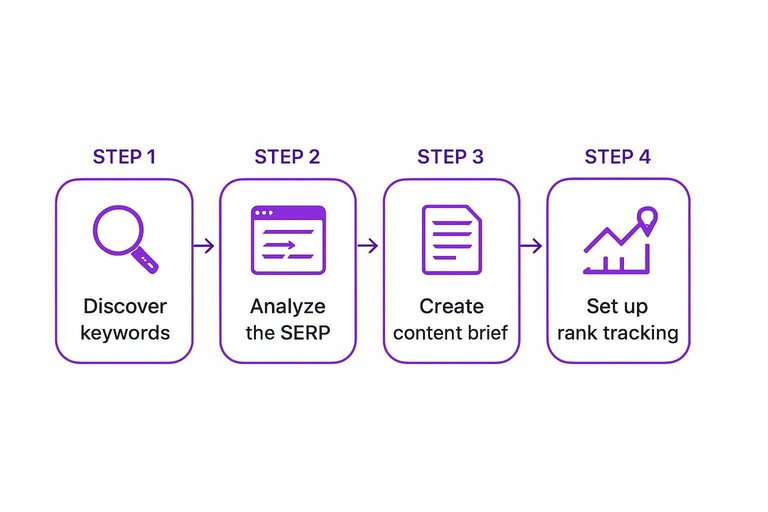

A tool can “sound powerful” and still fail the first time you try it on Monday morning. Build your review around a repeatable workflow readers can copy, like “find a keyword, ship a brief, track results.”

Workflow to demo

Show one end-to-end run so readers can map it to their own project, not your opinions.

- Discover keywords: pull 50 ideas, filter by intent, save a short list.

- Analyze the SERP: note page types, angles, and obvious gaps in top results.

- Create a content brief: export headings, questions, and internal links to include.

- Set up rank tracking: add targets, locations, devices, and competitors.

- Report outcomes: export a weekly snapshot and annotate what changed.

If your review can’t complete this loop, it’s a feature tour, not a review. (Google also recommends demonstrating first-hand evidence in high-quality reviews.)

Proof readers trust

Readers trust artifacts they can inspect, not adjectives like “robust” or “easy.”

- Sample exports: CSV, PDF, or share links

- Exact settings used: locations, language, device

- Before/after screenshots: SERP, rankings, traffic

- Limitations hit: caps, delays, missing data

- Workarounds used: manual steps, extra tools

When you show your receipts, skepticism turns into action.

Make it replicable

Give prerequisites and setup, or your workflow becomes a magic trick. Include the project baseline, like “new domain” versus “aged site,” plus any required accounts, integrations, and tracked keywords.

Add time estimates for each stage, like “15 minutes to build a brief” or “7 days to see ranking movement.” That lets readers predict effort, not just outcomes.

Make the path copyable, and you stop losing readers who bounce back to the SERP.

Unfair Comparisons

If your tool comparison feels like a fight, readers feel it too. The fastest way to lose clicks is a “Tool A destroys Tool B” claim built on uneven conditions.

Common comparison traps

Bad comparisons don’t look dishonest at first. They just stack the deck in quiet ways.

- Comparing Free vs Pro tiers

- Testing on different keyword sets

- Highlighting only “winning” features

- Skipping learning curve and support

The same pitfalls show up in comparisons of AI content platforms whenever the inputs aren’t held constant across tools.

Apples-to-apples method

You need a repeatable setup your readers could copy. Consistency beats cleverness.

- Match plans by price or tier level.

- Use the same sites and keywords.

- Score identical criteria with weights.

- State what you didn’t test.

That disclosure line is your credibility anchor, not a legal footnote.
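Scoring identical criteria with weights can be as simple as a weighted average. The sketch below assumes a 0 to 10 scale and three hypothetical criteria. The weights and scores are invented to show the arithmetic, and the function rejects any tool scored on a different set of criteria than the weights cover.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of per-criterion scores.

    Raises ValueError if the tool was not scored on exactly the weighted
    criteria, which is the apples-to-apples guard.
    """
    if scores.keys() != weights.keys():
        raise ValueError("Scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Hypothetical weights: decide these before testing, then publish them.
weights = {"keyword_research": 3, "site_audit": 2, "reporting": 1}

# Invented scores on an assumed 0-10 scale.
tool_a = {"keyword_research": 8, "site_audit": 6, "reporting": 9}
tool_b = {"keyword_research": 6, "site_audit": 9, "reporting": 6}

print("Tool A:", weighted_score(tool_a, weights))  # (24 + 12 + 9) / 6 = 7.5
print("Tool B:", weighted_score(tool_b, weights))  # (18 + 18 + 6) / 6 = 7.0
```

Publishing the weights alongside the totals lets a reader with different priorities re-run the math and reach their own ranking.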

When to recommend both

Sometimes the honest answer is “it depends,” but you must make it useful. Position tools by job, budget, or team reality, like “Tool A for solo audits, Tool B for agency reporting.”

You protect trust by narrowing the claim, then giving a clean handoff to the right buyer.

Run a 10-Minute Fix Pass Before You Publish

- Confirm intent: identify the SERP’s dominant format (“best,” “review,” “vs,” “pricing”) and rewrite your intro/H1 to match it.

- Stress-test your title: make the outcome clear (who it’s for + what it solves) and add a differentiator (data, workflow, or constraint).

- Tighten the meta: lead with the primary promise, name 1–2 proof points, and remove filler (no repeated brand names or generic adjectives).

- Patch feature coverage: add the deal-breakers your buyer cares about (limits, data sources, reporting, integrations, pricing gotchas) before adding more “nice-to-haves.”

- Show a real workflow: demonstrate one repeatable task end-to-end (setup → action → output), with screenshots or exact steps.

- Re-check fairness: compare equivalent plans/settings, disclose assumptions, and recommend both when trade-offs are legitimate.

Frequently Asked Questions

Does an SEO tools review still influence rankings and clicks in 2026, or are AI summaries replacing them?

SEO tools reviews still drive clicks because buyers want proof, screenshots, pricing context, and hands-on results that AI summaries usually don’t provide. Reviews that add original testing and clear recommendations are most likely to earn traffic from both search and AI-driven results.

How do I measure whether my SEO tools review is actually increasing clicks?

Track Google Search Console impressions, CTR, and average position for your review keywords, then compare a 28-day period before vs. after changes. For deeper insight, add GA4 events for outbound affiliate clicks and table/button clicks to see what sections drive action.

How long does it take for updates to an SEO tools review to improve CTR and rankings?

CTR changes can show up within 3 to 14 days once Google recrawls and displays your new title/meta, while ranking movement usually takes 4 to 8 weeks. Speed depends on crawl frequency, competition, and how substantial the update is.

Can I publish a credible SEO tools review if I don’t have access to every paid plan?

Yes: test the free trial or entry plan, document what you verified hands-on, and clearly label features you didn’t test. Supplement with official docs, changelogs, and pricing pages, but avoid presenting untested features as confirmed.

Should I use schema markup for an SEO tools review, like Product, Review, or FAQ schema?

Often yes: use Product (or SoftwareApplication) schema for the tool, plus Pros/Cons and pricing where applicable, and add FAQ schema for common questions on the page. Avoid fake star ratings unless they reflect a real, verifiable scoring system and comply with Google’s review rich results guidelines.
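For the FAQ part of that answer, the markup is a schema.org `FAQPage` object embedded as JSON-LD. The sketch below generates it from question and answer pairs. The `faq_jsonld` helper and the sample pair are illustrative assumptions, and valid markup does not guarantee that Google will display a rich result.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Sample pair adapted from the FAQ above; the text must match the visible page.
pairs = [
    (
        "Can I publish a credible SEO tools review without every paid plan?",
        "Yes. Test the free trial or entry plan, document what you verified "
        "hands-on, and clearly label features you didn't test.",
    ),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the block from the same source as the visible FAQ keeps the two in sync, which matters because the structured data is expected to reflect what readers actually see on the page.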

Publish Better SEO Reviews

Fixing intent, titles, snippets, and comparisons is straightforward on paper—but producing consistent, workflow-driven SEO tools reviews at scale is where most teams stall.

Skribra generates SEO-optimized review content with strong titles, meta descriptions, and structured feature coverage, then publishes to WordPress—try the 3-Day Free Trial to keep your pipeline moving.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
