March 19, 2026


Advanced On Page SEO Optimization for Enterprise Sites

An advanced pillar guide to enterprise on-page SEO optimization that works within real governance and template constraints—engineer crawl budget, control indexation at scale, leverage information architecture, and systematize snippets, structured data, differentiation, and Core Web Vitals tradeoffs.

If your site has millions of URLs, “fix the meta titles” isn’t a strategy—it’s a risk. One change can ripple through templates, create index bloat, or tank performance across entire sections before anyone approves a rollback.

This guide shows you how to optimize on-page SEO like an enterprise: make reversible template-first improvements, use logs to direct crawl budget, build an indexation control plane, route link equity with intentional IA, and run repeatable systems for snippets, schema, content differentiation, and Core Web Vitals.

Enterprise constraints

Enterprise on-page SEO is less about clever tags and more about operating inside real governance. Your “advanced” work is whatever survives approvals, ships on release trains, and scales across thousands of URLs. Think “repeatable changes with predictable risk,” not “one perfect page.”

Governance realities

Approvals and release trains decide your SEO backlog faster than any audit tool. When brand says “no,” legal wants footnotes, and engineering ships quarterly, the game changes.

Map SEO tasks to the workflow you actually have:

- Tie each change to an owner: brand, legal, product, or platform.

- Write acceptance criteria like “no layout shift” or “no new claims.”

- Bundle SEO into existing ceremonies: sprint planning, CAB, release notes.

- Use templates and components to avoid one-off exceptions.

“Advanced” is getting the change approved, shipped, and repeatable without political debt.

Template-first thinking

On enterprise sites, the highest ROI work is rarely per-URL. You win by fixing the template that generates the problem.

- Rank page types by traffic, indexation, and revenue exposure.

- Estimate lift per template, not per page.

- Prioritize shared components: headers, breadcrumbs, schema blocks.

- Track coverage: “X templates touch Y% of organic landings.”

- Ship defaults, then allow overrides only when justified.

If one commit fixes 50,000 pages, that’s not optimization. That’s leverage.

Risk and reversibility

Enterprise SEO fails when you can’t undo mistakes quickly. Build reversibility into every change.

- Add guardrails: tests for titles, canonicals, robots, and structured data.

- Ship behind feature flags for templates and rendering paths.

- Roll out by segment: one locale, one directory, one page type.

- Monitor regressions: crawl errors, index coverage, and ranking deltas.

- Keep rollback paths: previous templates, redirects, and config snapshots.

Your best migration plan is the one you can reverse before Google notices.
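The guardrails listed above could start as a small check that runs against rendered HTML in CI. This is a minimal sketch, assuming pages are available as HTML strings; the names `HeadSignals` and `check_page` and the exact rule set are illustrative assumptions, not part of any specific tool.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collects the title, canonical links, and meta robots values from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.canonicals = []
        self.robots = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonicals.append(a.get("href") or "")
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            self.robots.append((a.get("content") or "").lower())

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_page(html, expect_indexable=True):
    """Return a list of guardrail violations for one rendered page."""
    parser = HeadSignals()
    parser.feed(html)
    problems = []
    if not parser.title.strip():
        problems.append("missing title")
    if len(parser.canonicals) != 1:
        problems.append(f"expected 1 canonical, found {len(parser.canonicals)}")
    if expect_indexable and any("noindex" in r for r in parser.robots):
        problems.append("unexpected noindex")
    return problems
```

Running a check like this on one fixture page per template, before and after a flagged rollout, gives you a cheap signal for when to roll back.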

Mental model

Use a simple model to stay sane at scale: signals, surfaces, systems. Signals are what you emit in HTML, headers, and feeds, like “canonical,” “internal link,” or “Product schema.”

Surfaces are where those signals appear, like templates, components, and rendering layers. Systems are what distribute and validate them, like CMS rules, build pipelines, QA checks, and monitoring.

Fix systems to improve surfaces, so your signals stay correct across crawl, index, and rank.

Crawl budget engineering

Enterprise crawl budget is engineering, not luck. Your job is to keep bots out of infinite spaces while keeping users fully served.

Think “search results with 12 filters” versus “a million URL variants.” That gap is where rankings die quietly.

Facets and parameters

Set rules before you touch robots.txt, or you'll play whack-a-mole forever.

- Classify facets: “indexable” (demand) vs “utility” (navigation only).

- Block utility patterns in robots.txt, targeting parameter groups, not single values.

- Canonicalize allowed variants to the closest indexable parent or clean URL.

- Remove internal links to blocked variants, but keep on-page UX controls.

- Add static links only to indexable facet combos, from hubs and category copy.

You’re not “blocking bots.” You’re defining which combinations deserve a page.

For the underlying mechanics and pitfalls at scale, follow Google’s crawl budget guidance for large sites.
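The facet classification above can be expressed as a small policy function. This is a sketch under stated assumptions: the parameter names in `INDEXABLE_PARAMS` and the two-facet cap are hypothetical, and unknown parameters default to "utility" so new ones never become indexable by accident.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical policy: only these parameters may produce an indexable page.
INDEXABLE_PARAMS = {"brand", "color"}
# Hypothetical cap on how many facets one indexable URL may combine.
MAX_INDEXABLE_FACETS = 2


def classify_url(url):
    """Return 'clean', 'indexable', or 'utility' for a possibly faceted URL."""
    keys = [k for k, _ in parse_qsl(urlsplit(url).query, keep_blank_values=True)]
    if not keys:
        return "clean"
    # Any parameter outside the allowlist makes the whole URL utility-only.
    if any(k not in INDEXABLE_PARAMS for k in keys):
        return "utility"
    if len(keys) > MAX_INDEXABLE_FACETS:
        return "utility"
    return "indexable"
```

The same classification can drive robots.txt patterns, canonical targets, and which facet links get rendered as static anchors, so the three stay consistent.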

Internal crawl paths

Crawl depth is a design choice. You can shape it with a few structural moves.

- Build hub pages for top intents, not just taxonomy.

- Use pagination that links forward and backward, not endless “load more.”

- Add HTML sitemaps for long-tail categories and high-value templates.

- Reduce orphan pages with contextual modules like “related,” “compatible,” “popular.”

- Keep faceted navigation crawlable only where it’s index-worthy.

If Google needs five clicks to find revenue pages, you built a maze.

Log-driven decisions

Logs tell you what bots actually did, not what your crawler simulation guessed. Pull a week of access logs and make waste visible.

Track three metrics by template and directory: hit rate (200s on indexable pages), redirect density (3xx share), and error density (4xx/5xx share). Then chart crawl frequency against business value, so “/search?” doesn’t out-crawl “/category/”.

When a low-value template dominates bot hits, you've found your crawl budget leak. Map the fix into your broader technical plan.
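The three log metrics can be computed with a few lines once log lines are parsed. This is a rough sketch, assuming you already have `(path, status)` tuples for verified bot requests; `template_of` is a hypothetical mapping that uses the first path segment, and the hit rate here counts all 2xx responses without checking indexability.

```python
from collections import Counter, defaultdict


def template_of(path):
    """Hypothetical mapping: use the first path segment as the template key."""
    segment = path.split("?")[0].strip("/").split("/")[0]
    return "/" + segment if segment else "/"


def bucket_of(status):
    """Group an HTTP status code into ok, redirect, error, or other."""
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if status >= 400:
        return "error"
    return "other"


def crawl_metrics(hits):
    """hits: iterable of (path, status) tuples for bot requests."""
    counts = defaultdict(Counter)
    for path, status in hits:
        counts[template_of(path)][bucket_of(status)] += 1
    report = {}
    for template, c in counts.items():
        total = sum(c.values())
        report[template] = {
            "hits": total,
            "hit_rate": c["ok"] / total,
            "redirect_density": c["redirect"] / total,
            "error_density": c["error"] / total,
        }
    return report
```

Sorting the report by `hits` and comparing it with revenue per template makes the "/search? out-crawls /category/" problem visible at a glance.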

Bot handling nuance

Rate limiting is a scalpel. Use it wrong and you punish real users.

Return 429 only when you can serve a clear retry signal, such as a Retry-After header, and only for abusive bursts from specific agents or IP ranges. Avoid dynamic rendering as a default; it creates two realities to debug. Prefer differential caching: longer TTLs for bots on stable HTML, shorter TTLs for users where personalization matters.

The goal is simple: bots slow down without your conversion funnel even noticing.
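One way to picture the "429 with a retry signal" rule is a sliding-window limiter keyed by agent or IP range. This is an illustrative sketch only: `BurstLimiter`, its thresholds, and the client key are assumptions, and a production setup would more likely live in the CDN or load balancer.

```python
import math
import time
from collections import defaultdict, deque


class BurstLimiter:
    """Sliding-window limiter that answers 429 with a Retry-After header."""

    def __init__(self, max_hits, window_s):
        self.max_hits = max_hits
        self.window_s = window_s
        self.hits = defaultdict(deque)

    def check(self, client_key, now=None):
        """Return (status, headers) for one request from client_key."""
        now = time.monotonic() if now is None else now
        recent = self.hits[client_key]
        # Drop timestamps that have left the window.
        while recent and now - recent[0] >= self.window_s:
            recent.popleft()
        if len(recent) >= self.max_hits:
            # Tell the client when the oldest hit leaves the window.
            wait = max(1, math.ceil(self.window_s - (now - recent[0])))
            return 429, {"Retry-After": str(wait)}
        recent.append(now)
        return 200, {}
```

Because the limiter is keyed per client, a burst from one crawler does not affect other visitors.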

Indexation control plane

At enterprise scale, indexation is a control plane, not a checklist. One wrong rule can flood Google with faceted junk, or hide revenue pages behind a “canonicalized” mistake. Treat canonicals, noindex, and sitemaps like production config, with tests and rollbacks.

Canonical at scale

Canonicals should express intent, not hope, especially with variants and parameters.

- Canonicalize variant pages to the primary product URL, not the category

- Strip tracking parameters in canonicals and internal links

- Canonicalize cross-domain only with ownership and parity

- Enforce one rule per template, no exceptions

- Detect loops and chains in crawls

If canonicals disagree across templates, Google will pick for you.
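Two of the rules above, stripping tracking parameters and detecting loops and chains, are easy to sketch. This assumes a crawl export shaped as a `canonical_of` dict mapping each URL to its declared canonical; the tracking keys listed are common examples, not a complete list.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed tracking parameters; extend to match your own analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid"}


def strip_tracking(url):
    """Remove tracking parameters while keeping all other query parameters."""
    parts = urlsplit(url)
    kept = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in TRACKING_KEYS and not k.startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def canonical_path(url, canonical_of):
    """Follow declared canonicals; return (final_url, hops, is_loop)."""
    seen = [url]
    current = url
    while True:
        # A URL with no declared canonical is treated as self-canonical.
        target = canonical_of.get(current, current)
        if target == current:
            return current, len(seen) - 1, False
        if target in seen:
            return target, len(seen), True
        seen.append(target)
        current = target
```

Any result with more than one hop is a chain worth flattening, and any result flagged as a loop should fail the build.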

Noindex strategy

Choose the lever based on the outcome you want: crawl control or index control.

- Use noindex, follow for thin pages you still want crawled.

- Use disallow only when crawl budget is the problem.

- Use URL removal for urgent, temporary takedowns.

- Put staging behind authentication, and noindex internal search results.

- Verify with GSC inspection and a controlled crawl sample.

Pick one primary control per URL type, or you will debug ghosts.
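A common "ghost" is a page that is both disallowed in robots.txt and marked noindex: if the crawler cannot fetch the page, it never sees the noindex. The sketch below flags that conflict using Python's standard `urllib.robotparser`, which does simple prefix matching and may not mirror every rule Googlebot supports; the `pages` input (URL to meta robots value) is an assumed crawl export.

```python
from urllib.robotparser import RobotFileParser


def hidden_noindex(robots_txt, pages, agent="Googlebot"):
    """Return URLs that carry noindex but are blocked from crawling.

    pages: dict mapping URL -> meta robots content (assumed crawl export).
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return sorted(
        url
        for url, robots_meta in pages.items()
        if "noindex" in robots_meta.lower() and not parser.can_fetch(agent, url)
    )
```

Each URL this returns has two controls fighting each other, so decide per URL type which one is primary and remove the other.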

Duplicate clusters

Duplicates rarely arrive one-by-one; they arrive as clusters from templates, filters, and pagination. Build clusters using URL patterns plus content signatures, like title+H1+main text hashes, then score them by traffic, links, and conversions.

Set thresholds that force decisions, like “<50 organic visits in 90 days” or “<20% unique body text.” Then consolidate with canonicals and redirects, differentiate with unique inventory and copy, or prune with noindex.
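The content-signature idea can be sketched with a hash over normalized title, H1, and main text. Note the limitation: an exact hash only groups pages that are identical after normalization, so near-duplicates would need a similarity measure instead. The page dict fields are assumptions about your crawl export.

```python
import hashlib
import re
from collections import defaultdict


def signature(title, h1, body):
    """Hash of whitespace-collapsed, case-folded title + H1 + main text."""
    parts = (re.sub(r"\s+", " ", p).strip().casefold() for p in (title, h1, body))
    return hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()


def duplicate_clusters(pages):
    """pages: iterable of dicts with url, title, h1, body keys.

    Returns a sorted list of clusters, each a sorted list of 2+ URLs.
    """
    groups = defaultdict(list)
    for page in pages:
        key = signature(page["title"], page["h1"], page["body"])
        groups[key].append(page["url"])
    return sorted(sorted(urls) for urls in groups.values() if len(urls) > 1)
```

Joining each cluster with traffic, link, and conversion data is what turns the list into a consolidate, differentiate, or prune decision.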

Indexing diagnostics

GSC statuses are symptoms; the cause is usually your rules colliding. “Duplicate, Google chose different canonical” often means conflicting signals between canonicals, internal links, and sitemaps, like listing parameter URLs in the sitemap while canonicals point elsewhere.

Triangulate each pattern using three views: Page indexing states (the report formerly called Coverage), sitemap inclusion, and live URL inspection. When those disagree, your system is telling Google two stories, and Google is believing the louder one.
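The sitemap-versus-canonical conflict described above is simple to detect offline. A minimal sketch, assuming you have the sitemap URL list and a `canonical_of` dict from a crawl (both hypothetical inputs):

```python
def conflicting_signals(sitemap_urls, canonical_of):
    """Return (sitemap_url, declared_canonical) pairs that disagree.

    A URL missing from canonical_of is treated as self-canonical.
    """
    return sorted(
        (url, canonical_of[url])
        for url in sitemap_urls
        if canonical_of.get(url, url) != url
    )
```

Every pair returned is a URL you are asking Google to index while also telling it that another URL is the real one.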

Information architecture leverage

On enterprise sites, IA is your relevance multiplier. The goal is discoverability that also concentrates authority, so “important” pages get found and reinforced.

Entity-first taxonomy

Model your taxonomy around entities and their attributes, not your org chart. Think “Running Shoes → Brand → Cushioning,” not “Products → Footwear → Stuff.”

Each node should map to one primary intent with matching signals: a stable URL, one H1, and copy that doesn’t drift into adjacent intents. If “/running-shoes/nike/” exists, don’t also make “/brands/nike-running/” compete for the same query space.

That alignment is how you stop cannibalization before it starts.

Link equity routing

Your internal links are routing rules, not decoration. Treat navigation and contextual links differently, on purpose.

- Keep global nav to top tasks and top categories

- Use contextual links to push money pages

- Reduce footer links to legal and utilities

- Avoid sitewide links to mid-value pages

- Standardize anchors to match primary intent

If everything links to everything, nothing ranks like it should.

Pagination and series

Pagination is where big sites quietly leak crawl budget. You want predictable sequencing, clean canonicals, and zero duplicate entry points.

For series, meet crawler expectations: a clear first page, stable URLs, and consistent internal links between neighbors. Avoid “page=1” variants and duplicate faceted states that create infinite near-copies, even when the UX feels harmless.

The win is fewer URLs competing for the same authority.

Orphan prevention

Orphans happen during launches, migrations, and “just ship it” content pushes.

- Generate a daily list of indexable URLs from your CMS and sitemaps.

- Crawl your site and count internal inlinks per indexable URL.

- Fail builds when any new URL has zero inlinks.

- Enforce a minimum threshold, like 3 inlinks per template type.

- Alert owners with the exact pages that should link in.

Make orphaning a broken contract, not a recurring cleanup.
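The build gate above reduces to counting distinct linking pages per indexable URL. This sketch assumes a crawl export of `(source_url, target_url)` pairs and applies one global threshold; the per-template thresholds the list mentions would need a lookup by template instead.

```python
def inlink_failures(indexable_urls, links, min_inlinks=3):
    """Return {url: inlink_count} for indexable URLs below the threshold.

    links: iterable of (source_url, target_url) pairs from a crawl.
    Self-links are ignored and each source page counts once.
    """
    sources = {}
    for src, dst in links:
        if src != dst:
            sources.setdefault(dst, set()).add(src)
    failures = {}
    for url in sorted(indexable_urls):
        count = len(sources.get(url, ()))
        if count < min_inlinks:
            failures[url] = count
    return failures
```

A CI step can fail when the returned dict is non-empty, and the zero-count entries are the true orphans.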

SERP snippet optimization

Your snippet is your billboard. At enterprise scale, tiny wording choices compound into real revenue.

Treat titles, meta descriptions, and headings like a system, not a one-off rewrite. “Good enough” becomes “same as everyone” fast.

Title system design

You need titles you can generate safely across thousands of URLs. Otherwise, you’ll ship duplicates, weak query matching, or brand spam.

- Define 2–3 title formulas per template and intent.

- Set constraints: 45–60 chars, primary term first, brand last.

- Add uniqueness tokens: model, location, year, category, or modifier.

- Map query variants to slots, like [primary] + [modifier] + [entity].

- Validate in CI: length, duplicates, and missing required entities.

A title system turns “write better titles” into something your CMS can’t mess up.
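A title system along these lines might look like the sketch below: one formula that fills the slots, plus a validator for length and duplicates. The brand name `ExampleCo`, the separator, and the function names are placeholders; the 45 to 60 character range comes from the constraints above.

```python
BRAND = "ExampleCo"  # hypothetical brand name
MIN_LEN, MAX_LEN = 45, 60


def build_title(primary, modifier, entity, brand=BRAND):
    """Fill the [primary] + [modifier] + [entity] slots, with brand last."""
    core = " ".join(part for part in (primary, modifier, entity) if part)
    return f"{core} | {brand}"


def validate_titles(titles_by_url):
    """Return {url: [problems]} for length and duplicate violations."""
    first_seen = {}
    problems = {}
    for url, title in titles_by_url.items():
        issues = []
        if not MIN_LEN <= len(title) <= MAX_LEN:
            issues.append(f"length {len(title)} outside {MIN_LEN}-{MAX_LEN}")
        key = title.casefold()
        if key in first_seen:
            issues.append(f"duplicate of {first_seen[key]}")
        else:
            first_seen[key] = url
        if issues:
            problems[url] = issues
    return problems
```

Running the validator over every generated title in CI means a bad formula fails the build instead of reaching the index.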

Duplication detection

Enterprise sites don’t just duplicate titles. They create near-duplicates that compete, confuse intent, and flatten CTR.

- Cluster titles/metas with cosine or Jaccard similarity thresholds.

- Flag pairs above 0.85 similarity and shared primary term.

- Prioritize by impressions, not URL count.

- Escalate when two pages rank for the same query.

- Track cannibalization via overlapping query sets in GSC.

If two pages look interchangeable to you, Google will treat them like substitutes.
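Jaccard similarity over word tokens is the simplest of the measures mentioned above. The sketch below compares every pair, which is fine for a sample but quadratic in the number of titles; it also leaves out the "shared primary term" condition, which would need your keyword map.

```python
from itertools import combinations


def tokens(text):
    """Lowercased whitespace tokens as a set."""
    return set(text.lower().split())


def jaccard(a, b):
    """Jaccard similarity of two strings' token sets, from 0.0 to 1.0."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def near_duplicates(titles_by_url, threshold=0.85):
    """Return (url_1, url_2, score) for pairs at or above the threshold."""
    pairs = []
    for (u1, t1), (u2, t2) in combinations(sorted(titles_by_url.items()), 2):
        score = jaccard(t1, t2)
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return pairs
```

Joining the flagged pairs with impression data then gives the priority order the list calls for.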

Heading hierarchy nuance

Headings are your on-page contract with intent. Your H1 should name the page’s primary entity and promise, like “Enterprise SSO Pricing.”

Use H2s to enumerate sub-intents and attributes, such as “Compliance,” “Integrations,” and “Deployment models.” Multiple H1s in component frameworks are fine if only one is exposed per rendered view, and the rest are demoted or hidden from the accessibility tree.

When headings match entities and tasks, your snippet feels inevitable, not “close enough.”

SERP testing loops

You can’t debate CTR into existence. You need controlled changes with guardrails, or you’ll chase noise.

Pick a stable page set, change only title or meta, and log the exact deploy date. Measure in GSC by page group and query class, then wait out the typical 7–21 day lag before calling winners.

Your edge comes from iteration speed, not one perfect rewrite.

Structured data at scale

Enterprise schema breaks when teams ship “just one more” template. You need components and data contracts, not page-by-page markup, because rich results punish inconsistency.

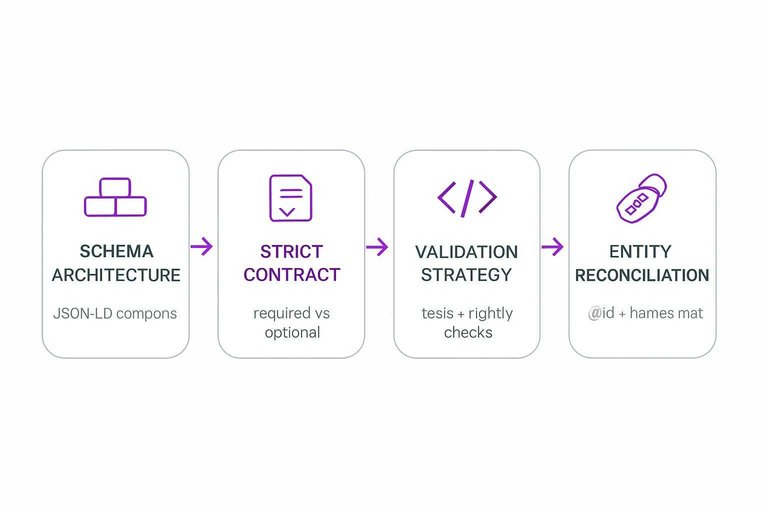

Schema architecture

Build JSON-LD like you build UI. Use componentized blocks (Product, Offer, BreadcrumbList) with shared helpers for dates, currency, and IDs, then compose them per template so a PDP and a category page never “argue” about the primary entity.

Define a strict contract per template: required fields, optional fields, and allowed schema types. If a page can be both Product and Article, pick one as primary and link the other via hasPart or mentions to prevent conflicting interpretations.
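A per-template contract can be as plain as a dictionary plus a checker. The template names, allowed types, and required fields in `CONTRACTS` are illustrative assumptions; real contracts would follow the rich result requirements for each type.

```python
# Hypothetical per-template contracts: allowed types and required fields.
CONTRACTS = {
    "pdp": {
        "allowed": {"Product", "BreadcrumbList"},
        "required": {"Product": {"@id", "name"}},
    },
    "category": {
        "allowed": {"ItemList", "BreadcrumbList"},
        "required": {"ItemList": {"itemListElement"}},
    },
}


def contract_violations(template, blocks):
    """blocks: list of JSON-LD dicts emitted by one page of this template."""
    contract = CONTRACTS[template]
    problems = []
    for block in blocks:
        schema_type = block.get("@type")
        if schema_type not in contract["allowed"]:
            problems.append(f"{schema_type} not allowed on {template}")
            continue
        missing = contract["required"].get(schema_type, set()) - set(block)
        if missing:
            problems.append(f"{schema_type} missing {sorted(missing)}")
    return problems
```

Because the contract is data, adding a template means adding an entry, not writing new validation code.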

Eligibility edge cases

Rich result eligibility fails on edge cases, not the happy path. UGC and commerce data are where you usually cross the line.

- Exclude UGC reviews without identity and moderation signals

- Emit AggregateRating only with review count and visible reviews

- Suppress Offer when price or availability is unknown

- Block “always in stock” defaults for out-of-catalog items

- Avoid self-serving LocalBusiness reviews on owned pages

Your schema should degrade gracefully, because partial eligibility beats a policy-triggered wipeout.
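Graceful degradation can be built into the component that emits the markup. In this sketch, the `product` dict and its field names (`price`, `availability`, `review_count`, `reviews_visible`) are assumed inputs from your commerce and reviews systems; the builder omits Offer and AggregateRating rather than emit defaults.

```python
def product_jsonld(product):
    """Build Product JSON-LD, omitting blocks whose inputs are incomplete."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "@id": product["url"] + "#product",
        "name": product["name"],
    }
    price = product.get("price")
    availability = product.get("availability")
    # Emit Offer only when both price and availability are known.
    if price is not None and availability:
        data["offers"] = {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": product["currency"],
            "availability": f"https://schema.org/{availability}",
        }
    rating = product.get("rating")
    count = product.get("review_count", 0)
    # Emit AggregateRating only with a real count and visible reviews.
    if rating is not None and count > 0 and product.get("reviews_visible"):
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": count,
        }
    return data
```

A page with missing price data still ships valid Product markup; it just stops claiming things it cannot back up.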

Validation strategy

Treat schema like code, because it is code.

- Classify issues into “blocks rich results” vs “noise” per template.

- Add schema tests in CI using fixed fixtures and snapshots.

- Run Rich Results and Schema validators on key URLs nightly.

- Alert on rich result impression drops by template, not sitewide.

- Triage failures to the owning system: CMS, commerce, or reviews.

Warnings are fine until they correlate with volatility, then they’re production bugs.

Entity reconciliation

Schema has to agree with what your page claims in plain text. If your JSON-LD says “ACME Pro 2” but the H1 says “ACME Pro II,” you’ve created two entities, and Google will pick one.

Standardize identifiers across systems: stable @id URLs, consistent brand and product names, and breadcrumbs that match the rendered navigation. When multiple platforms contribute data, pick one canonical source of truth per field and enforce it at the contract boundary.
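A basic reconciliation check compares the rendered H1 with the name in JSON-LD. This sketch assumes a crawl export with `h1` and `jsonld_name` fields (hypothetical names) and only normalizes case and whitespace, so "ACME Pro 2" versus "ACME Pro II" is reported as a mismatch.

```python
import re


def normalize_name(name):
    """Collapse whitespace and fold case for comparison."""
    return re.sub(r"\s+", " ", name).strip().casefold()


def name_mismatches(pages):
    """Return (url, h1, jsonld_name) where the two names disagree."""
    return [
        (p["url"], p["h1"], p["jsonld_name"])
        for p in pages
        if normalize_name(p["h1"]) != normalize_name(p["jsonld_name"])
    ]
```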

Content differentiation systems

Enterprise templates are efficiency machines. They also create “same-page syndrome” at scale.

Your job is to design repeatable uniqueness. Then you enforce it with gates, not heroics.

Template content slots

You need predefined places where uniqueness must exist. Otherwise, every page ships with the same filler.

- Intro slot: 120–200 words, intent-matched

- FAQ slot: 3–5 questions, pulled from query logs

- Comparison slot: “X vs Y” module, category-specific

- Editorial slot: expert note, opinionated and sourced

- Local/segment slot: audience-specific constraints and examples

Treat uniqueness like a requirement, not a suggestion. QA gets easier when the rules are explicit.

Programmatic SEO safeguards

Generated pages should earn the right to exist. Put checks in the pipeline before anything hits indexable URLs.

- Validate intent fit against a page-type keyword map.

- Require completeness for critical fields and attributes.

- Block publish if duplication crosses your similarity threshold.

- Enforce internal-link minimums from relevant hubs.

- Queue edge cases for human review, not auto-launch.

Stop bad pages upstream. Deindexing later is where budgets go to die.

Cannibalization control

Cannibalization is usually a clustering problem, not a “rankings” problem. Two pages answer the same query cluster, and Google rotates them like a slot machine.

Detect it by grouping queries into clusters, then mapping clusters to URLs. When two URLs share the same cluster, pick a winner and act.

Consolidate into one page when both are weak, retarget one page when intents differ, or adjust internal links to signal the primary. That’s the difference between coordination and coincidence.
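The cluster-to-URL mapping can be sketched as follows. It assumes rows of `(query, url, clicks)` such as a Search Console export, and a precomputed `cluster_of` dict from query to cluster label; how you build those clusters is outside this sketch.

```python
from collections import defaultdict


def cannibalized_clusters(rows, cluster_of, min_clicks=1):
    """Return {cluster: [urls by clicks, descending]} where 2+ URLs compete.

    rows: iterable of (query, url, clicks) tuples.
    cluster_of: dict mapping query -> cluster label (assumed input).
    """
    clicks = defaultdict(lambda: defaultdict(int))
    for query, url, n in rows:
        cluster = cluster_of.get(query)
        if cluster is not None:
            clicks[cluster][url] += n
    result = {}
    for cluster, by_url in clicks.items():
        urls = {u: c for u, c in by_url.items() if c >= min_clicks}
        if len(urls) > 1:
            result[cluster] = sorted(urls, key=lambda u: (-urls[u], u))
    return result
```

The first URL in each list is the current click leader, which is a reasonable starting candidate for the winner but not a substitute for checking intent.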

E-E-A-T signals

Trust has to be templated, or it never scales. The easiest win is making “who wrote this” and “why you should believe it” impossible to miss.

Add author blocks with credentials, cite primary sources in consistent modules, and show “last updated” with what changed. Mirror it in schema so machines see the same story.

When every template ships proof, your best pages stop carrying the whole domain, and the gains compound when governance is consistent.

At scale, validate governance against Google’s structured data guidelines and policies.

Core Web Vitals tradeoffs

Enterprise performance work is never “just make it faster.” You’re juggling crawlability, personalization, and templates that ship weekly. Get the rendering and hydration wrong, and your pages look great in Lighthouse but leak rankings in production.

Rendering strategy choices

Pick a rendering mode per template, not per site, because “/product” and “/account” have different SEO and latency needs.

| Template type | Best default | Personalization need | SEO risk |
|---|---|---|---|
| Category / PLP | SSG or ISR | Low to medium | Low |
| Product / PDP | ISR + SSR edges | Medium | Medium |
| Editorial / Guides | SSG | Low | Lowest |
| Account / App | CSR + API | High | High |
| Search results | SSR or ISR | Medium to high | Medium |

Standardize the decision by template, then enforce it with routing rules and build budgets.

JS and hydration

Hydration is where good CWV scores go to die, especially with “helpful” widgets and tag managers.

- Split critical and non-critical JS, then ship only critical at first paint.

- Defer third-party widgets until interaction, not just load.

- Replace heavy components with HTML fallbacks for bots and no-JS.

- Audit hidden content, especially tabs and accordions, for indexability.

- Cap long tasks by chunking work and avoiding synchronous re-renders.

If your main thread is busy, Google and users both wait.

Media and fonts

Media is your fastest CWV win, and your easiest CLS trap.

- Enforce responsive images with `srcset` and correct sizes.

- Serve via CDN with stable cache keys.

- Preload the true LCP image, not the hero container.

- Use `font-display: swap` plus tight preloads.

- Reserve space for embeds with fixed aspect ratios.

If the layout moves after “render,” you didn’t render; you guessed.

RUM-driven prioritization

Lab scores mislead at enterprise scale because a synthetic run on one device and network hides your real pain. RUM shows you which templates, devices, and regions are failing, and why.

Slice RUM by page type and device class, then prioritize the worst-percentile template paths like “P75 mobile PDP on 4G.” Fix that cohort first, then re-measure before touching anything else.

Your roadmap should follow the slowest users, not the loudest dashboards.
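Slicing RUM into cohorts and ranking them by P75 takes little code. The sketch below assumes samples shaped as `(template, device, lcp_ms)` tuples and uses the nearest-rank percentile method; a real pipeline would likely add network type and region as extra cohort keys.

```python
import math
from collections import defaultdict


def p75(values):
    """Nearest-rank 75th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def worst_cohorts(samples, top=3):
    """Return [((template, device), p75_lcp_ms)] for the slowest cohorts.

    samples: iterable of (template, device, lcp_ms) tuples from RUM.
    """
    groups = defaultdict(list)
    for template, device, lcp_ms in samples:
        groups[(template, device)].append(lcp_ms)
    ranked = sorted(((p75(v), k) for k, v in groups.items()), reverse=True)
    return [(key, value) for value, key in ranked[:top]]
```

The first cohort in the output is the one to fix and re-measure before touching anything else.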

Turn Optimization Into a Governed, Testable System

- Start with constraints: document governance, define what’s template-controlled vs. page-level, and set a rollback plan for every change.

- Instrument reality: pull log files and indexing diagnostics to identify wasted crawl paths, duplicate clusters, and parameter/facet hotspots.

- Build the control planes: standardize canonical/noindex rules, establish snippet/title generation with duplication detection, and deploy schema with validation and entity reconciliation.

- Roll out in phases: prioritize IA changes that route equity, add differentiation slots to templates, and ship CWV improvements based on RUM—not lab scores—then iterate with a steady SERP testing loop.

Frequently Asked Questions

- Does on page SEO optimization still matter in 2026 with Google’s AI Overviews and LLM-driven search?

- Yes—on page SEO optimization still drives rankings and visibility because Google uses on-page signals to understand entities, intent, and page quality. It also increases eligibility for rich results and improves snippet performance in AI-influenced SERPs.

- Do I need to update on page SEO optimization on every page, or should enterprise teams prioritize specific templates first?

- Prioritize the templates that generate the most organic traffic and revenue and those that produce the most indexable URLs (often PDP, category/PLP, and location templates). Fixing one template can improve thousands of pages faster than one-off optimizations.

- How do I measure on page SEO optimization improvements on an enterprise site without waiting months?

- Track leading indicators in 2–4 weeks: improved crawl stats (Search Console Crawl Stats), fewer index coverage issues, higher impressions/average position, and better CTR on updated templates. Validate at scale with log files, GSC URL Inspection sampling, and automated SERP snippet tests.

- Can I use AI to scale on page SEO optimization for titles, meta descriptions, and on-page copy without risking duplication or quality issues?

- Yes, usually by using structured inputs (product attributes, category facets, location data) and strict rules so outputs stay unique per URL. Add automated QA (similarity checks, length limits, forbidden terms) and human review for top templates to prevent brand and compliance errors.

- How long does on page SEO optimization take to show results on enterprise websites?

- Most enterprise sites see early movement in 4–8 weeks after release (crawl, indexation, and snippet changes) and clearer ranking gains in 8–16 weeks, depending on release cadence and how quickly Google recrawls key templates. Large template changes often compound over 1–2 quarters as coverage expands.

Operationalize Enterprise On-Page SEO

Enterprise on-page SEO optimization is only as strong as your ability to execute consistently across thousands of URLs while balancing crawl budget, indexation, and CWV tradeoffs.

Skribra generates and publishes SEO-optimized content at scale—with structured formatting, metadata, images, and WordPress integration—so your teams can sustain differentiation systems; start with the 3-Day Free Trial.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
