March 30, 2026


Advanced Tools in SEO: How They Work Together

A pillar guide to building an advanced SEO toolchain that works as a system—not a pile of tools—covering sensing/decision/execution layers, high-signal data sources, entity+keyword systems, technical audit orchestration, backlog prioritization, automation patterns, and action-driven reporting.

If your SEO stack feels “busy” but your results don’t, the problem usually isn’t effort—it’s coordination. Tools collect data, dashboards multiply, and audits pile up, yet decisions stay slow and fixes ship inconsistently.

This pillar breaks down how advanced SEO tools are meant to work together: how data flows from sensing to decisions to execution, where to trust (and distrust) key data sources, and how to turn technical findings and content opportunities into a prioritized backlog and repeatable workflows that stakeholders actually act on.

Toolchain Mental Model

Treat your advanced SEO stack like a toolchain, not a toolbox. One layer senses reality, the next decides, and the last executes changes. The trick is that every output becomes an input upstream, like “logs → hypotheses → deploy → logs.”

Sensing Layer

Your sensing layer is observability for search, not reporting for slides. It exists to catch regressions early and expose what Google and users actually experienced.

Technical signals: crawl coverage, response codes, CWV, indexation deltas.

Content signals: query impressions, cannibalization, snippet swaps, SERP intent shifts.

Authority signals: link velocity, referring domains, brand mentions, competitor gaps.

When sensing is solid, arguments end quickly because you can point to a trace.

Decision Layer

Decision tools turn noisy signals into a ranked backlog you can defend. You want fewer “maybe” tickets and more bets with explicit confidence.

Clustering groups queries by intent so you stop shipping one-off pages.

Forecasting estimates impact ranges so you can compare apples to apples.

Prioritization models weight effort, risk, and upside with clear assumptions.

If you can’t attach confidence and cost, you’re not deciding, you’re guessing.

Execution Layer

Execution is where SEO becomes a delivery problem, not a research project. Your CMS, deployment pipeline, linking engine, and outreach system should make change cheap and reversible.

Ship changes through templates, components, and redirects you can audit.

Automate internal links with rules, then measure the blast radius.

Run outreach like a pipeline, with targets, states, and outcomes.

Close the loop with measurement and rollback, or you’re just accumulating SEO debt.

Integration Contracts

Integration contracts keep your stack joinable when tools disagree. Define the schemas once, then enforce them everywhere.

- Use a canonical URL key for every dataset.

- Classify page type with a shared taxonomy.

- Store query entity and intent labels.

- Timestamp all events in UTC.

- Version your rules and mappings.

Without contracts, your “insights” collapse the moment you change a crawler.
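As a rough illustration of the first contract, here is a minimal Python sketch of a canonical URL key. The function name `canonical_url_key` and the `TRACKING_PARAMS` list are assumptions made for this example, not a standard. Which parameters and path rules count as "the same page" depends on your site.

```python
from urllib.parse import urlsplit, parse_qsl, urlencode

# Hypothetical list of tracking parameters to drop; adjust to your own site.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "gclid"}


def canonical_url_key(url: str) -> str:
    """Normalize a URL into a stable join key shared by every dataset."""
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    # Path case is preserved because many servers treat paths as case-sensitive.
    path = parts.path.rstrip("/") or "/"
    # Keep only non-tracking parameters, sorted so ordering never changes the key.
    query = sorted(
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k.lower() not in TRACKING_PARAMS
    )
    key = host + path
    if query:
        key += "?" + urlencode(query)
    # Scheme and fragment are dropped on purpose in this sketch.
    return key
```

With this, `https://Example.com/Pricing/?utm_source=x` and `http://example.com/Pricing` collapse to the same key, so crawl, GSC, and log rows can join on it.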

Data Sources That Matter

Every SEO tool is a lens, not a truth machine. Compare datasets on purpose, or you’ll optimize for one vendor’s worldview and miss the site’s real constraints.

Search Console Nuance

Search Console is your closest thing to “Google said so,” but it’s still a processed export. Treat it like an aggregated survey, not raw event data.

Query anonymization and row limits hide part of the long tail, so query-level rows rarely sum to the property totals. Build a page–query cube, then segment by device and country before you draw conclusions. Watch for aggregation traps like one URL variant absorbing metrics for many.
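A page–query cube can be as small as a dictionary keyed by its dimensions. The sketch below assumes you already have flattened rows with `page`, `query`, `device`, `country`, `clicks`, and `impressions` fields. Those field names and the helper names are assumptions for illustration, not the shape of any particular export.

```python
from collections import defaultdict


def build_cube(rows):
    """Aggregate rows into a (page, query, device, country) cube."""
    cube = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for r in rows:
        key = (r["page"], r["query"], r["device"], r["country"])
        cube[key]["clicks"] += r["clicks"]
        cube[key]["impressions"] += r["impressions"]
    return dict(cube)


def slice_by(cube, device=None, country=None):
    """Sum the cube per page for one segment; None means 'all values'."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for (page, _query, dev, ctry), metrics in cube.items():
        if device is not None and dev != device:
            continue
        if country is not None and ctry != country:
            continue
        totals[page]["clicks"] += metrics["clicks"]
        totals[page]["impressions"] += metrics["impressions"]
    return dict(totals)
```

Comparing `slice_by(cube, device="MOBILE")` against `slice_by(cube)` is a quick way to see whether a "sitewide" change is really a single-segment change.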

Analytics And Attribution

Analytics data is great for outcomes, but messy for causes. You need rules that keep SEO from getting blended into “everything.”

- Separate brand vs non-brand landing cohorts

- Split SEO from email and paid retargeting

- Use server-side tagging for resilience

- Model cookie loss with cohort decay

- Validate channel rules with log hits

If SEO “improves” only in attribution, you fixed reporting, not rankings.
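The brand vs non-brand split is usually just a pattern match on the query. Here is a minimal sketch, assuming a hypothetical brand called "Acme". The pattern, the `split_brand` name, and the row shape are placeholders you would replace with your own brand terms and misspellings.

```python
import re

# Hypothetical brand terms; replace with your own names and common misspellings.
BRAND_PATTERN = re.compile(r"\b(acme|acme\s?crm|akme)\b", re.IGNORECASE)


def split_brand(rows):
    """Total clicks for brand and non-brand query cohorts."""
    cohorts = {"brand": 0, "non_brand": 0}
    for r in rows:
        cohort = "brand" if BRAND_PATTERN.search(r["query"]) else "non_brand"
        cohorts[cohort] += r["clicks"]
    return cohorts
```

Version the pattern alongside your other rules, because a changed brand list silently rewrites historical cohorts.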

Crawlers And Renderers

Crawlers tell you what a bot can fetch, and renderers tell you what a bot can execute. Mixing them up hides JavaScript failures behind “200 OK” comfort.

Compare raw HTML to rendered DOM for key templates. Catch SPA hydration gaps where titles, links, or canonicals appear only after JS. Hunt infinite scroll traps and JS-dependent links that disappear without user events.
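The raw-versus-rendered comparison can be sketched with the standard library alone. This example assumes you already have both HTML strings (for instance, one from a plain fetch and one saved from a headless browser); it does not do the rendering itself. `SeoTagParser` and `render_gap` are names invented for this sketch.

```python
from html.parser import HTMLParser


class SeoTagParser(HTMLParser):
    """Collect the title, canonical, and link hrefs from one HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.canonical = None
        self.links = set()
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonical = a.get("href")
        elif tag == "a" and a.get("href"):
            self.links.add(a["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data.strip()


def extract(html):
    parser = SeoTagParser()
    parser.feed(html)
    parser.close()
    return parser


def render_gap(raw_html, rendered_html):
    """Report SEO-critical elements that exist only after JavaScript runs."""
    raw, dom = extract(raw_html), extract(rendered_html)
    return {
        "title_js_only": not raw.title and bool(dom.title),
        "canonical_js_only": raw.canonical is None and dom.canonical is not None,
        "links_js_only": sorted(dom.links - raw.links),
    }
```

Any `True` flag or non-empty `links_js_only` list on a key template is worth a closer look, since it means the element depends on script execution.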

Server Log Telemetry

Logs show what bots and users actually requested, not what tools assume. Use them to baseline crawl behavior and detect shifts before traffic drops.
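A crawl baseline can start as a daily count of bot hits by status code. The sketch below assumes access logs in the common "combined" format and matches bots by a user-agent substring. Both are assumptions: your log format may differ, and user agents can be spoofed, so production use would also verify the requesting IP.

```python
import re
from collections import Counter
from datetime import datetime, timezone

# Assumes the "combined" access log format; adapt the pattern to your server.
LOG_RE = re.compile(
    r'\S+ \S+ \S+ \[(?P<ts>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def bot_hits_per_day(lines, bot_token="Googlebot"):
    """Count hits per (UTC day, status code) for user agents containing bot_token."""
    counts = Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m or bot_token not in m["ua"]:
            continue
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        day = ts.astimezone(timezone.utc).date().isoformat()
        counts[(day, m["status"])] += 1
    return counts
```

Tracking the ratio of 200s to 3xx, 4xx, and 5xx per day gives you a trend line to compare against when crawl behavior shifts.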

Entity And Keyword Systems

You need a shared vocabulary for topics, entities, and intents, or your SEO tooling fights itself. When “CRM pricing” and “cost of Salesforce” live in different worlds, your briefs, links, and reporting drift.

Clustering Beyond Keywords

Keywords lie. SERPs and embeddings don’t.

Build clusters with two signals:

- Embeddings for semantic proximity

- SERP overlap for intent alignment

Then add hard constraints so clusters stay usable:

- Intent: informational vs commercial vs navigational

- Entity: product, person, place, feature

- Page type: hub, category, comparison, tutorial

Without constraints, you get one giant “marketing” blob that can’t ship.
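Here is a minimal sketch of the SERP-overlap half of that recipe, with intent as a hard constraint. It skips the embedding signal entirely, because that requires a model. The 0.4 threshold, the function names, and the input shapes are assumptions for illustration.

```python
from itertools import combinations


def serp_overlap(a, b):
    """Jaccard overlap between two sets of ranking URLs."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_queries(serps, intents, threshold=0.4):
    """Group queries whose SERPs overlap, never merging across intent labels.

    serps: query -> list of ranking URLs; intents: query -> intent label.
    """
    parent = {q: q for q in serps}

    def find(q):
        # Union-find lookup with path halving.
        while parent[q] != q:
            parent[q] = parent[parent[q]]
            q = parent[q]
        return q

    for q1, q2 in combinations(serps, 2):
        if intents[q1] != intents[q2]:
            continue  # hard constraint: different intent, different cluster
        if serp_overlap(serps[q1], serps[q2]) >= threshold:
            parent[find(q1)] = find(q2)

    clusters = {}
    for q in serps:
        clusters.setdefault(find(q), []).append(q)
    return sorted(sorted(members) for members in clusters.values())
```

Because merges only ever happen between queries with the same intent label, every resulting cluster carries exactly one intent, which keeps it mappable to a single page type.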

Competitor Gap Graphs

Keyword gaps are flat. Graphs show structure and leverage.

- Map entities to competitor pages

- Group pages by template type

- Score coverage by intent depth

- Flag missing hub-to-leaf paths

- Spot “template wins” over copy wins

If they win by templates, your best writer can’t fix it alone.

Content Brief Automation

Brief automation saves time, but it can also produce “average SERP soup.” You want patterns, not clones.

Generate briefs from repeatable SERP signals:

- Common headings and section order

- Entities repeatedly co-mentioned

- Format expectations like “pricing table”

- Evidence types like “benchmarks” or “case study”

Add guardrails that force differentiation:

- One unique angle statement, in one sentence

- One proprietary example, screenshot, or dataset

- One “what others omit” section

Automation should set a floor, not a ceiling.

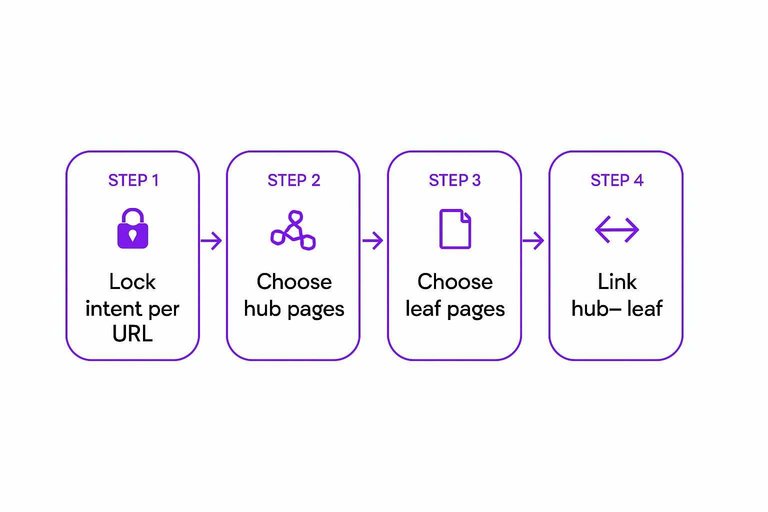

Internal Link Targets

Pick targets with data, not vibes.

- Define one intent per URL, then lock it.

- Choose hub pages by cluster centrality and conversions.

- Choose leaf pages by GSC impressions and low rank.

- Link hub→leaf for coverage, leaf→hub for consolidation.

- Recheck cannibalization after two crawl cycles.

Your internal links should behave like routing, not decoration.

Technical Audit Orchestration

Periodic audits miss the moment your site changes. You want a system that keeps crawling, rendering, and monitoring in sync, like a “CI pipeline for SEO.”

Run crawls on a schedule, render key templates after deploys, and watch production for drift. Then route every anomaly into a repeatable fix path, and use a shared technical SEO reference to align teams on what “good” looks like.

Canonicalization Graphs

Canonicals behave like a network, not a checklist. Graphing them shows where authority leaks, especially after migrations or parameter changes like “?ref=partner.”

Model each URL as a node and each relationship as an edge:

- Edge types: canonical-to, redirect-to, hreflang-to, parameter-variant-of

- Path checks: loops, chains, dead-ends, multi-canonicals

- Node states: indexable, blocked, non-200, noindex, soft-404

- Risk patterns: split canonicals, orphan canonicals, cross-language mismaps

If you see loops or splits, you are not “optimizing.” You are losing votes.
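The loop and chain checks above can be sketched by walking canonical-to edges. This example models only that one edge type, as a plain dictionary from URL to declared canonical. The function names and the input shape are assumptions; a fuller version would add redirect and hreflang edges.

```python
def resolve_canonical(url, canonical_of):
    """Follow canonical-to edges from url and classify the path."""
    path = [url]
    current = url
    # A URL that is missing from the map or points at itself ends the walk.
    while canonical_of.get(current, current) != current:
        current = canonical_of[current]
        if current in path:
            return {"target": None, "hops": len(path), "status": "loop"}
        path.append(current)
    hops = len(path) - 1
    status = "chain" if hops > 1 else "ok"
    return {"target": current, "hops": hops, "status": status}


def audit_canonicals(canonical_of):
    """Return every URL whose canonical path is a loop or a multi-hop chain."""
    report = {}
    for url in canonical_of:
        result = resolve_canonical(url, canonical_of)
        if result["status"] != "ok":
            report[url] = result["status"]
    return report
```

For example, with `/a` pointing to `/b` and `/b` pointing to `/c`, the audit flags `/a` as a chain, which is the kind of path you would flatten so `/a` declares `/c` directly.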

Indexation Debug Playbooks

Indexation issues get solved faster when you triage by pipeline stage. Start with “discovered vs crawled vs indexed,” then attach the right fix class.

- Check discovered-but-not-crawled, then audit internal links and crawl budget drains.

- Check crawled-but-not-indexed, then test quality, duplication, and canonical targets.

- Check indexed-but-wrong-URL, then inspect redirects, canonicals, and parameter handling.

- Check indexed-but-broken-snippet, then validate rendering, structured data, and titles.

- Map each finding to a fix bucket: robots, quality, duplication, or rendering.

Your playbook is your SLA. Without it, every indexation bug becomes a debate.

Performance As SEO Signal

Core Web Vitals are a production signal, not a one-off report. Tie them to template commits so you can say, “LCP regressed after the new hero module.”

Separate lab from field and quantify your risk window:

- Lab: catch regressions in Lighthouse after deploys

- Field: confirm impact in CrUX or RUM by template segment

- Deltas: isolate JS, images, fonts, and third-party tags

- Window: track when rankings shift after sustained field degradation

Treat performance like uptime. Rankings often follow the trend, not the incident.

Change Detection Monitoring

Most technical SEO failures are silent config changes. Monitoring catches the “someone merged a robots tweak” moment before traffic drops.

- Alert on title rewrites across key templates

- Alert on robots.txt or meta robots changes

- Alert on internal link count drops by section

- Alert on structured data loss by type

- Alert on status-code spikes by pattern

When alerts are reliable, you stop auditing and start operating.
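Most of these alerts reduce to diffing two snapshots of the same URLs. A minimal sketch, assuming each snapshot is a dictionary from URL to a few watched fields; the field names and the `diff_snapshots` helper are hypothetical.

```python
# Hypothetical fields captured per URL on each crawl.
WATCHED_FIELDS = ("title", "meta_robots", "canonical", "status")


def diff_snapshots(before, after, fields=WATCHED_FIELDS):
    """List field-level changes between two crawl snapshots."""
    alerts = []
    for url in sorted(before.keys() & after.keys()):
        for field in fields:
            old, new = before[url].get(field), after[url].get(field)
            if old != new:
                alerts.append({"url": url, "field": field, "old": old, "new": new})
    # URLs that vanished from the crawl are often the loudest signal.
    for url in sorted(before.keys() - after.keys()):
        alerts.append({"url": url, "field": "presence", "old": "present", "new": "missing"})
    return alerts
```

Grouping the resulting alerts by template or URL pattern before notifying anyone helps keep one bad deploy from turning into hundreds of messages.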

Backlog Prioritization Engine

Score SEO work like product work. You want fewer debates and more shipped wins, like choosing “fix canonicals” over “write 20 blogs.”

Score each item with impact, confidence, effort, and dependencies. Then let your tools auto-fill the inputs.

| Scoring input | What it measures | Typical tool inputs | Output you store |
|---|---|---|---|
| Impact | Traffic or revenue upside | GSC pages, GA4 value | Impact score 1–10 |
| Confidence | Likelihood you’re right | SERP tests, log files | Confidence 1–10 |
| Effort | Time and risk to ship | Jira history, dev sizing | Effort 1–10 |
| Dependencies | What blocks delivery | crawl graph, sitemaps | Blockers + edges |
| Priority | Best next work | ICE + dependency DAG | Ranked backlog |

When the dependency graph lights up, unblock first. That’s how “quick wins” stop being random.
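One way to combine the scores with the dependency graph is to always pick the highest-scoring item among those that are currently unblocked. The sketch below uses `impact × confidence ÷ effort` as the score, which is one common reading of ICE when effort replaces ease, not the only one. The item shape and the `blocked_by` field are assumptions.

```python
from graphlib import TopologicalSorter  # Python 3.9+


def score(item):
    """Higher impact and confidence raise priority; higher effort lowers it."""
    return item["impact"] * item["confidence"] / item["effort"]


def rank_backlog(items):
    """Order items by score while never scheduling an item before its blockers.

    items: id -> {"impact", "confidence", "effort", optional "blocked_by": [ids]}
    Every id listed in blocked_by is assumed to exist in items.
    """
    sorter = TopologicalSorter({k: v.get("blocked_by", []) for k, v in items.items()})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    ready = list(sorter.get_ready())
    order = []
    while ready:
        ready.sort(key=lambda k: score(items[k]), reverse=True)
        best = ready.pop(0)
        order.append(best)
        sorter.done(best)
        ready.extend(sorter.get_ready())  # items unblocked by finishing `best`
    return order
```

A low-scoring item can still surface early if it is the only thing blocking a high-scoring one, which is the behavior you want from "unblock first."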

Workflow Automation Patterns

You don’t need more insights. You need a pipeline that turns insights into shipped changes, safely.

Automation in SEO works when APIs, queues, and QA gates act like guardrails, not bureaucracy.

API-First Pipelines

Build your pipeline so every data pull is repeatable, incremental, and safe to rerun.

- Extract GSC, crawl, rank, and log data via APIs on a schedule.

- Transform into stable keys like URL, template, and query clusters.

- Load incrementally using cursor dates and partitioned tables.

- Run idempotent jobs so replays never double-count or overwrite incorrectly.

- Publish “ready” datasets to dashboards and ticket queues.

When reruns are boring, your shipping cadence gets aggressive.
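Idempotent loading often comes down to replacing a whole partition inside one transaction. Here is a minimal sketch using SQLite from the standard library. The `gsc_daily` table, its columns, and the function names are hypothetical, and a real warehouse would use its own partition-overwrite mechanism.

```python
import sqlite3


def init(conn):
    conn.execute(
        """CREATE TABLE IF NOT EXISTS gsc_daily (
               day TEXT, url_key TEXT, query TEXT,
               clicks INTEGER, impressions INTEGER,
               PRIMARY KEY (day, url_key, query))"""
    )


def load_partition(conn, day, rows):
    """Replace one day's partition atomically so reruns never double-count."""
    with conn:  # commits on success, rolls back on any exception
        conn.execute("DELETE FROM gsc_daily WHERE day = ?", (day,))
        conn.executemany(
            "INSERT INTO gsc_daily VALUES (?, ?, ?, ?, ?)",
            [(day, r["url_key"], r["query"], r["clicks"], r["impressions"]) for r in rows],
        )


def total_clicks(conn):
    return conn.execute("SELECT COALESCE(SUM(clicks), 0) FROM gsc_daily").fetchone()[0]
```

Running `load_partition` twice for the same day leaves the totals unchanged, so a failed job can simply be retried.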

QA Gates

Add checks before deploy so you catch regressions while they’re still cheap.

- Diff titles and meta for unexpected overwrites

- Validate canonicals and hreflang against URL rules

- Lint schema types and required properties

- Verify internal links and orphan thresholds

- Enforce CWV budgets per template

Fail the build, not the quarter.
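A budget gate is a comparison plus an exit code. In the sketch below, the 2,500 ms LCP and 0.1 CLS limits match Google's published "good" thresholds, while the template names, the measurement shape, and the `failed_budgets` helper are assumptions for illustration.

```python
# Budgets per template; the template names are hypothetical.
BUDGETS = {
    "product": {"lcp_ms": 2500, "cls": 0.1},
    "article": {"lcp_ms": 2500, "cls": 0.1},
}


def failed_budgets(measurements, budgets=BUDGETS):
    """Return (template, metric, value, limit) for every exceeded or missing metric."""
    failures = []
    for template, metrics in measurements.items():
        for metric, limit in budgets.get(template, {}).items():
            value = metrics.get(metric)
            # A missing measurement fails the gate rather than passing silently.
            if value is None or value > limit:
                failures.append((template, metric, value, limit))
    return failures
```

In a CI job you would call this after the lab run and exit non-zero when the list is not empty, for example with `sys.exit(1 if failed_budgets(results) else 0)`.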

Experiment Framework

Treat SEO changes like product changes, because traffic has noise and timing lies.

Use template-based A/B tests when you can randomize at page creation. Use cohort tests when you can’t, then add holdouts.

Control for seasonality with synthetic controls, like “similar pages that didn’t change.”

If you can’t name your control, you’re measuring vibes.
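One simple way to make the control explicit is a difference-in-differences comparison. This is a much cruder stand-in for a full synthetic control, and it assumes the test and control groups would have trended alike without the change. The function name and inputs are illustrative.

```python
def diff_in_diff(test_before, test_after, control_before, control_after):
    """Relative lift of the test group beyond what the control group saw.

    All inputs are totals (for example, clicks) over equal-length windows.
    """
    test_change = test_after / test_before - 1
    control_change = control_after / control_before - 1
    return test_change - control_change


# Example: test pages grew 20%, unchanged similar pages grew 5%.
lift = diff_in_diff(1000, 1200, 1000, 1050)
print(f"Estimated lift beyond seasonality: {lift:.1%}")
```

If the control group moved as much as the test group, the estimated lift is near zero, which usually means seasonality or a SERP-wide shift explains the change.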

Follow Google’s guidance to minimize A/B testing impact in Google Search.

Governance And Access

Automation without governance creates “shadow SEO,” where changes happen outside your visibility.

Lock permissions to roles, log every change, and keep one source of truth for rules. Tool sprawl is fine until two tools rewrite the same canonical.

Your best defense is an audit trail you can query.

Reporting That Drives Action

Your tools will disagree. Your job is to make that disagreement useful.

A good report doesn’t “average the numbers.” It states what each system measures, then tells you what to do next.

Metric Reconciliation

Rank trackers, GSC, and analytics answer different questions, so treat them like witnesses, not judges. When you normalize the context, most “conflicts” turn into signal, like a tracker showing #3 while GSC shows low impressions.

Reconcile with a simple mapping:

- Rank tracker: position in a fixed geo/device SERP sample

- GSC: impressions and average position across real searches

- Analytics: sessions and revenue after the click

- Normalize: same country, device, time window, brand vs non-brand

- Classify queries: head, long-tail, local pack, SERP-feature heavy

Once the definitions line up, the remaining gap is your uncertainty budget, not an argument.

Anomaly Triage

When traffic drops, you need the shortest path to confirmatory evidence. Start with the layer that can fully explain the magnitude, then work down.

- Check demand: compare GSC impressions and Trends for top query groups.

- Check SERP shift: review winners/losers and feature changes for those queries.

- Check indexation: validate key URLs in GSC and sample with site: queries.

- Check crawl and rendering: inspect logs, crawl stats, and blocked resources.

- Check measurement: confirm analytics tagging, consent, and channel mapping changes.

You’re hunting for one “yes” that closes the case, not five “maybes.”

Opportunity Surfacing

Reports should surface actions that scale, not just insights. Look for repeatable patterns, like one template suddenly outperforming its peers.

- Flag “near-miss” queries ranking 4–15 with high impressions.

- Find pages gaining CTR after title or snippet changes.

- Cluster winners by template, intent, and SERP feature mix.

- Identify internal-link targets with high relevance and low link equity.

- Queue refresh candidates with decaying clicks and stable impressions.

If an opportunity can’t become a template, it’s probably a one-off distraction.
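Two of these filters are simple enough to sketch directly. The position range of 4 to 15 comes from the list above. The impression floor, the 20% click drop, the 10% impression tolerance, and all field names are assumptions you would tune to your own data.

```python
def near_miss(rows, min_impressions=500, lo=4, hi=15):
    """Queries ranking between lo and hi with enough impressions to matter."""
    hits = [
        r for r in rows
        if lo <= r["position"] <= hi and r["impressions"] >= min_impressions
    ]
    return sorted(hits, key=lambda r: r["impressions"], reverse=True)


def refresh_candidates(pages, click_drop=0.2, impression_tolerance=0.1):
    """Pages whose clicks decayed while impressions stayed roughly stable."""
    urls = []
    for p in pages:
        if not p["clicks_prev"] or not p["impressions_prev"]:
            continue  # no baseline to compare against
        click_change = p["clicks_now"] / p["clicks_prev"] - 1
        impression_change = p["impressions_now"] / p["impressions_prev"] - 1
        if click_change <= -click_drop and abs(impression_change) <= impression_tolerance:
            urls.append(p["url"])
    return urls
```

Feeding both outputs into the same ticket queue as your technical findings keeps content opportunities competing on the same scoring model.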

Narratives For Stakeholders

Stakeholders don’t need your crawl graph. They need risk, upside, and timing, like “we’re likely to recover 60–80% in 3–6 weeks.”

Use a narrative frame:

- Leading indicators: index coverage, impressions, top-page crawl rate

- Lagging indicators: sessions, conversions, revenue

- Confidence: best/base/worst case ranges, with assumptions stated

- Timeline: what changes now, what moves next, what proves it worked

When you quantify uncertainty, you earn permission to act before the quarter is gone.

Common Failure Modes

One bad integration can poison every report, then your automations “fix” the wrong thing. Use this table to spot stack-level pitfalls before they ship bad decisions.

| Failure mode | What you see | Root cause | Hardening move |
|---|---|---|---|
| Tag mismatch | Traffic drops “overnight” | UTM, channel mapping drift | Lock naming, validate weekly |
| Sampling creep | KPIs wobble by day | API limits, sampled exports | Use unsampled, widen windows |
| Entity duplication | Two URLs rank separately | Canonicals, hreflang conflicts | Enforce canonical rules |
| Automation overreach | Titles rewritten, CTR falls | Rules ignore intent, SERP | Guardrails, human approvals |
| Join-key break | Dashboards show zeros | IDs changed, schema update | Contract tests, alerts |

Treat every connector like production code, not a “quick plug-in,” and your stack stays trustworthy.

Build a Toolchain That Ships Wins

- Map every tool to the Sensing → Decision → Execution model, then define the “integration contracts” (owners, schemas, refresh cadence, and success metrics).

- Standardize your truth sources (GSC, analytics, crawls/rendering, logs) and reconcile metrics so the same issue doesn’t produce four conflicting stories.

- Convert insights into systems: entity/keyword clustering, technical playbooks, and a prioritization table that turns impact × confidence ÷ effort into an ordered backlog.

- Automate delivery with API-first pipelines, QA gates, and change detection—then report anomalies and opportunities as decisions to make, not charts to admire.

Frequently Asked Questions

- Do I need an enterprise SEO platform, or can I build an SEO stack from smaller tools?

- Most teams can get 80–90% of the value with a modular stack (crawler + rank tracking + log analysis + analytics + automation) before paying for an enterprise suite. Enterprise platforms usually make sense once you need unified permissions, multi-site governance, and workflow at scale across dozens of stakeholders.

- How do I measure whether my SEO tools are actually improving performance (not just producing reports)?

- Track leading indicators (crawl health, index coverage, internal link distribution, content updates shipped) alongside lagging outcomes (non-brand organic clicks, conversions, revenue) in GA4 + Search Console. If shipping velocity and technical/content KPIs don’t move within 4–8 weeks, your tool stack is generating insight without execution.

- How long does it take to integrate SEO tools into a working system?

- A practical first version usually takes 2–4 weeks (data connections, dashboards, basic automation), and a reliable production-grade system takes 6–12 weeks with QA, alerting, and documentation. The fastest path is starting with one high-impact workflow (e.g., crawl → fixes → verification) before expanding.

- Can I use Google Search Console and GA4 instead of paid SEO tools?

- You can cover fundamentals with Search Console + GA4 + a crawler like Screaming Frog, but you’ll often miss competitive insights, large-scale rank tracking, and robust backlink/keyword datasets. Paid tools become worthwhile when you need faster discovery, broader coverage, and repeatable monitoring across many pages and queries.

- What’s the best way to choose SEO tools without getting locked into a vendor ecosystem?

- Prioritize tools with APIs, exportable raw data, and stable identifiers (URL, canonical, entity/topic IDs) so you can swap components without breaking workflows. Avoid stacks that only work inside one UI or hide sampling/modeling details that you can’t audit.

Unify Your SEO Toolchain

Even with the right SEO tools, progress stalls when research, prioritization, writing, and publishing aren’t connected in one repeatable workflow.

Skribra turns insights into daily, SEO-optimized articles with WordPress publishing, workflow automation, and built-in backlink exchange—start with the 3-Day Free Trial.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
