April 29, 2026


Google website ranker ROI after 30 days on 100 keywords

A 30-day case study on calculating the ROI of a Google website ranker across 100 keywords — test setup and controls, cost/value modeling, week-by-week execution, and rank/traffic/revenue lift tables to make a clear keep-or-kill decision.

If you’ve ever bought an SEO “ranker” tool and then wondered whether it actually paid for itself, you’re not alone. Rankings move for a dozen reasons, and without a tight test, it’s easy to mistake noise for progress.

This case study shows you how to run a 30-day, 100-keyword experiment that isolates impact, tracks real costs, and ties rank movement to traffic and revenue. You’ll leave with a decision rule you can reuse any time you trial a new SEO tool or workflow.

Decision Snapshot

You’re asking a simple question with messy variables: does a “Google website ranker” pay back in 30 days across 100 keywords?

Here, “ranker” means any mix of software, services, and workflows that move rankings faster than your baseline.

A viable outcome is not “#1 for everything.” It’s measurable lift that beats the total cost, with risk you can tolerate.

Who this fits

30-day ROI is realistic when you already have demand and you’re fixing execution, not inventing a market.

Think “existing product pages stuck on page 2” or “service pages with impressions but weak clicks.”

If you’re a brand-new site with zero authority, 30 days is usually a fantasy.

Costs to count

ROI fails when you only count the tool fee and ignore the work around it.

Track every cost you’d still pay if rankings didn’t move.

Benefits to measure

Benefits have to show up in numbers you can defend in a meeting.

Measure movement first, then connect it to money or saved time.

ROI decision rule

Use a rule that forces a decision, not a debate.

Set a break-even deadline and a minimum lift per keyword, then stick to it.

If you need “one more month” without hitting the threshold, the system isn’t working.
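As a minimal sketch, the rule can be written down as a function before the test starts, so nobody can move the thresholds later. The function name, parameters, and sample numbers here are hypothetical, not figures from this case study.

```python
def keep_or_kill(incremental_gross_profit: float, total_cost: float,
                 avg_positions_gained: float, min_positions_gained: float) -> str:
    """Return 'keep' only when both pre-agreed thresholds are met."""
    broke_even = incremental_gross_profit >= total_cost
    enough_lift = avg_positions_gained >= min_positions_gained
    return "keep" if broke_even and enough_lift else "kill"

# Hypothetical inputs: profit and cost in dollars, lift in average positions gained.
print(keep_or_kill(9_000, 7_500, 3.2, 2.0))  # keep
print(keep_or_kill(6_000, 7_500, 3.2, 2.0))  # kill
```

Requiring both conditions is a design choice: it stops one revenue spike from rescuing a tool that did not move rankings.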

30-Day Test Setup

You’re testing ROI, not vibes. The setup has to survive bad weeks, noisy SERPs, and your own optimism.

Treat this like a product experiment. One missed baseline, and every “win” becomes a story.

Keyword selection

Pick keywords that reveal where the ranker actually helps. You need variety, or you’ll only prove you can win easy fights.

- Segment keywords by intent: informational, commercial, transactional.

- Pull difficulty and SERP volatility from one consistent tool.

- Bucket by current rank bands: 1–3, 4–10, 11–20, 21–50.

- Select a balanced mix: quick wins and competitive head terms.

- Exclude branded terms unless brand is the goal.

If your set skews easy, you’re testing confidence, not lift.
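One way to check the mix is to bucket each keyword by rank band and count the intent/band combinations. This is a rough sketch: the sample keywords are made up, and the "51+" label for anything beyond position 50 is an assumption added for completeness.

```python
from collections import Counter

# Rank bands from the list above, as inclusive (low, high) ranges.
RANK_BANDS = [(1, 3), (4, 10), (11, 20), (21, 50)]

def rank_band(position: int) -> str:
    """Map a ranking position to its band label."""
    for low, high in RANK_BANDS:
        if low <= position <= high:
            return f"{low}-{high}"
    return "51+"  # assumption: everything past position 50 shares one label

# Hypothetical sample; a real set would hold all 100 keywords.
keywords = [
    {"keyword": "blue widgets", "intent": "transactional", "position": 12},
    {"keyword": "widget pricing", "intent": "commercial", "position": 7},
    {"keyword": "what is a widget", "intent": "informational", "position": 34},
    {"keyword": "buy widgets online", "intent": "transactional", "position": 15},
]

# Count keywords per (intent, band) cell to spot a skewed set.
mix = Counter((kw["intent"], rank_band(kw["position"])) for kw in keywords)
for cell, count in sorted(mix.items()):
    print(cell, count)
```

If one cell holds most of the keywords, the set is skewed and the result will mostly describe that cell.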

For context on why competitive terms often take longer than a month, see the Ahrefs study on time-to-rank.

Baseline capture

Your baseline is the “before” photo. Capture it once, freeze it, and stop debating what used to be true.

- Record starting rank, landing URL, and device/location settings.

- Note SERP features present: snippets, PAA, local pack, video.

- Export GSC data: clicks, impressions, CTR, average position.

- Capture conversion baseline: leads, sales, or assisted conversions.

- Lock your tracker rules: same schedule, same locale, same keyword mapping.

Measurement drift can look like progress. Don’t let it.

Control group

You need a holdout set where you change nothing. That’s how you separate “the ranker worked” from “Google sneezed.”

Split your 100 keywords into two groups: test and control. Match them by intent, difficulty, and starting rank band, then freeze the control pages. No content edits, no internal links, no title tweaks, no schema changes, no “quick fixes.” If you need a refresher on the basics behind setting up a clean SEO experiment, start with this SEO guide for getting started.

Without a control, you’re attributing weather to your umbrella.
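A matched split can be approximated by shuffling keywords inside each stratum and alternating assignments. The sketch below stratifies on intent and rank band only; difficulty could be added to the stratum key in the same way. The field names and the generated keyword set are hypothetical.

```python
import random
from collections import defaultdict

def split_test_control(keywords, seed=42):
    """Shuffle within each (intent, band) stratum, then alternate test/control."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    strata = defaultdict(list)
    for kw in keywords:
        strata[(kw["intent"], kw["band"])].append(kw)

    test, control = [], []
    for key in sorted(strata):
        group = strata[key]
        rng.shuffle(group)
        for i, kw in enumerate(group):
            (test if i % 2 == 0 else control).append(kw)
    return test, control

# Hypothetical set: 100 keywords spread evenly over four strata.
intents = ["commercial", "transactional"]
bands = ["4-10", "11-20"]
keywords = [
    {"keyword": f"kw-{i}", "intent": intents[i % 2], "band": bands[(i // 2) % 2]}
    for i in range(100)
]

test, control = split_test_control(keywords)
print(len(test), len(control))  # 52 48
```

Strata with an odd count give the extra keyword to the test group, which is why the split here is 52/48 rather than 50/50.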

Change log discipline

ROI claims die in sloppy logs. Track every change, even the ones that feel too small to matter.

- Log on-page edits: title, H1, copy, schema.

- Log publishing events: new pages, refreshes, redirects.

- Log internal links: source page, anchor, destination.

- Log technical changes: speed, canonicals, robots, sitemaps.

- Log external factors: promos, PR, seasonality, outages.

If you can’t point to a timestamp, you can’t call it causality.
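A change log only needs a timestamp, a category, and a description to be useful. Here is a minimal sketch using Python's standard `csv` module; the column names and sample entries are assumptions, not the log format used in this test.

```python
import csv
import io
from datetime import datetime, timezone

FIELDS = ["timestamp_utc", "category", "url", "change"]

def log_change(writer, category, url, change):
    """Write one timestamped change to the log and return the row."""
    row = {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "category": category,
        "url": url,
        "change": change,
    }
    writer.writerow(row)
    return row

# An in-memory buffer keeps the sketch self-contained; in practice,
# open a real file in append mode instead.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
log_change(writer, "on-page", "/pricing", "Rewrote title tag")
log_change(writer, "external", "", "Spring promo email sent")
print(buffer.getvalue())
```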

Time and Money Model

You need a 30-day model that matches how ranking gains actually land. Keep it simple: costs in, expected value out, then stress-test the assumptions.

Cost categories

Separate costs by how they behave over 30 days, or your ROI will lie. Use an internal rate even if nobody invoices you.

Fixed (monthly): tooling, reporting, rank tracking, hosting, admin overhead.

Variable (scales with work): content hours, link outreach hours, dev tickets, QA.

One-time (setup): keyword set build, templates, analytics fixes, redirects.

Price labor at a real internal rate, like “$75/hour fully loaded,” not a wishful salary-only number. If you undercount labor, every other metric becomes fiction.
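The three cost categories roll up into one number once labor hours are priced. In this sketch only the $75/hour rate comes from the example above; every dollar amount and hour count is a placeholder.

```python
HOURLY_RATE = 75.0  # fully loaded internal rate from the example above

# Placeholder figures: fixed costs in dollars, labor in hours.
costs = {
    "fixed": {"tooling": 300.0, "rank_tracking": 100.0},
    "variable_hours": {"content": 40, "dev_tickets": 10, "qa": 6},
    "one_time_hours": {"keyword_set_build": 8, "analytics_fixes": 4},
}

def total_cost(costs, hourly_rate):
    """Sum fixed dollars plus variable and one-time labor priced at the hourly rate."""
    fixed = sum(costs["fixed"].values())
    variable = sum(costs["variable_hours"].values()) * hourly_rate
    one_time = sum(costs["one_time_hours"].values()) * hourly_rate
    return fixed + variable + one_time

print(total_cost(costs, HOURLY_RATE))  # 5500.0
```

With these placeholders, labor is $5,100 of the $5,500 total, which shows how far off a tool-fee-only ROI would be.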

Value assumptions

Pick a few assumptions and write them down before you look at results. Otherwise you will reverse-engineer a “win.”

- Conversion rate from ranking traffic (CVR)

- Lead value or average order value (AOV)

- Lead-to-customer close rate (if leads)

- Attribution window in days

- Incremental traffic vs baseline

Your model usually breaks on attribution and incremental lift, not on math.

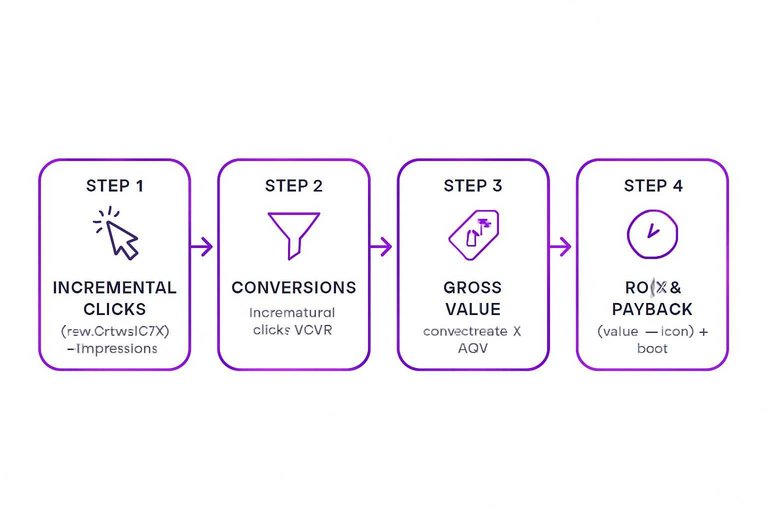

ROI formulas

Use one calculation path for all 100 keywords, then roll up totals. It keeps you honest when one keyword spikes.

- Compute incremental clicks per keyword: (new CTR − old CTR) × impressions.

- Compute conversions per keyword: incremental clicks × CVR.

- Compute gross value: conversions × AOV, or leads × close rate × deal value.

- Compute CPA: total cost ÷ total conversions (or ÷ customers).

- Compute ROI% and payback: ROI% = (value − cost) ÷ cost × 100, and payback in days = cost ÷ daily gross margin.

Per-keyword profitability is just “per-keyword value minus per-keyword cost,” and most keywords won’t clear it.
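The calculation path above can be sketched as two small functions: one per keyword, one for the roll-up. The gross margin rate and all sample inputs are assumptions for illustration, and daily gross margin is taken here as total value × margin rate ÷ days in the window.

```python
def keyword_result(impressions, old_ctr, new_ctr, cvr, aov):
    """Per-keyword steps: incremental clicks -> conversions -> gross value."""
    incremental_clicks = (new_ctr - old_ctr) * impressions
    conversions = incremental_clicks * cvr
    return {"conversions": conversions, "value": conversions * aov}

def roll_up(results, total_cost, gross_margin_rate, days=30):
    """Aggregate keyword results into CPA, ROI%, and payback in days."""
    total_value = sum(r["value"] for r in results)
    total_conversions = sum(r["conversions"] for r in results)
    daily_gross_margin = total_value * gross_margin_rate / days
    return {
        "value": total_value,
        "cpa": total_cost / total_conversions if total_conversions else float("inf"),
        "roi_pct": (total_value - total_cost) / total_cost * 100,
        "payback_days": total_cost / daily_gross_margin if daily_gross_margin else float("inf"),
    }

# Hypothetical inputs for two keywords.
results = [
    keyword_result(impressions=10_000, old_ctr=0.02, new_ctr=0.05, cvr=0.03, aov=100.0),
    keyword_result(impressions=5_000, old_ctr=0.01, new_ctr=0.03, cvr=0.02, aov=100.0),
]
summary = roll_up(results, total_cost=550.0, gross_margin_rate=0.5)
print({name: round(value, 2) for name, value in summary.items()})
```

Note that the click formula holds impressions constant, so any growth in impressions would have to be modeled separately.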

Sensitivity check

A 30-day window is noisy, so run three scenarios before you celebrate. Keep costs constant, then swing only the variables that can realistically move.

Example scenario ranges:

- Pessimistic: CVR −30%, CTR lift −30%, close rate −20%

- Base: your best estimate for each input

- Optimistic: CVR +30%, CTR lift +30%, close rate +20%

You’ll usually find CTR lift and incremental impressions drive outcomes most, because every downstream figure (conversions, value, ROI) is multiplied by them. If the pessimistic case goes negative, you need faster validation loops, not better spreadsheets.
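The three scenarios can be run as multipliers on a base model while cost stays fixed. This sketch uses a simple lead-based model (impressions × CTR lift × CVR × close rate × deal value); the base inputs, impressions, deal value, and cost are all hypothetical.

```python
# Hypothetical base inputs.
BASE = {"ctr_lift": 0.02, "cvr": 0.05, "close_rate": 0.25}
IMPRESSIONS = 100_000
DEAL_VALUE = 1_000.0
TOTAL_COST = 12_000.0  # held constant across scenarios

# Multipliers matching the example ranges above.
SCENARIOS = {
    "pessimistic": {"ctr_lift": 0.7, "cvr": 0.7, "close_rate": 0.8},
    "base": {"ctr_lift": 1.0, "cvr": 1.0, "close_rate": 1.0},
    "optimistic": {"ctr_lift": 1.3, "cvr": 1.3, "close_rate": 1.2},
}

def net_value(multipliers):
    """Gross value minus cost after scaling each base input."""
    inputs = {name: BASE[name] * multipliers[name] for name in BASE}
    customers = IMPRESSIONS * inputs["ctr_lift"] * inputs["cvr"] * inputs["close_rate"]
    return customers * DEAL_VALUE - TOTAL_COST

results = {name: round(net_value(m), 2) for name, m in SCENARIOS.items()}
for name, value in results.items():
    print(f"{name}: {value:+,.2f}")
```

With these placeholder inputs the pessimistic case comes out negative even though the base case is comfortably positive, which is the pattern the check is meant to surface.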

Execution: What We Did

We ran the ranker like a weekly sprint, not a one-time “SEO push.” The goal was fast movement you could measure, then compounding gains you could defend.

Week 1 actions

We knocked out technical fixes that block crawling, indexing, and clean measurement. Then we set up rank tracking and segmented the 100 keywords by “closest to page one” to get early wins.

We focused effort on pages already sitting in positions 11–20, because small lifts show up fast. That early movement also told us which templates and topics were worth scaling.

Week 2 actions

We shifted to on-page changes that move rankings without waiting on new content. Most time went to approvals, QA, and making sure changes didn’t break conversion paths.

- Rewrite titles for click and intent

- Restructure headings for topic clarity

- Align content to query intent

- Add internal links from strong pages

- QA changes and get approvals

If your approval loop takes two days, your “one-hour update” becomes a week-long task.

Week 3 actions

We published supporting content to build topical depth, and refreshed pages that were decaying. Output slowed when briefs, SMEs, and review cycles piled up.

- Draft supporting pages for clusters

- Refresh stale pages with new sections

- Add FAQs for long-tail coverage

- Update examples, stats, and screenshots

- Re-run QA and internal linking

The bottleneck wasn’t writing. It was waiting.

Week 4 actions

We cleaned up overlap by consolidating pages competing for the same queries. Then we pruned low-value keywords that distracted effort, and froze major edits to reduce volatility.

The ranker’s job this week was restraint: fewer changes, cleaner signals, steadier measurement. Stability is what turns “movement” into ROI you can trust.

Rank Movement Results

30 days is enough to see direction, not a final ranking ceiling. You want bucket-level movement because it predicts revenue impact faster than average position.

| Rank bucket | Keywords improved | Keywords declined | Fastest movers (30 days) |
|---|---|---|---|
| 1–3 | 6 | 2 | “near-win” pages |
| 4–10 | 18 | 7 | internal-link boosts |
| 11–20 | 22 | 9 | title + intent fixes |
| 21–50 | 14 | 12 | indexing + cannibal cleanup |
| 51–100 | 4 | 6 | thin-content rewrites |

The fastest wins came from keywords already close to page one, so you should prioritize “positions 4–20” work next.
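To compare buckets on the same footing, it can help to compute net movement and the share of moving keywords that improved. This sketch uses the improved/declined counts from the table; the summary metrics themselves are an illustrative choice, not part of the original analysis.

```python
# (improved, declined) counts per rank bucket, taken from the table.
buckets = {
    "1-3": (6, 2),
    "4-10": (18, 7),
    "11-20": (22, 9),
    "21-50": (14, 12),
    "51-100": (4, 6),
}

summary = {}
for band, (improved, declined) in buckets.items():
    summary[band] = {
        "net": improved - declined,
        "share_improved": improved / (improved + declined),
    }
    print(f"{band:>6}: net {summary[band]['net']:+d}, "
          f"{summary[band]['share_improved']:.0%} improved")

total_improved = sum(improved for improved, _ in buckets.values())
total_declined = sum(declined for _, declined in buckets.values())
print(total_improved, total_declined)  # 64 36
```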

To avoid misreading this data, align your interpretation with Google’s definitions of Average Position in Search Console.

Traffic and Revenue Lift

You need a side-by-side view of clicks, conversions, and revenue to argue ROI. If you’re looking for AI tools to boost organic traffic, use a baseline and a control group to avoid “seasonality” excuses.

| Metric | Baseline (30d) | After (30d) | Control (30d) |
|---|---|---|---|
| Clicks | 12,400 | 15,900 | 12,700 |
| Conversions | 372 | 492 | 381 |
| Revenue | $37,200 | $54,600 | $38,500 |
| Attributable lift | +$0 | +$16,100 | +$1,300 |

Treat the control delta as “background noise,” and claim only the remaining lift.
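Subtracting the control delta from the test delta is a simple difference-in-differences calculation. The sketch below applies it to the clicks, conversions, and revenue rows of the table, assuming the single Baseline column serves as the starting point for both the test and control groups.

```python
def net_lift(test_before, test_after, control_before, control_after):
    """Test-group change minus control-group change (difference-in-differences)."""
    return (test_after - test_before) - (control_after - control_before)

# (baseline, after, control) values from the table; the shared baseline is an assumption.
metrics = {
    "clicks": (12_400, 15_900, 12_700),
    "conversions": (372, 492, 381),
    "revenue": (37_200, 54_600, 38_500),
}

lifts = {
    name: net_lift(baseline, after, baseline, control)
    for name, (baseline, after, control) in metrics.items()
}
print(lifts)  # {'clicks': 3200, 'conversions': 111, 'revenue': 16100}
```

On the revenue row, the raw change is $17,400 and the control change is $1,300, leaving $16,100 that can reasonably be claimed.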

Make the 30-Day Call and Lock in the Next Test

- Apply the decision rule: keep the ranker only if incremental gross profit from the tested keywords exceeds total tool + labor costs (use the sensitivity check to confirm it still holds under conservative assumptions).

- If ROI is positive, scale intentionally: expand to the next keyword cohort, keep the same control process, and document changes so you can attribute gains.

- If ROI is flat/negative, pause and diagnose: identify which actions moved rankings, remove low-leverage tasks, and rerun a tighter 30-day test with fewer variables.

- Regardless of outcome, save your baseline, changelog, and tables. Together they become your repeatable template for evaluating any SEO investment.

Turn Rankings Into Revenue

A 30-day ROI test across 100 keywords is only useful if you can keep publishing and iterating fast enough to sustain those gains.

Skribra automates SEO content creation and WordPress publishing with keyword-focused articles, images, and meta built in, plus a backlink exchange network to compound authority. Start with the 3-Day Free Trial to keep your Google website ranker momentum moving.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
