February 20, 2026

How an AI SEO agency runs keyword research

An explainer on how an AI SEO agency runs keyword research end to end—turn inputs into expanded seed sets, classify intent from SERPs, score difficulty vs. opportunity, cluster into topics, and convert priorities into page maps and an operational workflow.

If your keyword research ends with a giant spreadsheet, you’re not doing research—you’re just collecting words. The hard part is translating messy signals (site data, SERPs, competitors, and risk constraints) into decisions you can publish and measure.

This explainer shows how an AI SEO agency does it systematically: what inputs it starts with, how it expands and cleans seeds, how it labels intent using SERPs, and how it scores and clusters keywords into topics. You’ll also see how those outputs become a prioritization matrix, page mapping plan, and a repeatable workflow.

Research Inputs

Keyword research is only as good as the inputs you feed it. An AI SEO agency treats inputs like constraints, because constraints decide what keywords even count as “good.”

Business primitives

You start with business facts, because “traffic” is not a goal. A keyword is only valuable when it matches your offer and your economics, like “emergency plumber Austin” versus “how to fix a leaky faucet.”

Turn primitives into constraints:

- Offer → problems you solve, in buyer language

- ICP → roles, industries, deal size, pain severity

- Margins → max CAC, payback window, LTV tolerance

- Geography → countries, cities, service radius, languages

- Seasonality → peak months, budget cycles, weather spikes

The tighter these are, the fewer keywords you regret ranking for.

Site evidence

Your site already contains demand signals and hard limits. You mine first-party data to see what Google thinks you deserve.

- Search Console queries and pages

- Analytics landing pages and paths

- Server logs and crawl patterns

- Internal search terms and refinements

- Index coverage and exclusions

If coverage is weak, fix indexation before you expand targets.

SERP environment

Keywords live inside SERPs, not spreadsheets. You profile the current results to estimate effort, timeline, and the real intent behind the query.

Capture the SERP environment:

- True competitors, not just category peers

- SERP features stealing clicks, like maps or AI overviews

- Content formats winning, like tools or listicles

- Intent volatility across days and devices

If the SERP is unstable, your “perfect” keyword may be a moving target.

Risk guardrails

Guardrails prevent rankings that create legal risk or wasted work. You define what the model must avoid, before it suggests anything.

- Flag YMYL topics and require expert review.

- Block brand-unsafe themes and prohibited claims.

- Apply compliance rules, like HIPAA, FINRA, or local ads law.

- Prevent cannibalization with one intent per URL.

- Set “no-target” lists for low-value or high-support queries.

The fastest keyword win is the one you never chase.
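As a rough illustration, the guardrails above can be applied as a filter that runs before any keyword reaches scoring. This is a minimal sketch: the term lists, the `GuardrailResult` type, and the flag names are all hypothetical, and token matching is far cruder than a real compliance review.

```python
from dataclasses import dataclass, field

# Hypothetical term lists for illustration only; a real setup would load
# these from a reviewed policy file.
YMYL_TERMS = {"dosage", "diagnosis", "loan", "lawsuit"}
NO_TARGET_TERMS = {"free", "login", "refund"}


@dataclass
class GuardrailResult:
    keyword: str
    allowed: bool
    flags: list[str] = field(default_factory=list)


def apply_guardrails(keyword: str) -> GuardrailResult:
    """Check one keyword against the no-target list and the YMYL flag list."""
    tokens = set(keyword.lower().split())
    if tokens & NO_TARGET_TERMS:
        # No-target terms block the keyword outright.
        return GuardrailResult(keyword, allowed=False, flags=["no_target"])
    flags = []
    if tokens & YMYL_TERMS:
        # YMYL keywords stay in the pool but are routed to expert review.
        flags.append("ymyl_expert_review")
    return GuardrailResult(keyword, allowed=True, flags=flags)
```

Blocking and flagging are kept separate on purpose: a no-target hit ends the conversation, while a YMYL hit only adds a review step.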

Seed Expansion Engine

Agencies win keyword research by building a huge candidate set, then making it clean enough to score. You want models for breadth, but you need deterministic sources for reality checks.

Seed generation

You need strong seeds or the model wanders into trivia. Seeds anchor intent around what you sell and what your pages already cover.

- Extract product nouns and modifiers from your catalog and pricing pages.

- List top pain points from sales calls, tickets, and reviews.

- Translate jobs-to-be-done into verbs plus objects, like “track invoices”.

- Pull H1s, titles, and internal search queries from existing pages.

- Tag each seed with audience, stage, and primary intent.

Bad seeds create impressive lists that never convert.

Multi-source expansion

One source always lies to you in its own way. Mixing sources gives coverage, intent variety, and a built-in bias check.

- Ask the LLM for intent variations and modifiers

- Pull autocomplete and “people also ask”

- Collect related searches and SERP suggestions

- Import competitor ranking keywords by URL

- Map category taxonomy nodes to queries

If two or more sources agree, you probably found a real demand pocket.
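One way to make that agreement check concrete is to record which sources suggested each keyword and keep those with at least two. The sketch below assumes each source has already been exported as a plain list; the source names and sample keywords are made up.

```python
from collections import defaultdict


def merge_sources(sources: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each keyword (lowercased, trimmed) to the sources that suggested it."""
    seen: dict[str, set[str]] = defaultdict(set)
    for source_name, keywords in sources.items():
        for kw in keywords:
            seen[kw.strip().lower()].add(source_name)
    return dict(seen)


def corroborated(seen: dict[str, set[str]], min_sources: int = 2) -> list[str]:
    """Return keywords suggested by at least `min_sources` different sources."""
    return sorted(kw for kw, srcs in seen.items() if len(srcs) >= min_sources)


# Hypothetical sample data.
sources = {
    "llm": ["invoice tracking software", "track invoices online"],
    "autocomplete": ["track invoices online", "invoice tracker free"],
    "competitor": ["Invoice Tracking Software", "invoice templates"],
}
seen = merge_sources(sources)
print(corroborated(seen))
# ['invoice tracking software', 'track invoices online']
```

Single-source keywords are not discarded here. They simply carry less evidence into scoring.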

Normalization layer

Raw lists are noisy, and noise breaks scoring. You normalize so “best ai crm”, “AI CRMs”, and “best CRM with AI” don’t fight each other.

Canonicalize with a ruleset plus light entity resolution:

- Standardize spelling and casing, like “ecommerce” vs “e-commerce”.

- Normalize plurals and tense when intent stays identical.

- Resolve entities and aliases, like “GA4” vs “Google Analytics 4”.

- Apply locale rules, like “accountant” vs “chartered accountant”.

- Remove stopwords only when they don’t change intent.

Normalization is where your scoring stops being random.
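A minimal sketch of such a ruleset follows. The alias table, the stopword list, and the plural rule are illustrative assumptions. The plural folding in particular is deliberately naive and would need a proper lemmatizer, or an exceptions list, before production use.

```python
import re

# Hypothetical alias and spelling table; extend per client and locale.
ALIASES = {"google analytics 4": "ga4", "e-commerce": "ecommerce"}

# Stopwords assumed safe to drop. Words like "for", "with", or "near" are
# left out on purpose because they can change intent.
SAFE_STOPWORDS = {"the", "a", "an"}


def fold_plural(token: str) -> str:
    """Naive plural folding: drop one trailing 's' from longer tokens."""
    if len(token) > 3 and token.endswith("s") and not token.endswith("ss"):
        return token[:-1]
    return token


def normalize(query: str) -> str:
    """Lowercase, collapse whitespace, resolve aliases, drop safe stopwords."""
    q = re.sub(r"\s+", " ", query.lower().strip())
    for alias, canonical in ALIASES.items():
        q = q.replace(alias, canonical)
    tokens = [fold_plural(t) for t in q.split() if t not in SAFE_STOPWORDS]
    return " ".join(tokens)


print(normalize("The best  E-commerce CRMs"))  # best ecommerce crm
print(normalize("Google Analytics 4 setup"))   # ga4 setup
```

Word order is preserved, so "best ai crm" and "best CRM with AI" still end up as different strings. Collapsing those is left to the deduplication step.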

Deduplication rules

Expansion creates near-duplicates that look different but behave the same. You cluster them so you score one idea, not fifty spellings.

- Embed each query and run cosine similarity clustering.

- Add string similarity checks for short queries and typos.

- Split clusters when SERPs differ by intent or page type.

- Keep the representative with the highest volume, most links, or best rankings.

- Store the rest as synonyms for matching and reporting.

Your final list should be smaller than your ego, and stronger than your competitors.
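The embedding step can be sketched with a greedy pass over query vectors. Everything concrete here is an assumption: the three-dimensional vectors stand in for real embeddings, the 0.9 threshold is arbitrary, and the representative is chosen by volume alone rather than by volume, links, or rankings.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def greedy_clusters(vectors: dict[str, list[float]], threshold: float = 0.9) -> list[list[str]]:
    """Put each query in the first cluster whose seed query is similar enough."""
    clusters: list[list[str]] = []
    for query, vec in vectors.items():
        for cluster in clusters:
            if cosine(vec, vectors[cluster[0]]) >= threshold:
                cluster.append(query)
                break
        else:
            clusters.append([query])  # no match: start a new cluster
    return clusters


def pick_representative(cluster: list[str], volume: dict[str, int]) -> str:
    """Keep the query with the highest volume; the rest become synonyms."""
    return max(cluster, key=lambda q: volume.get(q, 0))


# Toy vectors standing in for real embeddings.
vectors = {
    "best ai crm": [0.90, 0.10, 0.00],
    "best crm with ai": [0.88, 0.15, 0.02],
    "crm pricing": [0.10, 0.90, 0.10],
}
clusters = greedy_clusters(vectors)
print(clusters)
# [['best ai crm', 'best crm with ai'], ['crm pricing']]
```

Greedy assignment depends on input order, so a library clustering routine may be a better fit at scale. The SERP-based split from the list above would run as a separate check on each resulting cluster.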

Intent Classification

Intent is inferred from what Google rewards and how people phrase the query. When the SERP is full of “Top 10” lists, your product page won’t stick.

Intent drives both content shape and conversion fit. Rank with the wrong page type, and you’ll convert the wrong visitors.

Intent types

You classify intent by the job the searcher is trying to finish. The same keyword can carry two jobs, like “notion alternatives,” which can be research or purchase-adjacent.

Informational queries want understanding, like “what is schema markup.” Commercial queries want options, like “best CRM for agencies.” Transactional queries want action, like “buy ahrefs” or “book a demo.” Navigational queries want a place, like “HubSpot login.”

Mixed intent is where money leaks. Treat it as a segmentation problem, not a category mistake.

SERP-as-label

The SERP already tells you what intent Google believes. You use those features as weak labels, then validate with traffic and conversion.

- Shopping ads, product grids, “Buy” buttons → transactional

- Listicles, “Top stories,” “People also ask” → informational

- Comparison pages, “Best,” review snippets → commercial

- Sitelinks, brand homepages, knowledge panels → navigational

If your target page type disagrees with the SERP, you’re fighting the grader.
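The weak-labeling idea can be sketched as a simple vote over observed SERP features. The feature-to-intent mapping follows the list above, but the feature identifiers are hypothetical names for whatever your SERP data source emits, and equal weighting of features is an assumption.

```python
from collections import Counter

# Mapping taken from the list above; the keys are hypothetical identifiers.
FEATURE_INTENT = {
    "shopping_ads": "transactional",
    "product_grid": "transactional",
    "buy_button": "transactional",
    "listicle": "informational",
    "top_stories": "informational",
    "people_also_ask": "informational",
    "comparison_page": "commercial",
    "best_title": "commercial",
    "review_snippet": "commercial",
    "sitelinks": "navigational",
    "brand_homepage": "navigational",
    "knowledge_panel": "navigational",
}


def weak_intent_labels(features: list[str]) -> dict[str, float]:
    """Return each intent's share of the recognized features, largest first."""
    votes = Counter(FEATURE_INTENT[f] for f in features if f in FEATURE_INTENT)
    total = sum(votes.values())
    if total == 0:
        return {}
    return {intent: count / total for intent, count in votes.most_common()}


print(weak_intent_labels(["comparison_page", "review_snippet", "people_also_ask", "best_title"]))
# {'commercial': 0.75, 'informational': 0.25}
```

Returning shares instead of a single label keeps mixed-intent SERPs visible, and the shares can serve as rough confidence scores until traffic and conversion data confirm them.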

Modifier signals

Modifiers are mechanical intent cues because they compress context into one word. “Best” often demands comparisons, while “pricing” demands numbers and terms.

“Near me” signals local pack eligibility and urgency. “Vs” signals evaluation, not education. “Pricing,” “cost,” and “quote” signal conversion readiness, even without a brand mentioned.

Modifiers don’t just change content. They change what you should measure.
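Because modifiers are mechanical cues, a small rule table is often enough for a first pass. The sketch below encodes only the modifiers named above; the signal names are hypothetical labels, not a standard taxonomy.

```python
import re

# Rules paraphrased from the text above; signal names are illustrative.
MODIFIER_RULES = [
    (r"\bnear me\b", "local"),
    (r"\bvs\.?\b", "evaluation"),
    (r"\bbest\b", "comparison"),
    (r"\b(pricing|cost|quote)\b", "conversion_ready"),
]


def modifier_signals(query: str) -> list[str]:
    """Return every modifier signal found in the query, in rule order."""
    q = query.lower()
    return [signal for pattern, signal in MODIFIER_RULES if re.search(pattern, q)]


print(modifier_signals("hubspot vs salesforce pricing"))
# ['evaluation', 'conversion_ready']
print(modifier_signals("emergency plumber near me"))
# ['local']
```

A query can carry more than one signal, which is why the function returns a list instead of a single label.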

Ambiguity handling

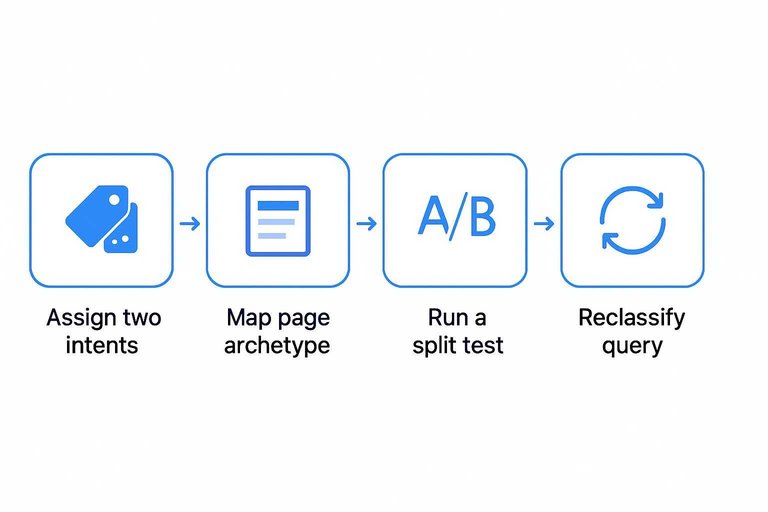

Some queries stay unclear even after SERP review. You treat those as multi-intent candidates until evidence collapses the options.

- Assign two intents with confidence scores, not one label.

- Map each intent to a page archetype you can actually ship.

- Check SERP stability across days and locations.

- Run a split test with two page types or modules.

- Keep the winner, and reclassify the query.

Ambiguity isn’t a blocker. It’s a prompt to test the page, not argue about the label.

Difficulty and Opportunity Scoring

Rankings correlate with three forces you can measure: authority, relevance, and the SERP’s shape. Authority decides if you’re allowed to compete, relevance decides if you deserve to compete, and SERP composition decides how many clicks exist to win.

If the page-one set is packed with brands, intent-matched formats, and sticky features, difficulty rises even when volume looks great. Score those forces consistently and your “hard” keywords stop being surprises.

Demand estimates

Search volume is a ceiling, not a forecast, so you estimate clicks instead of chasing ranges. You combine volume bands, position-based click curves, and SERP feature suppression to model what organic traffic is actually available.

Start with the midpoint of the volume range, then apply a click curve for your expected rank band. Reduce again for features that steal attention, like ads, local packs, and AI answers.

The number you want is “clicks you can capture,” not “searches people made.”
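The arithmetic can be sketched as below. Every number in the two lookup tables is a placeholder assumption, not a measured click curve or a published suppression rate, and multiplying the suppression factors together is one modeling choice among several.

```python
# Illustrative click-through rates by expected rank band (assumed values).
CTR_BY_RANK_BAND = {"1-3": 0.20, "4-6": 0.06, "7-10": 0.02}

# Illustrative share of clicks lost to each SERP feature (assumed values).
FEATURE_SUPPRESSION = {"ads": 0.15, "local_pack": 0.25, "ai_answer": 0.30}


def capturable_clicks(volume_low: int, volume_high: int,
                      rank_band: str, features: list[str]) -> float:
    """Estimate monthly organic clicks from a volume range and SERP shape."""
    midpoint = (volume_low + volume_high) / 2       # midpoint of the volume range
    clicks = midpoint * CTR_BY_RANK_BAND[rank_band]  # apply the click curve
    for feature in features:
        # Each known feature removes its share of the remaining clicks.
        clicks *= 1 - FEATURE_SUPPRESSION.get(feature, 0.0)
    return round(clicks, 1)


# 1,000-3,000 searches, expected rank 1-3, SERP shows ads and an AI answer:
# 2000 * 0.20 = 400, then * 0.85 = 340, then * 0.70 = 238
print(capturable_clicks(1000, 3000, "1-3", ["ads", "ai_answer"]))  # 238.0
```

Swap in a click curve from your own Search Console data as soon as you have enough impressions to build one.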

Competition signals

You score what’s already winning, because Google already told you what it trusts. The goal is to estimate your win-rate, not to argue with the SERP.

- Benchmark linking domains and anchor patterns

- Check topical authority across the whole domain

- Match the dominant content format and intent

- Verify freshness expectations and update cadence

- Note brand bias and SERP stability

When three signals stack against you, you’re buying a long timeline.

Gap detection

You look for competitor rankings you should own, then check if your site has a real home for them.

- Export competitor keywords, filtered to relevant intents.

- Cluster by offer, job-to-be-done, and funnel stage.

- Map clusters to existing URLs and note weak matches.

- Flag “no destination” clusters as net-new pages.

- Flag “thin destination” clusters as rebuild candidates.

A keyword gap is usually an information architecture gap wearing a keyword costume.
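The last three steps above reduce to a small classification per cluster. In this sketch, "thin" is approximated by a word-count threshold, which is an assumption; depth, intent match, or internal links may be better signals for your site.

```python
from typing import Optional


def classify_gap(url: Optional[str], word_count_by_url: dict[str, int],
                 thin_below: int = 300) -> str:
    """Label a cluster by the quality of the page it currently maps to."""
    if url is None or url not in word_count_by_url:
        return "no_destination"    # net-new page
    if word_count_by_url[url] < thin_below:
        return "thin_destination"  # rebuild candidate
    return "covered"


# Hypothetical site inventory: URL path -> word count.
pages = {"/invoice-tracking": 1200, "/pricing": 150}
print(classify_gap("/invoice-tracking", pages))  # covered
print(classify_gap("/pricing", pages))           # thin_destination
print(classify_gap(None, pages))                 # no_destination
```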

Composite scoring

Single metrics lie, so you blend four numbers into one: demand, win-rate, value, and effort. Each input gets a tunable weight, because a startup and an enterprise do not have the same constraints.

A common internal shape is: Priority = (Demand × Win-rate × Value) ÷ Effort, then weights adjust each term. You can overweight win-rate when you need quick proof, or overweight value when sales capacity is tight.

Tune the weights to match your business, then let the math be boring and consistent.
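Here is one way the formula could look in code. The text does not say how the weights enter the formula, so applying them as exponents is an assumption made for this sketch; the `Weights` type and its defaults are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Weights:
    """Exponent applied to each term; 1.0 leaves the term unchanged."""
    demand: float = 1.0
    win_rate: float = 1.0
    value: float = 1.0
    effort: float = 1.0


def priority(demand: float, win_rate: float, value: float, effort: float,
             w: Weights = Weights()) -> float:
    """Priority = (Demand x Win-rate x Value) / Effort, with exponent weights."""
    if effort <= 0:
        raise ValueError("effort must be positive")
    numerator = demand ** w.demand * win_rate ** w.win_rate * value ** w.value
    return numerator / effort ** w.effort


# 238 capturable clicks, 50% win-rate, value score 4, effort score 2.
print(priority(238, 0.5, 4, 2))                         # 238.0
# Overweighting win-rate penalizes the same keyword for its uncertainty.
print(priority(238, 0.5, 4, 2, Weights(win_rate=2.0)))  # 119.0
```

With exponent weights, raising the win-rate weight above 1 widens the gap between likely and unlikely wins, which matches the "quick proof" case described above.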

Clustering into Topics

A raw keyword list is just potential energy. Clustering turns it into a page plan that avoids “five pages targeting the same thing.”

Your goal is simple. One intent, one page, with supporting content around it.

Embedding clusters

Embeddings let you group keywords by meaning, not matching words. That keeps “best CRM for startups” close to “startup CRM software,” even with zero overlap.

You run each query through a vector model, then cluster by cosine similarity.

You validate clusters by checking SERP overlap, because meaning without intent is how thin pages happen.

If two queries share a SERP, treat them like one job.

SERP overlap test

Use SERP overlap when the embeddings look “maybe.” It’s the fastest way to confirm if Google thinks two queries are the same.

- Pull the top-10 organic URLs for query A.

- Pull the top-10 organic URLs for query B.

- Count shared URLs, ignoring sitelinks and tracking parameters.

- Decide: merge if 3+ overlap, split if 0–1 overlap.

- Sanity-check intent shifts like “template” vs “tool.”

If you see three or more shared URLs, you’re looking at one intent.
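The test above is easy to automate once you have the top-10 URLs for each query. This sketch drops the whole query string and fragment to neutralize tracking parameters, which is a simplification. The text gives no rule for exactly two shared URLs, so the "review" outcome is an assumption.

```python
from urllib.parse import urlsplit, urlunsplit


def strip_tracking(url: str) -> str:
    """Drop the query string, fragment, and trailing slash before comparing."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc.lower(),
                       parts.path.rstrip("/"), "", ""))


def overlap_decision(top10_a: list[str], top10_b: list[str]) -> tuple[int, str]:
    """Count shared organic URLs and apply the merge/split thresholds."""
    shared = {strip_tracking(u) for u in top10_a} & {strip_tracking(u) for u in top10_b}
    n = len(shared)
    if n >= 3:
        return n, "merge"
    if n <= 1:
        return n, "split"
    return n, "review"  # exactly 2: no rule in the text, so flag for a human


# Hypothetical result sets, truncated to four URLs each.
serp_a = ["https://a.com/x?utm_source=g", "https://b.com/y/", "https://c.com/z", "https://d.com/"]
serp_b = ["https://a.com/x", "https://b.com/y", "https://c.com/z#top", "https://e.com/"]
print(overlap_decision(serp_a, serp_b))  # (3, 'merge')
```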

Canonical keyword choice

Each cluster needs one canonical query to steer the page. Without it, you’ll write a page that targets “everything” and ranks for nothing.

- Prefer the clearest intent signal.

- Prefer the query whose SERP leaves the most room for organic clicks.

- Prefer the query matching your product vocabulary.

- Prefer the query with a linkable angle.

- Prefer the query with stable volume.

Pick the canonical keyword, then write the page title like you mean it.

Cannibalization prevention

Clusters only work if they map cleanly to URLs. Agencies assign a single “URL owner” per cluster, so two teams don’t publish competing pages.

They also set consolidation rules for legacy overlap.

Merge duplicates, 301 the weaker page, and keep one updated URL as the source of truth.

Coordination beats cleanup every time.

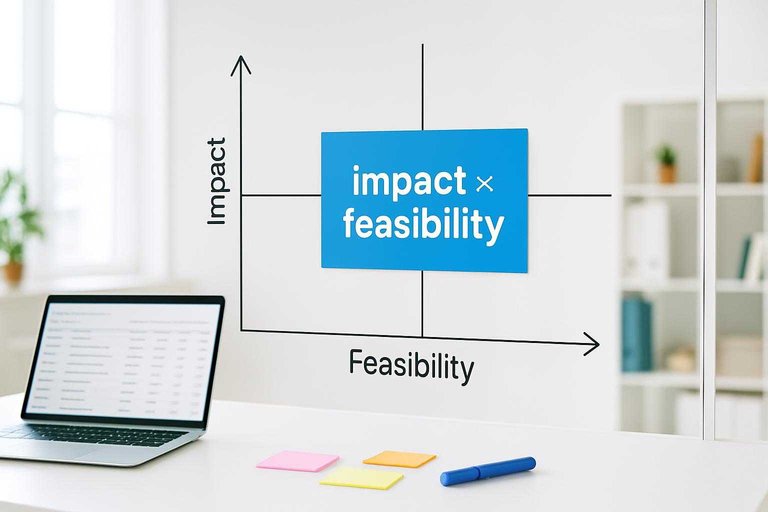

Prioritization Matrix

You need a way to choose between keyword clusters without defaulting to “highest volume wins.” A simple impact × feasibility matrix makes tradeoffs explicit and defensible.

| Cluster signal | Impact | Feasibility | Default move |
|---|---|---|---|
| High intent + weak SERP | High | Medium | Publish first |
| High intent + strong SERP | High | Low | Earn links, wait |
| Mid intent + easy SERP | Medium | High | Fill gaps fast |
| Low intent + hard SERP | Low | Low | Ignore for now |
| Strategic + low volume | Medium | Medium | Build pillar page |

| Strategic + low volume | Medium | Medium | Build pillar page |

You’re not picking keywords. You’re picking battles your site can win this quarter.
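If you keep clusters in a script or notebook, the matrix can live next to the data as a plain lookup. The mapping mirrors the table above. The fallback for combinations the table does not cover is an assumption.

```python
# (impact, feasibility) -> default move, copied from the matrix above.
DEFAULT_MOVES = {
    ("high", "medium"): "Publish first",
    ("high", "low"): "Earn links, wait",
    ("medium", "high"): "Fill gaps fast",
    ("low", "low"): "Ignore for now",
    ("medium", "medium"): "Build pillar page",
}


def default_move(impact: str, feasibility: str) -> str:
    """Look up the default move; uncovered combinations go to a human."""
    key = (impact.lower(), feasibility.lower())
    return DEFAULT_MOVES.get(key, "Needs strategist review")


print(default_move("High", "Low"))   # Earn links, wait
print(default_move("Low", "High"))   # Needs strategist review
```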

Page Mapping Blueprint

You need a one-to-one match between each keyword cluster and a page that can own it. Without that map, you get cannibalization, thin pages, and endless “quick fixes.”

- Assign each cluster to one primary URL candidate and one page type.

- Score the existing page against intent, depth, and internal-link fit.

- Decide the action: update, merge, create, or leave alone.

- Write a one-paragraph page brief: promise, sections, and primary entities.

- Set a review trigger based on rank volatility and content decay signals.

If two clusters want the same promise, you don’t need two pages. You need one better page.

Operational Workflow

Keyword research only works when it keeps changing with your market. AI gives you speed and coverage, while humans protect meaning, risk, and business fit.

Treat it like a living system. Not a spreadsheet you finish once.

Roles and reviews

AI drafts the first pass because breadth matters, fast. Strategists review because the word “best” can mean research, not purchase.

AI proposes assumptions, intent labels, and preliminary scores for every cluster. Strategists approve or override three things: the intent call, the business promise, and the final prioritization. That’s the line between “rankable” and “worth ranking for.”

Feedback loops

Use performance data to update what you believe, monthly.

- Pull rankings, clicks, conversions, and assisted conversions by URL.

- Detect SERP shifts: new features, new competitors, rewritten titles.

- Reweight scoring factors using outcomes, not opinions.

- Refresh clusters and intent labels, then flag broken mappings.

- Reprioritize the roadmap and queue new content tests.

If you don’t change the weights, you’re just collecting metrics.

Quality controls

AI moves fast, so your checks must be boring and strict.

- Flag hallucinated entities and unsupported claims.

- Detect brand conflicts, legal risks, and restricted terms.

- Merge or split duplicate clusters with overlapping SERPs.

- Catch intent vs page-type mismatches before briefs.

Fix the system first. Ideas are easy.

Reporting artifacts

You ship artifacts people can argue with, not vibes. A simple example: “We dropped ‘pricing’ keywords after low-converting demo traffic.”

Deliver four outputs: a keyword universe, a cluster map, a scoring rationale, and a changelog explaining priority moves. The changelog is the trust layer, because it shows your model learned something real.

Turn the model into a repeatable system

AI-assisted keyword research works when every step produces an artifact you can audit: inputs and guardrails, an expanded and deduped universe, intent labels grounded in SERPs, and transparent opportunity scores. From there, clustering and cannibalization checks turn keywords into topic units, and the prioritization matrix and page map turn topic units into publishable decisions. Treat the workflow as a loop—ship pages, measure outcomes, feed wins and misses back into the next round—so your research gets sharper every sprint.

Frequently Asked Questions

- Does an AI SEO agency still use tools like Ahrefs, Semrush, or Google Search Console for keyword research?

  Yes. Most AI SEO agencies combine LLM-driven discovery with tool data for validation, including Ahrefs/Semrush for SERP metrics and Google Search Console for real queries, impressions, and cannibalization signals.

- How do you measure whether an AI SEO agency’s keyword research actually worked?

  Track keyword-to-page outcomes: indexation and impressions in 2–4 weeks, ranking movement and CTR in 4–8 weeks, and qualified leads/revenue attribution in 8–16+ weeks using GA4, Search Console, and a rank tracker.

- How long does it take an AI SEO agency to complete keyword research for a new site?

  A typical first pass takes 3–10 business days for small to mid-size sites, while enterprise sites usually take 2–6 weeks due to approvals, analytics access, and larger content inventories.

- Can an AI SEO agency do keyword research without access to my analytics or Search Console?

  Yes, but results are usually less accurate because the agency can’t see existing query demand, page-level performance, or internal cannibalization; you’ll rely more on third-party SERP data and competitor signals.

- Is an AI SEO agency the same as using an SEO AI tool like Surfer, Clearscope, or ChatGPT?

  No. Tools help with parts of the process, but an AI SEO agency adds strategy, QA, and implementation coordination across technical SEO, content planning, and performance measurement.

Turn Keywords Into Content

A solid AI SEO agency workflow is only valuable if it turns research, clustering, and prioritization into consistent, published pages your site can rank for.

Skribra converts your keyword research into daily SEO-optimized articles with WordPress publishing, images, and backlinks built in—start with the 3-Day Free Trial.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
