April 4, 2026 · 8 min read

How Website Domain Authority Is Calculated, Explained

An explainer on how website Domain Authority is calculated and why it changes: what authority predicts (and doesn’t), how the link graph works, how crawling and canonicalization affect what counts, which authority signals get modeled, and how raw values become a calibrated logarithmic score.

Why did your Domain Authority drop even though you gained links—and why does a competitor with fewer backlinks sometimes outrank you? Authority scores can feel like a black box because the number you see is the final output of a much messier system underneath.

In this explainer, you’ll learn what authority is actually trying to predict, how link graphs propagate value, how crawl/index decisions and canonicalization change what “counts,” which signal groups models typically learn from, and why the final score is almost always logarithmic and calibrated.

Authority, Defined

Domain authority is a predictive ranking proxy, not a metric Google shows or updates. Treat it like a credit score for links: useful for comparisons, not a statement of truth. The reason you see different numbers is simple: every tool measures a different web and then scales it differently.

What it predicts

Domain authority predicts how likely your site is to rank compared to similar sites. It leans on link-based signals, then compresses them into a relative score on a curve.

Picture two SaaS blogs with similar content, where one has more high-quality referring domains. The model expects that one to win more often in search.

Use it to pick battles, not to declare victory.

What it is not

Domain authority gets misused because the number feels official. It isn’t, and it won’t tell you what Google “thinks” today.

- Not used by Google’s ranking systems

- Not a traffic or revenue score

- Not a penalty or spam verdict

- Not identical across providers

If you need a diagnosis, look at rankings and links, not the label.

Why scores vary

Authority scores vary because tools don’t see the same internet. Each vendor runs its own crawler, builds its own link graph, and trains its own model.

One index might find a strong industry directory link; another might miss it for months. Then both tools rescale results, so “40” in one world isn’t “40” in another.

Pick one tool for tracking, or you’ll argue with math.

The Link Graph Core

Domain Authority is a statistical score computed over a link graph, not a vibe check. Think of the web as a map where structure encodes trust and popularity, like citations in academic papers. When someone says “DA is about links,” they mean math on that map.

Nodes and edges

In the link graph, pages or domains are nodes, and hyperlinks are directed edges pointing from one node to another. Internal links create edges inside your own graph, while external links connect you to the wider web and bring in outside influence.
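To make the node-and-edge picture concrete, here is a minimal sketch, assuming a crawler has already extracted hyperlinks as (source URL, target URL) pairs. The function name `build_link_graph` and the example URLs are hypothetical, and the sketch calls an edge internal only when both URLs share the exact same hostname; a real tool would more likely group hosts by registrable domain.

```python
from collections import defaultdict
from urllib.parse import urlsplit

def build_link_graph(links):
    """Build a directed page-level graph from (source_url, target_url) pairs.

    Returns the adjacency sets plus separate lists of internal edges
    (same hostname) and external edges (different hostnames).
    """
    graph = defaultdict(set)
    internal, external = [], []
    for source, target in links:
        graph[source].add(target)  # directed edge: source -> target
        if urlsplit(source).hostname == urlsplit(target).hostname:
            internal.append((source, target))
        else:
            external.append((source, target))
    return graph, internal, external

# Hypothetical crawl output: each pair is one hyperlink.
links = [
    ("https://example.com/", "https://example.com/pricing"),
    ("https://example.com/blog/post", "https://example.com/"),
    ("https://news.example.org/review", "https://example.com/"),
    ("https://example.com/", "https://docs.example.net/api"),
]
graph, internal, external = build_link_graph(links)
print(len(internal), len(external))  # 2 2
```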

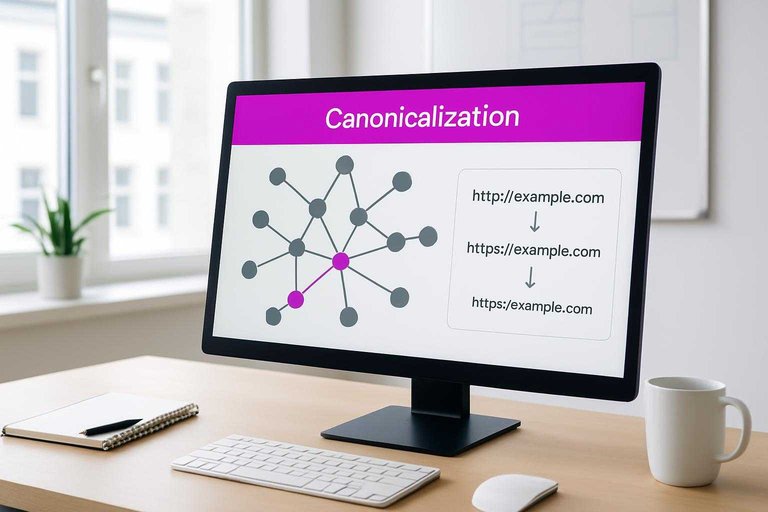

Canonicalization changes the graph you’re measured on, because duplicates split signals across multiple nodes. If your site resolves as both “http” and “https,” you just created parallel worlds. Fix the graph first. (See Google’s guidance on consolidating duplicate URLs.)
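A small illustration of how duplicates split signals, assuming you already know which URL variants are duplicates: counting distinct referring domains per node shows each variant holding half the endorsements until the two nodes are merged. The data, the function name, and the `canonical_of` mapping are hypothetical.

```python
def referring_domains_per_node(links, canonical_of=None):
    """Count distinct referring domains per target node.

    canonical_of maps duplicate URLs to one canonical URL; without it,
    every URL variant is treated as a separate node.
    """
    canonical_of = canonical_of or {}
    sources = {}
    for ref_domain, target in links:
        node = canonical_of.get(target, target)
        sources.setdefault(node, set()).add(ref_domain)
    return {node: len(refs) for node, refs in sources.items()}

# Hypothetical inbound links: (referring domain, target URL).
inbound = [
    ("blog-a.example", "http://example.com/"),
    ("blog-b.example", "https://example.com/"),
    ("news-c.example", "http://example.com/"),
    ("forum-d.example", "https://example.com/"),
]
print(referring_domains_per_node(inbound))
# {'http://example.com/': 2, 'https://example.com/': 2}
print(referring_domains_per_node(
    inbound, canonical_of={"http://example.com/": "https://example.com/"}))
# {'https://example.com/': 4}
```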

Quality over quantity

More links help only when they act like credible endorsements. The model discounts edges that look cheap, irrelevant, or manipulated.

- Source strength from trusted, authoritative sites

- Relevance to your topic and neighbors

- Placement in-body, not footer clutter

- Follow vs nofollow and other link attributes

- Spam likelihood based on patterns

A thousand weak edges can still be a weak graph.
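One way to picture the discounting is a per-link value that multiplies the factors listed above. This is a sketch under made-up assumptions: the `Link` fields, the 0.3 footer multiplier, and the 0.1 nofollow multiplier are illustrative, not any vendor’s published weights (some tools may ignore nofollow links entirely). With these numbers, one strong editorial link is worth about 0.72, while a thousand spammy footer links add up to only about 0.08.

```python
from dataclasses import dataclass

@dataclass
class Link:
    source_strength: float  # 0..1, how authoritative the linking site looks
    relevance: float        # 0..1, topical match between source and target
    in_body: bool           # editorial, in-content placement vs footer clutter
    nofollow: bool          # rel="nofollow" or a similar attribute is present
    spam_likelihood: float  # 0..1, output of a separate spam classifier

# Hypothetical multipliers, chosen only for illustration.
FOOTER_FACTOR = 0.3
NOFOLLOW_FACTOR = 0.1

def link_value(link: Link) -> float:
    """Equity one link passes after quality discounts (illustrative)."""
    # Relevance scales value between 50% and 100% of source strength.
    value = link.source_strength * (0.5 + 0.5 * link.relevance)
    if not link.in_body:
        value *= FOOTER_FACTOR
    if link.nofollow:
        value *= NOFOLLOW_FACTOR
    # Discount by the estimated chance that the link is manipulative.
    return value * (1.0 - link.spam_likelihood)

# One strong editorial link vs. a thousand spammy footer links.
strong = [Link(0.8, 0.9, True, False, 0.05)]
weak = [Link(0.01, 0.1, False, False, 0.95)] * 1000
print(round(sum(map(link_value, strong)), 3))  # 0.722
print(sum(map(link_value, weak)))
```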

Propagation intuition

Authority “flows” through links like probability moving across edges, where each link passes a slice of credit onward. Multiple independent endorsements compound, because they create more paths into your node and reduce reliance on any single source.

Isolated clusters stagnate, since credit mostly circulates inside the bubble. If your best pages have no strong paths from the wider web, your ceiling stays low, so it can help to pair link-building with AI tools that boost organic traffic, improve discovery, and attract natural endorsements.
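The flow intuition matches the classic PageRank formulation, used here as a stand-in because providers do not publish their exact propagation formulas. This minimal power-iteration sketch (damping factor 0.85, hypothetical node names) compares a page endorsed by well-connected hubs with one that sits in a small island whose pages link out but receive no links from the wider web.

```python
def pagerank(graph, damping=0.85, iterations=100):
    """Classic PageRank by power iteration.

    graph: node -> list of nodes it links to. Nodes with no outlinks
    (dangling nodes) spread their score evenly over every node.
    """
    nodes = set(graph) | {t for targets in graph.values() for t in targets}
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        dangling = sum(rank[node] for node in nodes if not graph.get(node))
        # Every node gets the "random jump" share plus its cut of dangling mass.
        new_rank = {node: (1.0 - damping + damping * dangling) / n for node in nodes}
        for source, targets in graph.items():
            if targets:
                share = damping * rank[source] / len(targets)  # credit per edge
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical graph: three hubs endorse target_linked; target_isolated
# sits in a two-page island that links out but receives no outside links.
web = {
    "hub1": ["hub2", "hub3", "target_linked"],
    "hub2": ["hub1", "hub3", "target_linked"],
    "hub3": ["hub1", "hub2", "target_linked"],
    "target_linked": ["hub1"],
    "island1": ["target_isolated", "hub1"],
    "target_isolated": ["island1", "hub2"],
}
ranks = pagerank(web)
print(round(ranks["target_linked"], 3), round(ranks["target_isolated"], 3))
```

With these inputs `target_linked` ends up with about five times the score of `target_isolated`: the island only ever receives the baseline random-jump share and keeps leaking part of it outward.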

Crawl and Canonicalize

Domain Authority starts as a dataset problem, not a math problem. Providers crawl pages, dedupe messy URLs, then group pages into domains that get scored. Think: “one page, one canonical URL, one domain bucket.”

Crawl coverage limits

No crawler sees the whole web because crawling is expensive and the web is evasive. Even big providers make tradeoffs that skew what they find and how often they update it.

Coverage usually breaks down along a few constraints:

- Crawl budget caps how many URLs get fetched per day.

- Discovery depends on links, sitemaps, and searchable surfaces.

- Robots.txt and noindex shut doors, sometimes by accident.

- JavaScript rendering costs more, so some pages stay invisible.

- Recency favors popular pages, so long-tail pages age out.

If your pages are hard to discover or render, they barely exist in the scoring universe.
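The constraints above can be simulated with a toy breadth-first crawler that has a fixed fetch budget, a robots.txt block list, and pages whose links only exist after JavaScript rendering. Everything here, including the `crawl` function and the link map standing in for fetched HTML, is a hypothetical simplification; it models robots.txt blocking only, not noindex.

```python
from collections import deque

def crawl(seeds, outlinks, budget, disallowed=frozenset(),
          needs_js=frozenset(), render_js=False):
    """Breadth-first crawl that stops after `budget` successful fetches.

    outlinks:   url -> list of linked urls (stands in for parsed HTML)
    disallowed: urls blocked by robots.txt, so they are never fetched
    needs_js:   urls whose links only appear after JavaScript rendering
    """
    fetched, seen = set(), set(seeds)
    queue = deque(seeds)
    while queue and len(fetched) < budget:
        url = queue.popleft()
        if url in disallowed:
            continue  # robots.txt shuts the door; no budget spent
        fetched.add(url)
        if url in needs_js and not render_js:
            continue  # page fetched, but its links stay invisible
        for link in outlinks.get(url, []):
            if link not in seen:  # discovery happens only through links
                seen.add(link)
                queue.append(link)
    return fetched

# Hypothetical site: "app" only exposes its links after rendering.
outlinks = {
    "home": ["blog", "private", "app"],
    "blog": ["post1", "post2"],
    "app": ["feature"],
    "post1": ["post3"],
}
rules = {"disallowed": {"private"}, "needs_js": {"app"}}
print(sorted(crawl(["home"], outlinks, budget=3, **rules)))
# ['app', 'blog', 'home']
print("feature" in crawl(["home"], outlinks, budget=10, **rules))  # False
print("feature" in crawl(["home"], outlinks, budget=10, render_js=True, **rules))  # True
```

With a budget of three fetches the crawler never reaches the blog posts, and even with a generous budget, `feature` stays undiscovered unless rendering is enabled.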

URL normalization rules

Before scoring, providers try to decide which URLs are “the same page” and which are separate. Small differences can either merge equity or split it.

Common merges and splits:

- Merge http and https versions.

- Merge www and non-www hosts.

- Merge trailing slash variants.

- Split or merge URL parameters.

- Follow 301/302 redirect chains.

- Respect rel=canonical signals.

- Split subdomains from root.

If normalization disagrees with your setup, your link equity gets scattered.
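The rules above could be implemented roughly like this with Python’s standard `urllib.parse`. The policy choices are assumptions for illustration: forcing `https`, stripping only a leading `www.`, dropping a hypothetical list of tracking parameters and sorting the rest, and reading redirect and `rel=canonical` targets from dictionaries instead of live HTTP requests. Other subdomains stay separate nodes.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical policy: query parameters treated as tracking noise.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}

def normalize_url(url, redirects=None, canonicals=None, max_hops=5):
    """Collapse URL variants into one key before link equity is counted."""
    redirects = redirects or {}
    canonicals = canonicals or {}
    for _ in range(max_hops):  # follow known 301/302 hops, bounded for loops
        if url not in redirects:
            break
        url = redirects[url]
    url = canonicals.get(url, url)  # respect rel=canonical when one is known

    parts = urlsplit(url)
    host = (parts.hostname or "").lower()  # hostname is lowercase, port dropped
    if host.startswith("www."):
        host = host[4:]  # merge www and non-www; other subdomains stay split
    path = parts.path.rstrip("/") or "/"  # merge trailing-slash variants
    params = sorted(
        (k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in TRACKING_PARAMS
    )
    # Force https so http and https collapse, and drop the #fragment.
    return urlunsplit(("https", host, path, urlencode(params), ""))

print(normalize_url("http://www.Example.com/Pricing/?utm_source=x&b=2&a=1#top"))
# https://example.com/Pricing?a=1&b=2
print(normalize_url("https://blog.example.com/"))
# https://blog.example.com/
```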

Link index freshness

The link graph is moving even when your site is quiet. Providers refresh by re-crawling pages, dropping dead URLs, and adding newly discovered links.

A single re-crawl can flip a key linking page from “still linking” to “gone,” which changes your inbound counts. Another crawl might finally render a JavaScript page and discover links that were always there.

Your score can shift from index updates alone, so watch trends, not single-day spikes.
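Two habits follow from this: diff consecutive index snapshots to see which links actually appeared or vanished, and smooth the score history before reacting to it. A minimal sketch with hypothetical link pairs and a made-up weekly score series; the three-point rolling median is one simple smoothing choice among many.

```python
from statistics import median

def diff_snapshots(old, new):
    """Compare two index snapshots, each a set of (source, target) links."""
    return {"lost": old - new, "new": new - old, "kept": old & new}

def rolling_median(values, window=3):
    """Median of each trailing window, so one odd update can't dominate."""
    return [median(values[max(0, i - window + 1): i + 1])
            for i in range(len(values))]

# Hypothetical snapshots: one link died, one JS-rendered link was found.
old = {("a.example/review", "site.example/"), ("b.example/list", "site.example/")}
new = {("b.example/list", "site.example/"), ("c.example/app", "site.example/")}
changes = diff_snapshots(old, new)
print(changes["lost"])  # {('a.example/review', 'site.example/')}
print(changes["new"])   # {('c.example/app', 'site.example/')}

# Made-up weekly scores with a one-off dip after an index update.
history = [41, 41, 36, 42, 42]
print(rolling_median(history))  # the week-3 dip is smoothed away
```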

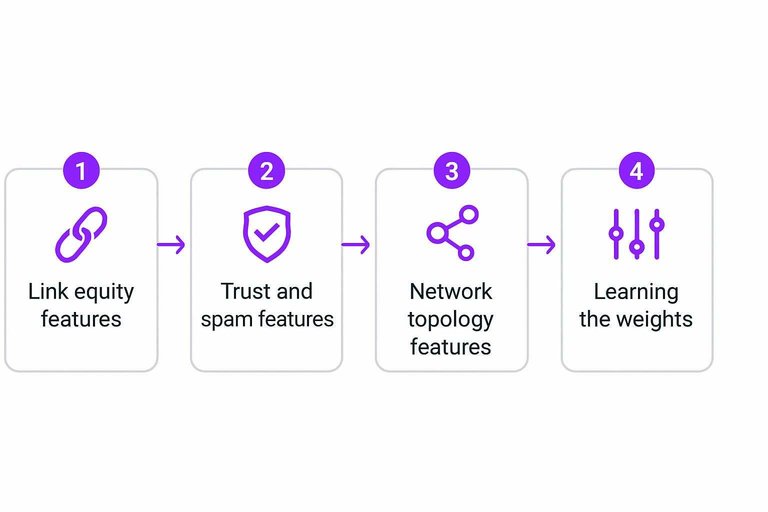

Modeling Authority Signals

Domain Authority-style scores come from features computed on a crawler’s link index, not from your analytics. Each feature tries to capture one idea: how much ranking power a domain can pass, and how reliably it earns it.

Link equity features

Raw counts are the starting point, and counting distinct referring domains rather than individual links makes them harder to fake at scale. Think “200 referring domains” versus “200 links from one directory.”

Weighted counts add realism by down-weighting low-value sources and repeated patterns.

Referring domains and unique IPs approximate independent endorsements, not one network’s push. Follow share estimates how much equity is allowed to flow. Link diversity spreads risk across pages, topics, and sources. Diminishing returns caps the lift from the 50th similar link, because the 5th already made the point.

If your growth comes from one tactic, the model treats it like one vote, not fifty. (Ahrefs explains this kind of diminishing returns from multiple links from the same domain.)
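Diminishing returns can be sketched by letting each referring domain contribute `log2(1 + links)` rather than its raw link count. The log2 curve is an assumption chosen for illustration, not a documented vendor formula, but it reproduces the idea: 200 links from one directory score about 7.65, while one link each from 200 different sites scores 200.

```python
import math
from collections import Counter

def referring_domain_score(link_domains):
    """Sum log2(1 + links) per referring domain (illustrative curve).

    The first link from a domain adds 1.0; each further link from the
    same domain adds less, so repeats from one source look like one vote.
    """
    counts = Counter(link_domains)
    return sum(math.log2(1 + n) for n in counts.values())

one_directory = ["directory.example"] * 200
many_sites = [f"site{i}.example" for i in range(200)]
print(round(referring_domain_score(one_directory), 2))  # 7.65
print(referring_domain_score(many_sites))               # 200.0

# Marginal lift of the 50th link from the same domain:
print(round(math.log2(51) - math.log2(50), 3))  # 0.029
```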

Trust and spam features

These features discount equity when the pattern looks manufactured or risky. They’re not “penalties” so much as skeptical math.

- Link-farm concentration across shared domains

- Unnatural anchor text repetition at scale

- Sitewide footer bursts from templates

- Poisoned neighborhoods of spam-adjacent sites

- Rapid churn in links and domains

Clean links matter, but stable patterns matter more than one-time spikes.
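Several of the patterns above reduce to simple share and churn ratios over a link profile. This sketch computes four of them (anchor concentration, source-domain concentration, sitewide share, and churn between snapshots); the dictionary keys, the example profile, and the idea of feeding these ratios into a model are assumptions, and no thresholds are implied. Neighborhood-level features need the wider graph and are left out.

```python
from collections import Counter

def spam_features(links, previous_sources):
    """Pattern-based risk ratios for a link profile (illustrative).

    links: dicts with "source_domain", "anchor", and "sitewide" keys
    previous_sources: source domains seen in the prior index snapshot
    """
    if not links:
        return {}
    total = len(links)
    anchors = Counter(link["anchor"].lower() for link in links)
    domains = Counter(link["source_domain"] for link in links)
    current = set(domains)
    return {
        # Share of links that reuse the single most common anchor text.
        "top_anchor_share": anchors.most_common(1)[0][1] / total,
        # Share of links from the busiest source domain (farm concentration).
        "top_domain_share": domains.most_common(1)[0][1] / total,
        # Share of links injected by sitewide templates (footers, sidebars).
        "sitewide_share": sum(link["sitewide"] for link in links) / total,
        # Churn: source domains that appeared or vanished between snapshots.
        "churn": len(current ^ previous_sources) / len(current | previous_sources),
    }

# Hypothetical profile dominated by one templated footer network.
farm = {"source_domain": "farm1.example", "anchor": "Best Cheap Widgets", "sitewide": True}
links = [farm] * 8 + [
    {"source_domain": "news.example", "anchor": "Acme", "sitewide": False},
    {"source_domain": "blog.example", "anchor": "this review", "sitewide": False},
]
print(spam_features(links, previous_sources={"news.example", "old.example"}))
```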

Network topology features

Graph metrics look past totals and ask where you sit in the web’s structure. A small site linked by “hubs” can outrank a bigger site linked by nobody important.

Centrality estimates how often your domain lies on important link paths. Distance to trusted seed sites acts like a credibility gradient, with closer usually meaning safer. Reciprocity flags tight exchange loops, which can signal coordination. Community structure checks whether your links come from many clusters, not one clique.

When topology looks organic, authority becomes harder to break with one spammy burst.
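Two of the topology ideas are easy to sketch on a small domain graph: reciprocity as the share of a domain’s outlinks that link straight back, and distance to trusted seeds via breadth-first search. The graph, the seed choice, and both function names are hypothetical; centrality and community detection usually rely on heavier graph tooling and are not shown.

```python
from collections import deque

def reciprocity(graph, node):
    """Share of a node's outlinks whose targets link straight back."""
    out = graph.get(node, set())
    if not out:
        return 0.0
    mutual = sum(1 for target in out if node in graph.get(target, set()))
    return mutual / len(out)

def seed_distance(graph, seeds):
    """Fewest link hops from any trusted seed to each reachable node (BFS)."""
    dist = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        for target in graph.get(node, set()):
            if target not in dist:
                dist[target] = dist[node] + 1
                queue.append(target)
    return dist

# Hypothetical domain graph; swap1 and swap2 trade links in a tight loop.
graph = {
    "university.example": {"journal.example", "smallsite.example"},
    "journal.example": {"bigsite.example"},
    "bigsite.example": {"swap1.example"},
    "swap1.example": {"bigsite.example", "swap2.example"},
    "swap2.example": {"swap1.example"},
}
distances = seed_distance(graph, ["university.example"])
print(distances["smallsite.example"], distances["bigsite.example"])  # 1 2
print(reciprocity(graph, "swap1.example"))  # 1.0
```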

Learning the weights

Most modern systems learn feature weights by predicting outcomes like “who ranks top 10” for a query set. The model adjusts until its authority signals align with observed SERP winners.
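As a sketch of what “learning the weights” can mean, the snippet below fits a logistic regression by batch gradient descent so that two features predict a top-10 label. Logistic regression is a stand-in chosen for clarity, since vendors do not publish their model families, and the eight training rows are synthetic: feature 0 is a scaled referring-domain signal and feature 1 a spam share.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def predict(weights, bias, x):
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))

def train_logistic(rows, labels, lr=0.5, epochs=2000):
    """Batch gradient descent on log loss; labels are 1 for top 10, else 0."""
    weights, bias = [0.0] * len(rows[0]), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for x, y in zip(rows, labels):
            err = predict(weights, bias, x) - y  # d(log loss)/dz for this row
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        n = len(rows)
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

# Synthetic rows: [scaled referring-domain signal, spam share].
rows = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.1], [0.6, 0.2],
        [0.3, 0.1], [0.2, 0.8], [0.7, 0.9], [0.4, 0.6]]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
weights, bias = train_logistic(rows, labels)
print([round(w, 2) for w in weights], round(bias, 2))
```

On this toy data the referring-domain weight comes out positive and the spam weight negative; a real system would also hold out queries to check that the fit generalizes.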

Weights shift because the web shifts. Local intent favors proximity and citations, while ecommerce favors brand mentions and category hubs. Mobile SERPs amplify different competitors and layouts, so the same link graph can map to different winners.

If your keyword set changes, your “best” authority profile changes with it—see this SEO guide for practical context.

From Raw to Score

Domain Authority starts as an internal number the tool’s model computes, not a public score you can compare. Then it gets normalized and mapped onto a familiar scale, like “DA 0–100,” so humans can read it.

Raw score creation

The raw authority score is a continuous metric computed from many features, like linking root domains, link quality signals, and spam indicators. Think “model score 3.7” or “-1.2,” the kind of number a ranking model produces before anyone slaps a label on it.

It is not bounded, and it drifts as the web changes. It is also not directly comparable across different crawls, indexes, or vendors.

Treat it like an internal credit score: useful for ordering domains, not for bragging rights.

Calibration steps

You need calibration so your score means the same thing tomorrow as it does today.

- Pick a reference set of domains across many niches and sizes.

- Fit a mapping from raw score to a target scale.

- Smooth outliers so one weird site does not warp the curve.

- Validate the mapped score against real ranking outcomes.

- Retrain periodically as link graphs and spam tactics shift.

If your reference set is weak, every “DA change” is just noise with confidence.
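The first few steps can be sketched as a linear rescale of clipped raw scores, assuming the reference set’s 1st and 99th percentiles define the ends of the 0 to 100 scale. The function name, the percentile cutoffs, and the synthetic reference data are hypothetical; validation against rankings and periodic retraining would wrap around this, for example by refitting on a fresh reference set after each index update.

```python
def fit_calibration(reference_raw, low_pct=0.01, high_pct=0.99):
    """Fit a raw-score -> 0..100 mapping on a reference set of domains.

    Raw scores are clipped to the reference set's low/high percentiles
    (winsorizing) so one extreme domain cannot stretch the scale, then
    rescaled linearly between those two cutoffs.
    """
    ordered = sorted(reference_raw)
    last = len(ordered) - 1
    lo = ordered[round(low_pct * last)]
    hi = ordered[round(high_pct * last)]
    if hi <= lo:
        raise ValueError("reference set is too narrow to calibrate")

    def score(raw):
        clipped = min(max(raw, lo), hi)
        return 100.0 * (clipped - lo) / (hi - lo)

    return score

# Synthetic reference set: raw scores from -2.0 to 8.0 plus one giant outlier.
reference = [i / 10 for i in range(-20, 81)] + [500.0]
to_da = fit_calibration(reference)
print(to_da(8.0), to_da(500.0), to_da(-5.0))  # 100.0 100.0 0.0
```

Without the clipping step, the single 500.0 outlier would set the top of the scale, and a domain with raw score 8.0 would map to roughly 2 instead of 100.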

Why it’s logarithmic

Link signals are heavy-tailed. A few sites have absurd link equity, and most sites have little.

A linear scale would make the top end unreadable, because giants would blow past 100 fast. A logarithmic mapping compresses the extremes, so moving from 20 to 30 takes far less additional link equity than moving from 70 to 80.

That’s why “gaining 10 DA points” is not one thing. It depends where you start.
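A minimal sketch of a logarithmic mapping, assuming a hypothetical `log10` curve normalized so the strongest domain in the index (here an invented `MAX_EQUITY` of one billion units) sits at 100. Inverting the curve shows how much raw equity each ten-point step costs.

```python
import math

MAX_EQUITY = 1e9  # hypothetical raw equity of the strongest domain in the index

def log_score(equity, max_equity=MAX_EQUITY):
    """Map raw link equity onto 0..100 with a log10 curve (illustrative)."""
    return 100 * math.log10(1 + equity) / math.log10(1 + max_equity)

def equity_for_score(score, max_equity=MAX_EQUITY):
    """Invert log_score: raw equity needed to reach a given score."""
    return 10 ** (score / 100 * math.log10(1 + max_equity)) - 1

for start, end in [(20, 30), (70, 80)]:
    extra = equity_for_score(end) - equity_for_score(start)
    print(f"{start} -> {end}: about {extra:,.0f} more equity units")
```

Under these assumptions, going from 20 to 30 needs about 438 additional units, while 70 to 80 needs about 13.9 million.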

Use Authority Scores the Right Way

Treat Domain Authority as a relative, comparative indicator—not a guarantee of rankings or a KPI to chase in isolation. When it moves, look first at what changed in the link graph and index (lost links, newly discovered links, canonical shifts, crawl coverage, freshness), then evaluate link quality and topical relevance before blaming “the score.” Use it to benchmark against real SERP competitors, prioritize high-impact link and content efforts, and track progress over time in the context of your market, not across unrelated sites.

Frequently Asked Questions

- Why is my website domain authority different in Moz, Ahrefs, and Semrush?

- Because each tool uses its own crawl index, link discovery speed, and scoring model, the same site can get different authority scores. Compare trends over time within one tool rather than trying to “match” scores across platforms.

- How often does website domain authority update, and how long do changes take to show up?

- Most tools refresh domain authority on a rolling basis, typically weekly to monthly, depending on how often they recrawl your links. New links or lost links usually take 2 to 8 weeks to be reflected in the score.

- What’s a “good” website domain authority score for a new site versus an established site?

- New sites often start in the 1–20 range, while established brands commonly sit 40–80+, with 90+ usually reserved for major global platforms. The best benchmark is your direct SERP competitors for the keywords you want to rank for.

- How can I measure whether website domain authority is actually improving SEO results?

- Track domain authority alongside Google Search Console clicks/impressions and keyword rankings (e.g., via STAT, Semrush, or Ahrefs) to see if score gains align with organic growth. If DA rises but traffic and rankings don’t move in 8–12 weeks, the new links may not be relevant or strong enough.

- Can website domain authority go down even if I’m building more backlinks?

- Yes—your score can drop if you lose high-value links, the tool’s index recrawls and devalues certain links, or competitors gain authority faster. A small dip is common after major index updates and doesn’t always signal an SEO problem.

Build Authority With Consistency

Understanding how domain authority is calculated is useful, but improving it depends on publishing reliably and earning the right links over time.

Skribra generates daily SEO-optimized articles, publishes to WordPress, and taps a backlink exchange network to help lift your authority signals—start with the 3-Day Free Trial.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
