January 24, 2026


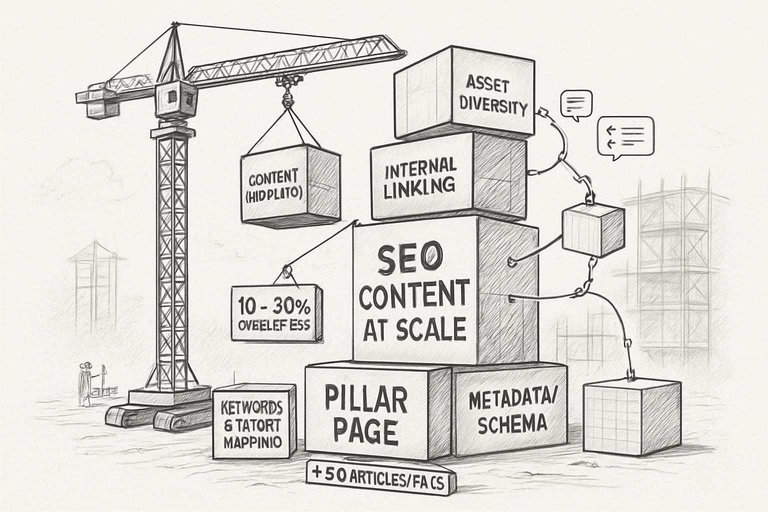

Core Building Blocks of SEO Content at Scale

Scaling SEO content is technical first, creative second. Without strict systems, teams run into self-cannibalization, missed ranking opportunities, and hours wasted on cleanup.

Frameworks prevent messes you don’t want to untangle at scale.

Keywords and Intent Mapping

The keyword list has to be deep and intent-mapped; dumping terms into a spreadsheet doesn’t cut it. If you don’t clarify the purpose behind every page, you’ll stack articles on the same topic and cannibalize your own traffic.

If intent mapping is shallow, expect 10–20% of content to overlap, which is hard to clean up later. Build a living taxonomy and check every brief against it before anyone writes.
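As a rough illustration of checking a brief against the taxonomy, the sketch below flags existing pages that already target the same keyword with the same intent. The `TaxonomyEntry` fields, the intent labels, and the URLs are hypothetical; a real taxonomy would likely need fuzzier matching than exact normalized keywords.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaxonomyEntry:
    url: str
    primary_keyword: str
    intent: str  # hypothetical labels: "informational", "commercial", ...


def normalize(keyword: str) -> str:
    """Lowercase and collapse whitespace so near-identical keywords match."""
    return " ".join(keyword.lower().split())


def find_conflicts(brief_keyword, brief_intent, taxonomy):
    """Return existing entries with the same normalized keyword and intent."""
    key = normalize(brief_keyword)
    return [
        entry
        for entry in taxonomy
        if normalize(entry.primary_keyword) == key and entry.intent == brief_intent
    ]


# Illustrative data only.
taxonomy = [
    TaxonomyEntry("/guides/crm-pricing", "CRM pricing", "commercial"),
    TaxonomyEntry("/blog/what-is-a-crm", "what is a CRM", "informational"),
]

conflicts = find_conflicts("crm  Pricing", "commercial", taxonomy)
for entry in conflicts:
    print(f"Brief overlaps with {entry.url}")
```

A brief that returns any conflicts would go back to the strategist before a writer is assigned.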

Content Templates and Modular Structures

Strict templates are necessary, but real efficiency comes from modular blocks: reusable FAQs, reviews, tables. Without them, writers improvise and the library gets inconsistent.

But overbuilt templates slow production—if modules force in fluff, writers drop them. Templates should be fast to audit and tweak, ten minutes or less per page.

Content Asset Diversity (Types and Formats)

Blog posts alone won’t cover enough ground or answer all user questions.

- Every pillar page needs 5–10 related articles or FAQs

- Skipping supporting content leaves the pillar page stranded, even if it’s comprehensive

- Each format fills a different SEO gap

Miss one, and a competitor takes that ground.

Internal Linking and Content Architecture

Letting linking grow on its own gets messy fast. Plan content clusters and enforce a linking checklist for every new page. Otherwise, money pages end up buried, invisible to users and Google.

Random links don’t help—too many, and each loses value. Keep links targeted and minimal.

Metadata Systems and Schema Integration

Manual metadata management doesn’t work at scale. Use scripts or CMS tools to set defaults and flag missing data.
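A minimal sketch of the "set defaults and flag missing data" idea, assuming page records arrive as plain dictionaries from a CMS export. The field names, the site name, and the 155-character description limit are illustrative assumptions, not fixed rules.

```python
def apply_metadata_defaults(page, site_name="Example Site", max_description=155):
    """Return (metadata, flags): defaults filled in, plus items for human review."""
    flags = []
    meta = dict(page)  # copy so the original record is left untouched

    if not meta.get("title"):
        # Fall back to the H1, but flag it so an editor writes a real title.
        meta["title"] = f"{meta.get('h1', 'Untitled')} | {site_name}"
        flags.append("title: default applied")

    description = (meta.get("description") or "").strip()
    if not description:
        flags.append("description: missing")
    elif len(description) > max_description:
        flags.append("description: too long")

    if not meta.get("canonical"):
        meta["canonical"] = meta.get("url")
        flags.append("canonical: default applied")

    return meta, flags


# Illustrative record only.
page = {
    "url": "https://example.com/crm-pricing",
    "h1": "CRM Pricing Guide",
    "title": "",
    "description": "",
}
meta, flags = apply_metadata_defaults(page)
print(meta["title"])
print(flags)
```

Defaults keep pages from shipping with empty tags, while the flags list gives editors a queue of pages that still need hand-written metadata.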

Schema is tricky: plugins can break on custom layouts and wreck rich snippets. If automation can’t cover edge cases, good content won’t show up in search. Always audit automated output—blanket solutions miss details.
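One way to audit automated schema output is to parse the generated JSON-LD and check that the expected properties are present. The sketch below covers only FAQPage markup and only a few properties (`mainEntity`, `name`, `acceptedAnswer.text`); it is an assumed spot check, not a replacement for a full structured data validator.

```python
import json


def audit_faq_jsonld(raw):
    """Return a list of problems found in a FAQPage JSON-LD string."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    if data.get("@type") != "FAQPage":
        problems.append("@type is not FAQPage")

    questions = data.get("mainEntity") or []
    if isinstance(questions, dict):  # a single question may not be wrapped in a list
        questions = [questions]
    if not questions:
        problems.append("mainEntity is missing or empty")

    for index, question in enumerate(questions):
        if not isinstance(question, dict):
            problems.append(f"question {index}: not an object")
            continue
        if not question.get("name"):
            problems.append(f"question {index}: missing name")
        answer = question.get("acceptedAnswer") or {}
        if not isinstance(answer, dict) or not answer.get("text"):
            problems.append(f"question {index}: missing acceptedAnswer.text")
    return problems


# Illustrative markup with a deliberately empty answer.
broken = json.dumps({
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [{"@type": "Question", "name": "What does it cost?",
                    "acceptedAnswer": {"@type": "Answer", "text": ""}}],
})
print(audit_faq_jsonld(broken))
```

Running a check like this over a sample of rendered pages, rather than over the template alone, is what surfaces the custom-layout edge cases.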

Process Workflows for Consistent SEO Content Production

If workflows are loose, mistakes spread fast. Each unclear step wastes hours per item. Tight, visible steps aren’t bureaucracy—they keep you from redoing whole batches or getting stuck in never-ending reviews. For an in-depth look at addressing process slowdowns, see this guide on fixing content bottlenecks with smart AI.

Brief Creation and Standardization

Standardized briefs are mandatory with 20+ writers. Allowing people to invent formats leads to missing must-haves (internal links, CTAs, and so on) that are only caught during late review. House all briefs in a shared doc and require a checklist. I update templates quarterly based on feedback from editors and SEOs; otherwise, templates go stale within six months.

Assigning Roles and Managing Teams

Writers shouldn’t edit themselves, and SEOs shouldn’t ghostwrite. Every role gets assigned in tracking tools like Asana or Jira. Without this, accountability tanks and projects stall for weeks over unclear reviews.

Rotate leads to avoid burnout—otherwise quality drops. All process steps should be documented so new hires aren’t lost for weeks.

Taxonomy of Content Quality Criteria at Scale

You need standardized, clear quality benchmarks when publishing at scale. Ad hoc checks don’t hold up past 50 pages—they create inconsistency and force you to waste budget fixing issues you could have prevented.

Below are the areas to define before ramping up output.

Baseline Quality Standards for Bulk Output

Ambiguity kills quality once you’re pushing 100+ articles a month. The most common failure: skipping minimum standards. That’s how stale or wrong data sits for years.

At minimum, demand 100% fact accuracy (verified and current), direct keyword-to-intent alignment (checked pre-publish), clear heading structure (H1-H3 required), and no template filler in intros or conclusions. Run every page through technical SEO QA: metadata, links, and mobile speed checks with something like Screaming Frog or Sitebulb.

Unlike checklists that only cover surface-level issues like grammar or broken links, this approach prioritizes issues that directly impact ongoing search performance. On big sites, missing just one area can tank search traffic sitewide.

Editorial Consistency Across Hundreds of Assets

If you don’t enforce a detailed editorial style guide, formatting and terminology break down fast—especially with a large team.

Not versioning or centralizing your editorial docs just guarantees conflicting guidance. Automated tools like Grammarly can spot basic grammar issues, but only editors catch brand voice and wording errors. Retrain your team quarterly or expect drift.

Depth vs. Breadth: Navigating Content Coverage Trade-offs

Trying to cover everything at once guarantees thin content somewhere. Google notices; whole keyword clusters can drop out of the top rankings.

Prioritize topics needing real depth with intent mapping tools, leave others for later. Content maps prevent overlap and wasted effort. Overlap cleanup after launch is a headache.

Managing Source Attribution and Citations at Volume

Letting each writer invent their own citation style makes a mess—especially when sources go dead or turn out unreliable later.

Lock down citation standards and require a reference check before publishing. Automate stats and repeatable sources, but only for standardized data; editors still need to review anything with nuance, or you risk credibility and legal blowback.

Automated Versus Manual Quality Control Layering

Automation catches surface-level problems: broken links, repeated keywords, missing metadata. But it won’t catch tone problems or unclear intent. Manual editorial review still finds the 10–20% of issues that automated checks miss; that’s the difference between passable and credible at scale.

Use automation for bulk checks, human editors for nuance. Both are required when you’re moving quickly. You can learn more about content quality evaluation frameworks that use multi-facet and counterfactual approaches to assess quality at scale.

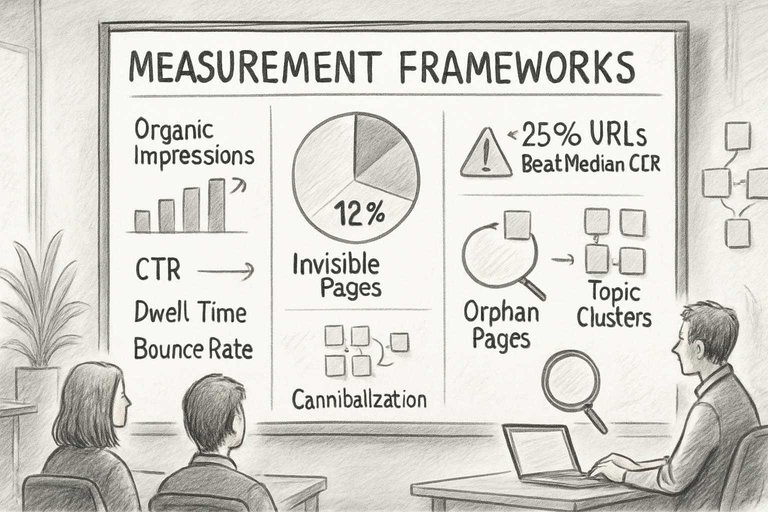

Measurement Frameworks: Gauging Scaled SEO Content Impact

Once you’re past a few dozen pages, number-driven tracking is required. Otherwise, you’re guessing based on vanity metrics or scattered anecdotes. For those interested in concrete demonstrations of these concepts, check out these real-world examples of automated SEO content to see measurement frameworks in action.

These are the performance areas you need to monitor to guide both strategy and daily decisions.

Core Performance Metrics (Visibility, Engagement)

If you don’t analyze data by topic cluster, you’re missing systemic issues. Aggregate performance hides underperformers, which then get ignored.

- Use Google Search Console groupings or Looker Studio (formerly Data Studio) dashboards by topic.

- Track: organic impressions, rankings, CTR, dwell time, bounce rate.

- The real watchpoint: what percent of URLs in a cluster beat your site-wide median CTR. Under 25% means scale is broken, not just a few weak pages.
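The CTR watchpoint can be sketched as a small per-cluster calculation. This example assumes the benchmark is the site-wide median CTR across all URLs and that each row carries a cluster label; both are assumptions, as are the sample numbers. Measured against a group's own median, roughly half of its URLs always beat it, so the comparison only becomes informative per cluster or against a fixed baseline.

```python
from collections import defaultdict
from statistics import median


def share_beating_median(rows):
    """rows: (url, cluster, clicks, impressions) tuples.

    Returns {cluster: share of its URLs whose CTR exceeds the site-wide median}.
    """
    ctrs = [
        (cluster, clicks / impressions)
        for _url, cluster, clicks, impressions in rows
        if impressions > 0
    ]
    site_median = median(ctr for _cluster, ctr in ctrs)

    totals = defaultdict(int)
    winners = defaultdict(int)
    for cluster, ctr in ctrs:
        totals[cluster] += 1
        if ctr > site_median:
            winners[cluster] += 1
    return {cluster: winners[cluster] / totals[cluster] for cluster in totals}


# Illustrative data only.
rows = [
    ("/a", "pricing", 50, 1000),
    ("/b", "pricing", 40, 1000),
    ("/c", "pricing", 30, 1000),
    ("/d", "how-to", 10, 1000),
    ("/e", "how-to", 5, 1000),
    ("/f", "how-to", 35, 1000),
    ("/g", "how-to", 2, 1000),
    ("/h", "how-to", 1, 1000),
]
shares = share_beating_median(rows)
flagged = sorted(cluster for cluster, share in shares.items() if share < 0.25)
print(shares, flagged)
```

In this sample the "how-to" cluster falls under the 25% line while "pricing" does not, which is the kind of systemic gap an aggregate report would hide.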

Evaluating Topical Authority and Content Footprint

Without mapping URLs by topic and intent, you miss where your influence spreads and where you’re open to competitors. Looking only at traffic is a mistake: it leaves you blind to coverage gaps.

Inventory pages monthly to find orphans; on one audit, 12% of pages were invisible to users. Build both keyword coverage (breadth) and high rankings on meaningful terms (depth). Volume alone doesn’t move ranking.
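For the monthly orphan check, one possible approach is to walk the internal link graph from the homepage and list every known URL that is never reached. The sketch assumes you already have a URL inventory (for example from a sitemap) and a crawl export of internal links; the data shapes and URLs here are hypothetical.

```python
from collections import deque


def find_unreachable(all_urls, links, start="/"):
    """Return URLs that cannot be reached by following internal links from start.

    all_urls: set of known URLs; links: {source_url: [target_url, ...]}.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target in all_urls and target not in seen:
                seen.add(target)
                queue.append(target)
    return sorted(set(all_urls) - seen)


# Illustrative data only: "/old-landing" links out but nothing links to it.
all_urls = {"/", "/pricing", "/blog/a", "/blog/b", "/old-landing"}
links = {
    "/": ["/pricing", "/blog/a"],
    "/blog/a": ["/blog/b"],
    "/old-landing": ["/pricing"],
}
orphans = find_unreachable(all_urls, links)
print(orphans)
```

Reachability from the homepage is stricter than "has at least one inbound link", because it also catches groups of pages that only link to each other.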

Diagnosing Cannibalization and Overlap Across Assets

Ignoring cannibalization costs rankings. If multiple pages fight over the same keyword, you’re splitting SEO value.

Tools like Ahrefs handle most of the detection, but manual review is needed for intent mismatches. Too much consolidation can kill good landing pages, but inaction sinks rank all around.
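As a rough first pass before manual review, cannibalization candidates can be pulled from a query-by-page export by looking for queries where more than one URL earns meaningful impressions. The row shape and the 100-impression cutoff are assumptions for illustration, not the method any particular tool uses.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=100):
    """rows: (query, url, impressions) tuples.

    Returns {query: [urls]} for queries where two or more URLs each reach
    min_impressions, with URLs ordered by impressions, highest first.
    """
    by_query = defaultdict(dict)
    for query, url, impressions in rows:
        by_query[query][url] = by_query[query].get(url, 0) + impressions

    flagged = {}
    for query, urls in by_query.items():
        ranked = sorted(urls.items(), key=lambda item: item[1], reverse=True)
        strong = [url for url, count in ranked if count >= min_impressions]
        if len(strong) > 1:
            flagged[query] = strong
    return flagged


# Illustrative data only.
rows = [
    ("crm pricing", "/guides/crm-pricing", 1200),
    ("crm pricing", "/blog/crm-cost", 800),
    ("crm pricing", "/blog/old-post", 20),
    ("what is a crm", "/blog/what-is-a-crm", 5000),
]
candidates = find_cannibalized_queries(rows)
print(candidates)
```

The output is a candidate list only. Two URLs sharing a query can still serve different intents, which is why the manual review step stays in the loop.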

Longitudinal Tracking and Benchmarking

Occasional reporting misses turning points. Monthly dashboards (Looker, Tableau) covering keyword rank, YoY sessions, and cluster growth let you spot real trends.

Benchmark quarterly against competitors or you’ll miss stagnation. Track lagging indicators like conversions or revenue, not just surface engagement.

Attribution Models for Multi-Asset SEO Impact

Attribution is often messy. Credit given only to the last page visited misses all supporting content viewed earlier.

Multi-touch models in GA4 work if you have enough conversions; small numbers just create noise. Segment attribution by content cluster—sometimes authority comes from pages that never convert directly. This only works if URL grouping and goal tracking are clean; broken naming or data hygiene at scale wipes out model accuracy.
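To show why cluster-level attribution changes the picture, here is a deliberately simple linear multi-touch calculation done offline: each page viewed before a conversion gets an equal share of the value, rolled up by cluster. This is not GA4's attribution logic; the path format, cluster mapping, and values are assumptions.

```python
from collections import defaultdict


def linear_attribution_by_cluster(paths, cluster_of):
    """paths: list of (urls_viewed_before_conversion, conversion_value).

    Splits each conversion's value equally across the pages in its path and
    sums the credit per content cluster. Unmapped URLs go to "unmapped".
    """
    credit = defaultdict(float)
    for urls, value in paths:
        if not urls:
            continue
        share = value / len(urls)
        for url in urls:
            credit[cluster_of.get(url, "unmapped")] += share
    return dict(credit)


# Illustrative data only.
cluster_of = {"/blog/crm-basics": "education", "/pricing": "money-pages"}
paths = [
    (["/blog/crm-basics", "/pricing"], 100.0),
    (["/pricing"], 50.0),
]
credit = linear_attribution_by_cluster(paths, cluster_of)
print(credit)
```

Under last-touch, the education cluster in this sample would get zero credit. The "unmapped" bucket also makes the data hygiene point concrete: if it grows, URL grouping has drifted and the model's output should not be trusted.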

Scaling Pitfalls and Failure Patterns to Avoid

Scaling up SEO always shows weaknesses that never come up when teams are small.

What worked for 50 articles stops working at 500. Edge cases show up everywhere, QA becomes overwhelming, and old workflow gaps suddenly block progress.

These are the things that most commonly break under pressure:

When Process Outpaces Quality

As output ramps up, teams cut corners. Editorial checks get skipped or rushed. Fact-checking drops off fast. Quality scores fall 20% or more in just a quarter if no one’s tracking it. Automation and batching don’t make up for missed research and review.

Engagement and rankings drop, and by then the shortcuts are everywhere. Most guides suggest simply increasing headcount or content velocity to keep up, but this overlooks the cumulative erosion of standards that automation can accelerate if left unchecked.

Over-Templatization and Loss of Relevance

Heavy templating delivers speed, but the content gets padded and samey. Intros look identical, sections lose purpose, and the unique angles vanish. Google drops those URLs inside six months.

If the team can’t break the template when needed, content turns generic. Both readers and algorithms move on.

Neglected Maintenance and Decay

Content portfolios quickly balloon, then age out. Outdated stats, months-old 404s, and broken links pile up. Thirty percent or more of pages usually have fixable errors—someone just needs to own upkeep.

A year without a full audit and traffic starts sliding. Some posts lose almost all their value if they rely on old info. One way to avoid this is to implement enterprise SEO tactics that scale, like systematic audits and metadata strategies.

Team Fatigue and Onboarding Bottlenecks

Push teams past their limits and error rates double. New writers come on without proper onboarding, repeat mistakes, and burn out.

Updating onboarding before hiring is basic, but gets skipped. Ignore it and you’ll see productivity drop, morale tank, and churn increase.

Compliance Drift Across Expanding Content Sets

With a small team, standards hold. Add freelancers or scale, and alignment breaks. Branded disclaimers and needed phrasing disappear.

If compliance checks aren’t routine for both drafts and live content, you end up with risky pages and expensive, slow cleanup when audits hit.

Evaluation Models for Large-Scale SEO Content Programs

When you’re publishing hundreds of pieces a month, manual review always lags. Structured evaluations need to be built in from the start.

Ways to test SEO ops at scale:

Horizontal Audits Across Content Types

Audit by format—FAQs, buying guides, etc.—quarterly. This finds missing metas, misaligned CTAs, or author bio problems.

Skip this and template drift becomes permanent; user journeys fail.

Vertical Audits Within Topic Hubs

Audit silos one at a time. This turns up duplicate keywords and missing subtopics.

This works only if your audit tool pulls related URLs fast; without that, you’re blind to gaps or overlaps.

Sampling and Spot-Check Protocols

Don’t review everything. Use stratified sampling by content age and writing team.

Reviewing just the newest content misses bigger, systemic problems. Sampling has to be representative, or widespread errors go unchecked.
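Stratified sampling by content age and writing team can be sketched as follows. The age buckets, team names, and the per-stratum sample size are illustrative assumptions; the fixed seed only makes a given audit reproducible.

```python
import random
from collections import defaultdict


def stratified_sample(pages, per_stratum=2, seed=0):
    """Pick up to per_stratum pages from every (age_bucket, team) combination."""
    rng = random.Random(seed)  # seeded so the same audit can be re-run
    strata = defaultdict(list)
    for page in pages:
        strata[(page["age_bucket"], page["team"])].append(page)

    sample = []
    for key in sorted(strata):
        group = strata[key]
        sample.extend(rng.sample(group, min(per_stratum, len(group))))
    return sample


# Illustrative inventory: 3 age buckets x 2 teams x 3 pages each.
pages = [
    {"url": f"/{age}/{team}/{n}", "age_bucket": age, "team": team}
    for age in ("0-6m", "6-18m", "18m+")
    for team in ("in-house", "freelance")
    for n in range(3)
]
sample = stratified_sample(pages)
print(len(sample), "pages selected for review")
```

Because every age and team combination contributes pages, an error pattern confined to older freelance content, for example, cannot hide behind a sample of recent in-house work.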

Stakeholder Feedback Systems

Internal audits miss what users notice. Set regular cross-team reviews.

Analytics can’t replace real feedback. Direct input is how recurring issues surface before they cause audience drop-off.

Iterative Improvement Cadences

Annual refreshes aren’t fast enough. High-priority content needs monthly review, the rest quarterly or twice a year.

Too many updates at once jam teams up; something older always gets ignored even if it still drives results.
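A review cadence like this can be turned into a capped work queue. The sketch below assumes intervals of 30, 90, and 180 days for the monthly, quarterly, and twice-yearly tiers, and orders overdue pages oldest first so nothing sits ignored indefinitely. The priority labels and the `limit` parameter are hypothetical.

```python
from datetime import date, timedelta

# Assumed day counts for monthly, quarterly, and twice-yearly review.
REVIEW_INTERVAL_DAYS = {"high": 30, "standard": 90, "low": 180}


def due_for_review(pages, today, limit=None):
    """Return URLs whose review interval has elapsed, oldest review first."""
    due = [
        page
        for page in pages
        if today - page["last_reviewed"]
        >= timedelta(days=REVIEW_INTERVAL_DAYS[page["priority"]])
    ]
    due.sort(key=lambda page: page["last_reviewed"])
    urls = [page["url"] for page in due]
    return urls if limit is None else urls[:limit]


# Illustrative data only.
pages = [
    {"url": "/pricing", "priority": "high", "last_reviewed": date(2026, 1, 2)},
    {"url": "/blog/a", "priority": "standard", "last_reviewed": date(2025, 9, 1)},
    {"url": "/blog/b", "priority": "low", "last_reviewed": date(2025, 12, 1)},
]
queue = due_for_review(pages, today=date(2026, 2, 10))
print(queue)
```

Passing a `limit` keeps each cycle's batch small enough for the team to finish, and the oldest-first ordering means the pages that get deferred are the most recently reviewed ones.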

Frameworks for Balancing Automation and Human Expertise

Balancing automation with human oversight is essential in enterprise SEO. Get it wrong and either you burn cash on manual effort or compromise your brand with unchecked automation.

These frameworks keep both quality and speed in check.

Decision Trees for Content Automation Versus Manual Input

Decision trees only help if you’re realistic about what automation can handle.

I make sure regulatory, legal, or anything that creates brand or liability risk goes straight to manual review. Teams that skip this get burned—public corrections, retraining, fallout.

Use automation for basic work like product descriptions. Anything nuanced or compliance-related gets human review or a hybrid. If you don’t keep auditing your rules as scale and complexity increase, mistakes start compounding fast.
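The routing rules above can be written down as a small function so they are auditable rather than tribal knowledge. The content type names, the set of automatable types, and the fallback to hybrid for unknown types are assumptions for illustration.

```python
# Assumed set of simple, fact-bound formats that are safe to automate.
AUTOMATABLE_TYPES = {"product_description", "meta_description", "location_page", "faq"}


def route_content(content_type, has_compliance_risk=False, needs_nuance=False):
    """Return "manual", "hybrid", or "automated" for a content request."""
    if has_compliance_risk:
        # Regulatory, legal, brand, or liability risk always goes to humans.
        return "manual"
    if needs_nuance:
        return "hybrid"
    if content_type in AUTOMATABLE_TYPES:
        return "automated"
    # Unknown types default to hybrid until someone reviews the rules.
    return "hybrid"


print(route_content("product_description"))
print(route_content("product_description", has_compliance_risk=True))
print(route_content("thought_leadership"))
```

Keeping the rules in one place makes the periodic rule audit practical: changing what counts as automatable is a one-line, reviewable change.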

Algorithmic Content Generation: Use Cases and Guardrails

Generative AI is fast, but if you use it without tight limits, you end up with keyword stuffing, duplicate content, or noise.

Keep these tools to template-based, fact-bound use cases: meta, location pages, FAQs. Every batch gets sampled by humans. Skipping this once led to a small error spreading to thousands of URLs before anyone noticed. Without boundaries and anomaly tracking, you lose control.

Hybrid Editorial Models in Practice

Most teams arrive at a hybrid model after failing with pure automation.

You increase output on simple formats if you let algorithms draft and editors fix for context and voice. But if editors treat the AI draft as final, errors multiply and brand tone drifts. I require real editing—rewrite or annotate, no pass-through proofreading.

Maintaining Creativity Amidst Scale Pressures

Pushing volume kills creativity. If you don’t block time for unique features or original campaigns, content slips into a rut.

I set a quota: at least 20% of the calendar must feature exclusive interviews, data, or custom angles. Skip brainstorming and the drop in repeat readership happens within months. Measuring and enforcing this is the only way it sticks.

Human-in-the-Loop Safeguards

Checkpoints aren’t for show—they catch what automation doesn’t: compliance risks, brand drift, outlier errors.

Minimum: review by author, pre-publish check by someone else, and a post-launch audit by an independent team. Content tied to compliance or breaking news always gets a second look. Neglect this and incident fallout doubles. At scale, peer review and clear stop-the-line authority are necessary to stop mistakes from piling up.

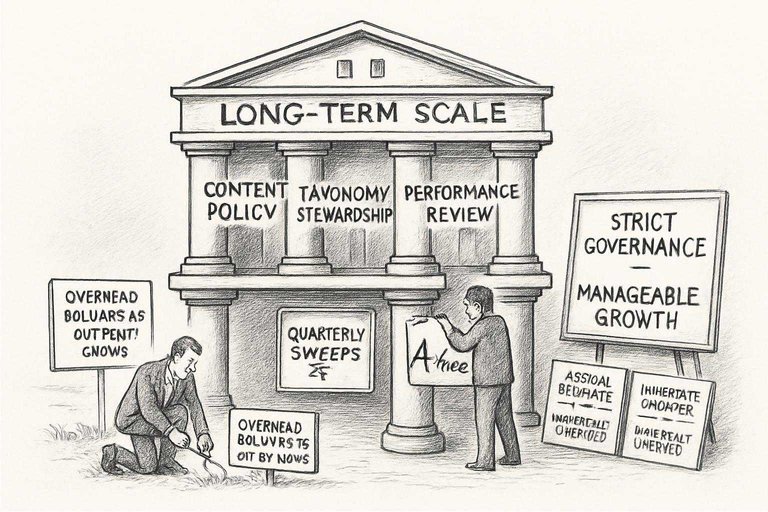

Governance Structures That Sustain Long-Term Scale

As output grows, overhead balloons. If you wait for signs of chaos, cleaning up takes a long time. Strict governance from the start is far easier and cheaper than pulling weeds after they root across thousands of pages.

These practices keep growth manageable.

Content Policy Frameworks for Risk Management

Content policies only work if they’re practical and right there in the workflow. Tie rules to examples: banned phrases or images, DMCA triggers—make it explicit.

Update policies and checklists immediately after any incident; don’t wait for review cycles. Delays invite repeats.

Taxonomy Ownership and Stewardship Models

No clear taxonomy owner? You’ll get duplicate or orphaned categories—every time.

- Assign someone from SEO or product as central manager

- Set up subject stewards

- Anyone can propose, but approval and regular cleanups are non-negotiable

Skip quarterly audits and your site’s structure will fall apart in months. Someone has to be able to say “no.”

Performance Review and Rollback Protocols

You can’t ignore underperforming content. I run quarterly sweeps for traffic and conversion drops.

If a page falls below a threshold twice in a row, it gets updated, redirected, or deindexed. Teams often hesitate to remove dead content. This just drags rankings down, with long-term impact.
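The "below threshold twice in a row" rule is easy to encode. The sketch assumes one metric value per page per quarterly sweep, stored oldest first; the threshold and the numbers are illustrative.

```python
def pages_to_act_on(history, threshold):
    """history: {url: [metric per quarterly sweep, oldest first]}.

    Returns URLs whose two most recent sweeps were both below threshold.
    """
    return sorted(
        url
        for url, values in history.items()
        if len(values) >= 2 and all(value < threshold for value in values[-2:])
    )


# Illustrative data only: quarterly organic sessions per page.
history = {
    "/a": [900, 400, 300],  # below 500 twice in a row: act
    "/b": [200, 800, 300],  # only the latest sweep is below: wait
    "/c": [100],            # too new to judge
}
to_review = pages_to_act_on(history, threshold=500)
print(to_review)
```

The function only produces the list. Choosing between update, redirect, and deindex for each flagged page remains an editorial decision.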

Cross-Department Collaboration Patterns

SEO, legal, and brand teams need forced, regular sync. Slack threads and email updates get ignored.

Set up mandatory meetings and real-time dashboards for all content and changes. Skipping this has led to outdated or non-compliant releases that needed emergency correction. Visibility in real time is necessary to avoid these mistakes.

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
