January 25, 2026


When Traffic Estimates Don’t Match Reality

Anyone managing search traffic has hit the wall where keyword tool estimates and actual analytics don’t add up.

Ahrefs might claim a term pulls 2,000 visits a month while Google Analytics shows 200. Planning around the tool’s number alone wastes time and budget; catching the mismatch early saves both.

Here’s what to look for, why estimates diverge, and how to double-check before making big calls.

Common Signs: Sudden Drops or Spikes in Predicted Search Volume

If you track keywords week to week, you’ll see terms drop 60% overnight for no apparent reason. Some teams change strategy off these swings.

But if Google Search Console shows steady numbers, the swing most likely reflects a change in the tool’s model, not in user behavior. Use these drastic changes as a cue to check multiple data sources. Wild tool-only swings are almost always noise.
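One way to apply this check is to compare the week-over-week change in the tool’s estimate with the change in your own Search Console impressions. The sketch below is illustrative only: the function names and the 50% and 15% thresholds are assumptions chosen for the example, and the inputs are numbers you would export yourself.

```python
def pct_change(previous, current):
    """Relative change from previous to current (e.g. -0.6 for a 60% drop)."""
    if previous == 0:
        raise ValueError("previous value must be non-zero")
    return (current - previous) / previous


def classify_swing(tool_prev, tool_now, gsc_prev, gsc_now,
                   swing_threshold=0.5, steady_threshold=0.15):
    """Label a week-over-week change in a tool's volume estimate.

    tool_prev / tool_now: the keyword tool's estimated volume (hypothetical export).
    gsc_prev / gsc_now:   Search Console impressions for the same query.
    Both thresholds are assumed values, not taken from any tool.
    """
    tool_delta = pct_change(tool_prev, tool_now)
    gsc_delta = pct_change(gsc_prev, gsc_now)
    if abs(tool_delta) < swing_threshold:
        return "no_swing"
    if abs(gsc_delta) <= steady_threshold:
        # The tool moved a lot while first-party data stayed flat.
        return "likely_tool_noise"
    return "check_user_behavior"


# The tool reports a 60% drop while impressions barely move.
print(classify_swing(2000, 800, 950, 930))
```

Tune both thresholds to your own data; a query with naturally volatile impressions needs a wider "steady" band than an evergreen one.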

Root Cause: Divergent Clickstream Data Sources

Each tool uses different data pipelines. Ahrefs pulls from traffic panels; KWFinder gathers from browser extensions. Their models fill gaps with different algorithms, so you can see 2x differences between tools for the same keyword. No single tool has the correct number.

- Get a ballpark by comparing multiple sources.

- When stakeholders want a forecast, make it clear the figure is approximate, not precise.

Real-World Results: Campaigns Built on Misleading Traffic

Teams that go all-in on tool volume get burned. I’ve seen conversions drop 30% after launching content for terms that looked good in keyword tools, but didn’t bring real traffic.

A keyword that tools rate as low-volume can turn out to be a top performer when the tools underestimate intent. Compare projections with analytics after a campaign. If traffic isn’t close to the prediction three months in, suspect the data, not just content quality.
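The three-month comparison can be reduced to a single ratio of actual to projected traffic. This is a minimal sketch under stated assumptions: the helper names and the 0.5 cutoff are hypothetical, and the sample figures reuse the 2,000 versus 200 example from the introduction.

```python
from statistics import mean


def projection_ratio(projected_monthly_visits, actual_monthly_visits):
    """Return average actual monthly visits as a fraction of the projection."""
    if projected_monthly_visits <= 0:
        raise ValueError("projection must be positive")
    return mean(actual_monthly_visits) / projected_monthly_visits


def needs_data_review(projected_monthly_visits, actual_monthly_visits, min_ratio=0.5):
    """Flag a keyword whose actual traffic is below min_ratio of the projection.

    min_ratio is an assumed cutoff; pick one that fits your own tolerance.
    """
    return projection_ratio(projected_monthly_visits, actual_monthly_visits) < min_ratio


# Three months of analytics visits against a 2,000-visit tool projection.
print(needs_data_review(2000, [190, 200, 210]))
```

A flagged keyword is a prompt to recheck the source estimate first, before rewriting the content.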

How to Cross-Check: Layering Third-Party Verification

Single-tool reliance misses too much. I put estimates from several tools like Ahrefs, SEMrush, and Keyword Planner side by side and look for a range, not a point.

If two out of three agree, it’s probably close. Google Trends won’t give counts, but shows if the topic has long-term traction.

The real test is your analytics. If traffic keeps diverging from projections, revise your model. Don’t just blame the tools.
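The "range, not a point" habit and the two-out-of-three rule can be sketched in a few lines. Everything here is an assumption for illustration: the volumes are made up and typed in by hand (no tool API is called), and the 25% tolerance for "agreement" is an arbitrary choice.

```python
from itertools import combinations


def summarize_estimates(estimates, tolerance=0.25):
    """Summarize per-tool volume estimates as a range plus the pairs that agree.

    Two tools "agree" when their estimates differ by at most `tolerance`,
    measured as a fraction of the larger of the two values.
    """
    if len(estimates) < 2:
        raise ValueError("need estimates from at least two tools")
    values = list(estimates.values())
    agreeing_pairs = [
        (tool_a, tool_b)
        for (tool_a, vol_a), (tool_b, vol_b) in combinations(estimates.items(), 2)
        if abs(vol_a - vol_b) <= tolerance * max(vol_a, vol_b)
    ]
    return {"low": min(values), "high": max(values), "agreeing_pairs": agreeing_pairs}


# Hypothetical monthly volumes copied by hand from each tool's interface.
summary = summarize_estimates(
    {"ahrefs": 2000, "semrush": 1700, "keyword_planner": 900}
)
print(summary)
```

Report the low and high values to stakeholders together, and treat an empty `agreeing_pairs` list as a sign that no estimate deserves much weight yet.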

When Keyword Difficulty Scores Send You Down the Wrong Path

Anyone who’s run live tests against keyword difficulty ratings knows they’re often wrong. Keywords scored ‘easy’ sometimes have results pages held by old domains with heavy link profiles. ‘Hard’ scores show up on new terms that few sites actually defend. The scores are a filter, not a decision. Follow them blindly and you waste effort fighting the wrong battles.

Symptom: Mismatch Between Predicted and Actual SERP Competitiveness

Rookies sort by ‘easy’ and publish without manual checks. These scores mostly use backlink counts and domain stats, but ignore brand dominance, SERP features, and update recency. I’ve chased keywords labeled ‘easy’ only to find the top 4 slots locked by Wikipedia and government sites for over a year. Some ‘hard’ results just need one strong article and fall fast. Manual SERP review is essential. Skipping it means losing time and burning resources. For more context, see how different SEO tools produce varying keyword difficulty scores and why it’s important to understand their methodology.

When Search Intent Classifications Lead You Astray

Platforms like Ahrefs and KWFinder often mislabel intent for keywords. These are algorithmic guesses, not facts.

I’ve watched campaigns waste thousands targeting keywords listed as informational. Traffic poured in, but conversions were awful—the label didn’t match how Google ranked content for that query.

Relying only on these intent tags can cause major problems, especially at scale.

Warning Sign: Content Misses User Expectations Despite High Volume

One common problem: chasing ‘high-volume informational’ terms, publishing exhaustive guides, and then seeing 80% bounce rates with users gone in under 20 seconds. That shows your content doesn’t match what searchers or Google actually want.

This happens a lot with keywords like ‘running shoes’ or ‘software reviews’—tools call them informational, but the SERP is all product cards and tables. Ignore the live search results and you can burn months on work nobody wants.

Underlying Problem: Varying Interpretations of Intent Signal

Each tool decodes SERP features its own way. I’ve seen keywords like “credit card reviews” flagged as informational one week and commercial the next, depending on which content types were ranking when the tool crawled.

Google’s results shift frequently, especially for YMYL (Your Money or Your Life) queries, where the dominant intent type can change as often as twice in a single week for high-volume terms. Tool models can’t keep up with that kind of volatility: they only reflect what was visible at the last crawl, not what’s happening now.

Business Fallout: Ineffective Content That Misses the Mark

If you take intent scores at face value, KPIs suffer. Bounce rates go up, engagement craters, and you fill your site with content no one uses. That hits conversions.

Less obviously, morale drops—teams stuck with low-performing work lose speed and confidence, and you burn more budget per lead.

How to Validate: Manual SERP Reviews Before Commitment

Before committing to big content builds, I always check the live Google SERP for target keywords. Five minutes in incognito is usually enough. Look at the top ten: see if they’re guides, products, or comparisons. This has saved me from investing in the wrong format. Manual checks catch intent mismatches that tools miss, especially for volatile topics or when Google changes the SERP layout.
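If you jot down a format label for each of the top ten results during that review, a quick tally makes the dominant format explicit. This is a sketch, not a classifier: the labels are assigned by hand, and the label names and 50% share cutoff are assumptions.

```python
from collections import Counter


def dominant_format(labels):
    """Return the most common result format and its share of the reviewed results."""
    if not labels:
        raise ValueError("no results were labeled")
    fmt, count = Counter(labels).most_common(1)[0]
    return fmt, count / len(labels)


def format_supports_plan(labels, planned_format, min_share=0.5):
    """True when the planned content format is also the dominant SERP format."""
    fmt, share = dominant_format(labels)
    return fmt == planned_format and share >= min_share


# Hand-assigned labels for the top ten results of a hypothetical query.
top_ten = ["product"] * 6 + ["comparison"] * 3 + ["guide"]
print(dominant_format(top_ten))
print(format_supports_plan(top_ten, "guide"))
```

In this made-up example a planned guide would be competing against a page of product results, which is the mismatch the manual check is meant to catch.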

What Happens When SERP Data Lags or Skews

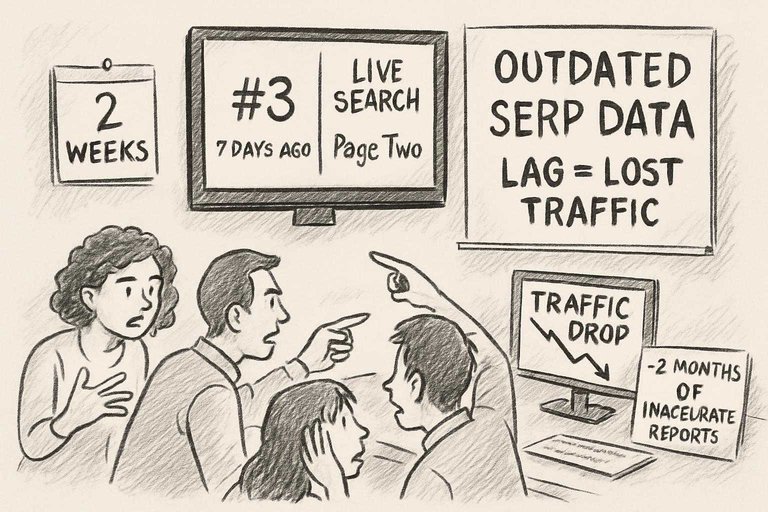

SEO tool data is only as current as the tool’s last crawl of Google. Relying on these snapshots without considering data age can waste weeks. I’ve regularly seen tool-reported rankings lag by up to seven days. In fast-moving niches, that gap can cost you traffic to new competitors before you even notice. For a deeper look at how these issues play out in real situations, see these real-world examples of automated SEO content where delayed SERP data significantly impacted strategy.

Symptom: Outdated or Inconsistent Ranking Snapshots

If your tool shows you ranking #3 but a live incognito search says you’re on page two, you’re seeing a delay in action. I’ve watched this out-of-date ranking stick around for up to two weeks during Google updates. Teams relying on these numbers end up optimizing for the wrong targets. Sometimes, they don’t spot new competitors until traffic drops.

Technical Explanation: Crawling Frequency and Indexing Gaps

Ahrefs and KWFinder crawl Google on their own schedules—sometimes every few days, sometimes less. For news or trending topics, that’s not enough; I’ve seen Ahrefs completely miss surge and drop patterns in active SERPs.

Regional mismatches happen too—if your tool crawls from the US but your audience is elsewhere, the rankings won’t line up. These aren’t errors, just lagging data—assume all tool data is at least somewhat behind what’s live. You can learn more about how Ahrefs collects and updates its keyword and search traffic metrics to understand the pace and limitations of SERP tracking.
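A simple guard against acting on lagging data is to record the date each ranking snapshot was last updated and refuse to trust anything older than a set limit. The sketch assumes you note that date yourself; the function names and the seven-day default are illustrative, not part of any tool.

```python
from datetime import date


def data_age_days(last_crawled, today):
    """Number of whole days between the snapshot date and today."""
    return (today - last_crawled).days


def is_stale(last_crawled, today, max_age_days=7):
    """True when the snapshot is older than max_age_days (an assumed limit)."""
    return data_age_days(last_crawled, today) > max_age_days


# A snapshot last updated on 10 January, checked on 25 January.
print(is_stale(date(2026, 1, 10), date(2026, 1, 25)))
```

For news or trending topics a much shorter limit than seven days is probably appropriate.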

Operational Cost: Pursuing Strategies Based on Old Data

Working off stale data can mean spending a month on keywords that have already fallen out of the top results. Agencies send reports showing progress that isn’t real because they’re behind by a couple of crawl updates.

Clients frequently withdraw or cut spending after seeing just two consecutive months of inaccurate or outdated reporting. By the time you have up-to-date numbers, your earlier strategy is already off and needs rework.

Keeping Data Current: Setting Up External SERP Alerts

I’ve started using browser plugins and outside services with near-daily crawling for high-value queries. These alert me to changes outside normal crawl cycles. It’s extra effort, but closes the data gap—crucial around big updates or campaign launches.

Misalignments in Metric Definitions That Derail Decisions

Pulling ‘search volume’ or ‘CPC’ from Ahrefs and KWFinder for the same keyword rarely gives you the same numbers. The difference isn’t random. Each tool uses its own formulas and definitions. Treating these as interchangeable leads to wasted ad spend or chasing the wrong keywords while thinking you’ve got the right answer. To reduce these issues, it helps to consult top resources on simplifying SEO workflows, which can guide you toward more consistent, reliable data use.

Red Flag: Tracking Discrepancies Across Keyword Tools

Sometimes one tool shows double the search volume for a keyword compared to another. Usually, that’s because of averaging methods: Ahrefs may use a 12-month average, while KWFinder might look only at last month or the last quarter.
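A small worked example shows how the averaging window alone can produce a 2x gap. The monthly figures below are invented for illustration (a keyword that is flat for ten months and then rises); they are not taken from any tool.

```python
from statistics import mean

# Hypothetical monthly searches, oldest first: flat, then a late-year rise.
monthly_searches = [800] * 10 + [2000, 2000]

twelve_month_average = mean(monthly_searches)        # smooths the rise away
last_quarter_average = mean(monthly_searches[-3:])   # partly reflects the rise
last_month = monthly_searches[-1]                    # reflects only the rise

print(twelve_month_average, last_quarter_average, last_month)
print(last_month / twelve_month_average)
```

All three numbers describe the same keyword correctly, so a forecast needs to state which window it uses before the figures are compared.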

CPC is worse. I’ve seen KWFinder list CPC 40% higher because of slow updates from Google Ads. Plugging these numbers directly into spreadsheets doesn’t give useful forecasts until you confirm how they were generated.

How Definitions Shift: Hidden Calculation Differences

Tools default to different markets. Ahrefs might give you US-only data while KWFinder pulls global. If you miss that, benchmarks are worthless.

Then there’s aggregation: singular and plural grouped together in one tool, split in another. Looks like an error, but it’s just how each tool is built. Unless you check the docs and run side-by-side tests, you’ll waste time explaining “spikes” that are just quirks of the software.

Compounding Effects: Inconsistent Reporting Across Teams

One team uses KWFinder. Another uses Ahrefs. At review time the numbers never align.

I’ve watched teams burn entire sprints reconciling mismatches instead of fixing campaigns. Worse, leadership can’t trust the budget or prioritize properly if everyone swaps data sources halfway through.

Standardizing Process: Building a Keyword Data Playbook

I use playbooks that spell out tool source, metric definitions, data freshness, and region. Unless everyone pulls (for example) the US 12-month average from Ahrefs for every report, trend lines and ROI models are just guesses.

Bring on someone new without this and they’ll spend weeks fixing data instead of analyzing it. Standardizing is practical, not just paperwork.
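A playbook like this can also be written down as data, so that any pull departing from the standard is caught before it reaches a report. The sketch below is one possible shape, not a prescribed format: the class names, field names, and standard values are assumptions that mirror the US, 12-month, Ahrefs example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullStandard:
    """The agreed settings every keyword data pull should use."""
    tool: str
    region: str
    averaging: str


@dataclass(frozen=True)
class KeywordPull:
    """Settings recorded alongside one keyword data pull."""
    keyword: str
    tool: str
    region: str
    averaging: str


# Hypothetical team standard, mirroring the example in the text.
STANDARD = PullStandard(tool="ahrefs", region="US", averaging="12-month")


def deviations(pull, standard=STANDARD):
    """List the fields where a pull departs from the playbook standard."""
    return [
        field
        for field in ("tool", "region", "averaging")
        if getattr(pull, field) != getattr(standard, field)
    ]


pull = KeywordPull("running shoes", tool="kwfinder", region="global", averaging="last-month")
print(deviations(pull))
```

An empty list means the pull is comparable with earlier reports; anything else is worth resolving before the numbers go into a trend line.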

Written by

Skribra

This article was crafted with AI-powered content generation. Skribra creates SEO-optimized articles that rank.
